AWS for Industries

Telco Meets AWS Cloud: Deploying DISH’s 5G Network in AWS Cloud

DISH Network is deploying the first stand-alone, cloud-native, autonomous 5G network. The company envisions a complete cloud-native 5G network with all its functions, except minimal components of the Radio Access Network (RAN), running in the cloud with fully automated network deployment and operations.

In this blog post, we describe DISH’s approach to building a scalable 5G cloud-native network in its entirety on AWS. The blog provides details on how DISH is utilizing the AWS global infrastructure footprint, native services and on-demand scalable resources to benefit from the disaggregated nature of a cloud-native 5G Core and RAN network functions. We also discuss the network’s cloud infrastructure integration with parts of DISH’s RAN network that will continue to run on-premises. Telecom business owners and technology practitioners will learn the benefits of utilizing AWS Cloud as a 5G core platform, as it enables faster innovation and agility.

System Design Guiding Principles

To achieve DISH’s ambitious 5G rollout target, the company’s architecture team partnered with AWS to design a scalable, automated platform to run its 5G functions. Because this deployment is an industry first, the teams used the following guidelines in architecting the new platform:

- Maximize the use of cloud infrastructure and services.

- Enable the use of 5G components for services in multiple target environments (Dev/Test/Production/Enterprise) with full automation.

- Maximize the use of native automation constructs provided by AWS instead of building overlay automation.

- Maintain the flexibility to use a mix of cloud native APIs as well as existing telecom protocols.

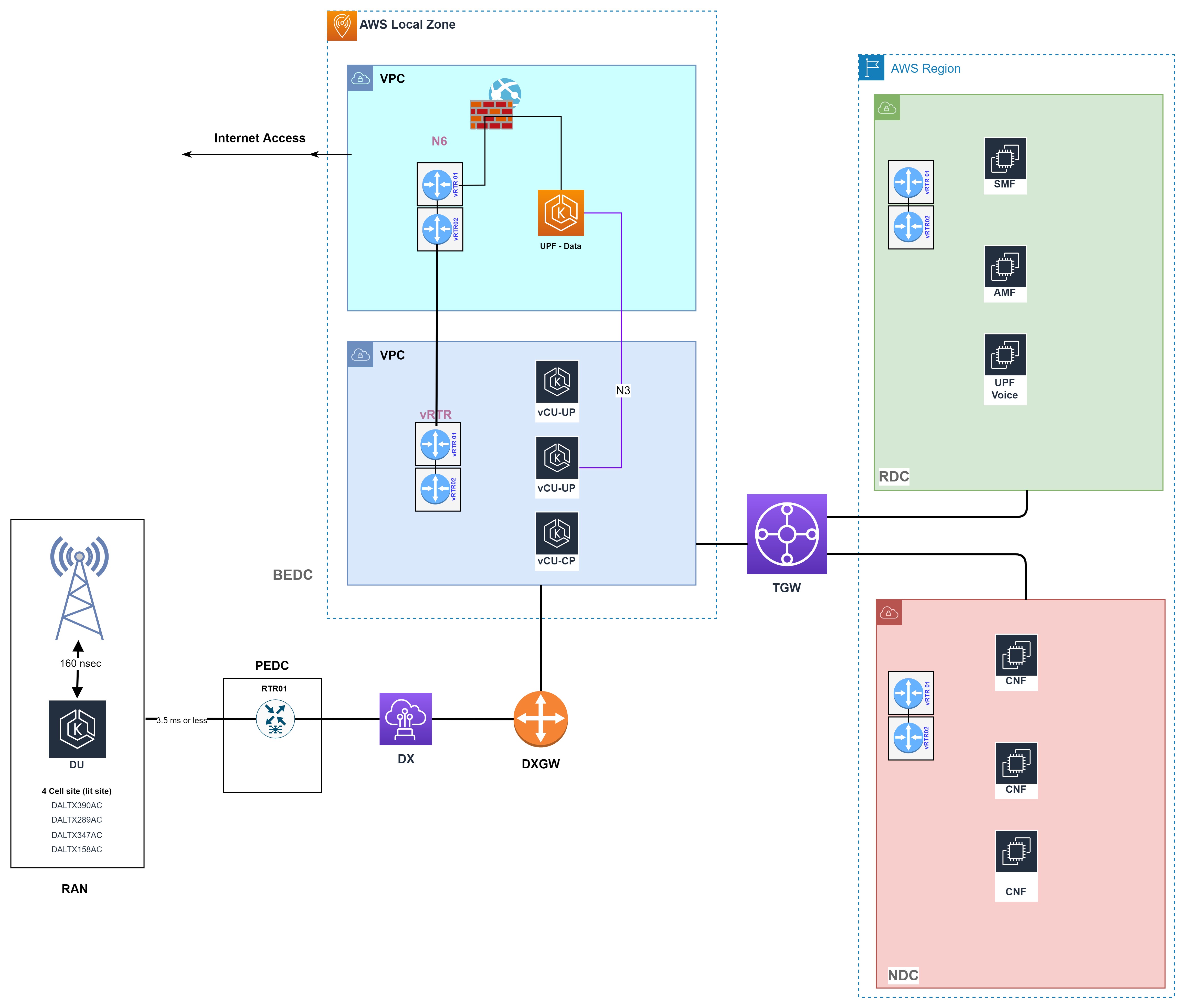

Figure 1: DISH 5G Cloud Architecture


Deployment in AWS Cloud

The architecture of DISH’s 5G network leverages the distributed nature of 5G cloud-native network functions and the flexibility of the AWS Cloud, which allows each 5G network function to be placed where it performs best based on its latency, throughput and processing requirements. Through this design, DISH aims to provide nationwide 5G coverage.

DISH’s network design utilizes a logical hierarchical architecture consisting of National Data Centers (NDCs), Regional Data Centers (RDCs) and Breakout Edge Data Centers (BEDCs) (Fig 1) to accommodate the distributed nature of 5G functions and the varying requirements for service layer integration. BEDCs are deployed in AWS Local Zones hosting 5G NFs that have strict latency budgets. They are connected with DISH’s Passthrough Edge Data Centers (PEDC), which serve as an aggregation point for all Local Data Centers (LDCs) and cell sites in a particular market. BEDCs also provide internet peering for general 5G data service and enterprise customer-specific private network service.

DISH is pioneering the deployment of a 5G network using O-RAN standards in the United States. An O-RAN network consists of an RU (Radio Unit), which is deployed on towers, and a DU (Distributed Unit), which controls the RU. These units interface with the Centralized Unit (CU), which is hosted in the BEDC at the Local Zone. Combined, these pieces provide a full RAN solution that handles all radio-level control and subscriber data traffic.

Colocated in the BEDC is the User Plane Function (Data Network Name (DNN) = Internet), which anchors user data sessions and routes them to the internet. The BEDCs leverage local internet access available in AWS Local Zones, which allows for a better user experience while optimizing network traffic utilization. This type of edge capability also enables DISH enterprise customers and end users (gamers, streaming media and other applications) to take full advantage of 5G speeds with minimal latency. DISH has access to 16 Local Zones across the U.S. and is continuing to expand. For the latest information about Local Zones, visit the AWS Local Zones page.

RDCs are hosted in the AWS Region across multiple Availability Zones (AZs). They host 5G subscribers’ signaling processes, such as authentication and session management, as well as voice for 5G subscribers. These workloads can tolerate relatively high latencies, which allows for a centralized deployment throughout a Region, resulting in cost efficiency and resiliency. For high availability, three RDCs are deployed in a Region, each in a separate AZ. An AZ is one or more discrete data centers with redundant power, networking and connectivity in an AWS Region. All AZs in an AWS Region are interconnected with high-bandwidth, low-latency networking over fully redundant, dedicated metro fiber. CNFs deployed in the RDC utilize the AWS high-speed backbone to fail over between AZs for application resiliency. CNFs like AMF and SMF, which are deployed in the RDC, continue to be accessible from the BEDC in the Local Zone in case of an AZ failure: the backup CNF in a neighboring AZ takes over and services the requests from the BEDC.

The NDCs host nationwide services such as the subscriber database, IMS (IP Multimedia Subsystem, used for voice calls), OSS (Operations Support System) and BSS (Business Support System). Each NDC is hosted in an AWS Region and spans three AZs for high availability. To meet geographical diversity requirements, three NDCs are built in three U.S. Regions (us-west-2, us-east-1 and us-east-2). AWS Regions us-east-1 and us-east-2 are within a 15 ms delay budget of each other, while us-east-1 to us-west-2 is within a 75 ms delay budget.

Figure 2: DISH 5G Architecture in AWS Cloud


Cloud Infrastructure Architecture

The DISH 5G network’s architecture utilizes Amazon Virtual Private Cloud (Amazon VPC) to represent NDCs, RDCs and BEDCs (collectively, xDCs). Amazon VPC enables DISH to launch CNF resources on a virtual network. This virtual network is intended to closely resemble an on-premises network, but also contains all the resources needed for data center functions. The VPCs hosting each of the xDCs are fully interconnected utilizing the AWS global network and AWS Transit Gateway. AWS Transit Gateway is used in AWS Regions to provide scalable, resilient connectivity between the VPCs deployed in the NDCs, RDCs and BEDCs (Fig 2).

AWS Direct Connect provides connectivity from RAN DUs (on-prem) to AWS Local Zones where cell sites are homed. Cell sites are mapped to a particular AWS Local Zone based on proximity to meet 5G RAN mid-haul latency expected between DU and CU.
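The proximity-based homing described above can be sketched as a simple selection rule. This is a minimal illustration, not DISH’s actual method: the zone names, the measured latencies and the use of a 10 ms ceiling (taken from the sub-10 ms mid-haul figure cited later in this post) are all assumptions.

```python
from typing import Optional

# Assumed mid-haul budget in milliseconds; the post cites sub-10 ms mid-haul.
MIDHAUL_BUDGET_MS = 10.0


def pick_local_zone(latencies_ms: dict[str, float],
                    budget_ms: float = MIDHAUL_BUDGET_MS) -> Optional[str]:
    """Return the lowest-latency Local Zone under the budget, or None."""
    within = {zone: ms for zone, ms in latencies_ms.items() if ms < budget_ms}
    if not within:
        return None
    return min(within, key=within.get)


# Hypothetical DU-to-Local-Zone latency measurements for one cell site.
measured = {"lz-a": 4.2, "lz-b": 7.9, "lz-c": 12.5}
chosen = pick_local_zone(measured)
```

A cell site with no candidate under the budget returns `None`, which a planner could treat as a site that needs a closer Local Zone or a different transport path.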

5G Core Network Connectivity

In the AWS network, each Region hosts one NDC and three RDCs. NDC functions communicate to each other through the Transit Gateway, where each VPC has an attachment to the specific regional Transit Gateway. EC2 and native AWS networking is referred to as “Underlay Network” in this network architecture. Provisioning of Transit Gateway and required attachments are automated using CI/CD pipelines with AWS APIs. Transit Gateway routing tables are utilized to maintain isolation of traffic between functions.
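As a rough model of how Transit Gateway route tables can isolate traffic: each VPC attachment is associated with one route table, and it can only reach the attachments whose routes appear in that table. The sketch below captures that idea with plain dictionaries. The table and VPC names are hypothetical and do not reflect DISH’s actual layout.

```python
# Routes present in each (hypothetical) Transit Gateway route table,
# expressed as the set of VPC attachments reachable through it.
ROUTE_TABLES = {
    "rt-core": {"vpc-ndc", "vpc-rdc-1"},
    "rt-edge": {"vpc-bedc-1", "vpc-bedc-2"},
}

# Each attachment is associated with exactly one route table.
ASSOCIATIONS = {
    "vpc-ndc": "rt-core",
    "vpc-rdc-1": "rt-core",
    "vpc-bedc-1": "rt-edge",
    "vpc-bedc-2": "rt-edge",
}


def can_reach(src: str, dst: str) -> bool:
    """True if the route table associated with src holds a route to dst."""
    table = ASSOCIATIONS.get(src)
    return table is not None and dst in ROUTE_TABLES[table]
```

In this toy layout, `can_reach("vpc-ndc", "vpc-rdc-1")` holds while `can_reach("vpc-ndc", "vpc-bedc-1")` does not, which is the kind of separation that route tables make possible.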

Some of the 5G core network functions require support for advanced routing capabilities inside a VPC and across VPCs (e.g., UPF, SMF and ePDG). These functions rely on routing protocols such as BGP for route exchange and fast failover (both stateful and stateless). To support these requirements, virtual routers (vRTRs) are deployed on EC2 to provide connectivity within and across VPCs, as well as back to the on-prem network.

Traffic from vRTRs is encapsulated using Generic Routing Encapsulation (GRE) tunnels, creating an “Overlay Network.” This leverages the Underlay Network for end-point reachability. The Overlay Network uses the Intermediate System to Intermediate System (IS-IS) routing protocol in conjunction with Segment Routing Multi-Protocol Label Switching (SR-MPLS) to distribute routing information and establish network reachability between the vRTRs. Multi-Protocol Border Gateway Protocol (MP-BGP) over GRE is used to provide reachability from on-prem to the AWS Overlay Network and reachability between different Regions in AWS. The combined solution gives DISH the ability to honor requirements such as traffic isolation and to efficiently route traffic between on-prem, AWS and third parties (e.g., voice aggregators and regulatory entities).
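To make the encapsulation concrete, the sketch below builds the bytes a vRTR might place in front of an inner packet: a base GRE header followed by one MPLS label stack entry. It assumes MPLS is carried directly in GRE (protocol type 0x8847) with a single label; the label value and payload are illustrative, and the outer IP header supplied by the Underlay Network is omitted.

```python
import struct

GRE_PROTO_MPLS_UNICAST = 0x8847  # EtherType for MPLS unicast


def gre_header(protocol_type: int) -> bytes:
    """Base 4-byte GRE header: no checksum, key or sequence; version 0."""
    flags_and_version = 0
    return struct.pack("!HH", flags_and_version, protocol_type)


def mpls_label_entry(label: int, tc: int = 0,
                     bottom: bool = True, ttl: int = 64) -> bytes:
    """32-bit MPLS label stack entry: label(20) | TC(3) | S(1) | TTL(8)."""
    if not 0 <= label < 2 ** 20:
        raise ValueError("label must fit in 20 bits")
    word = (label << 12) | ((tc & 0x7) << 9) | (int(bottom) << 8) | (ttl & 0xFF)
    return struct.pack("!I", word)


def encapsulate(payload: bytes, label: int) -> bytes:
    """Prefix the payload with a GRE header and one MPLS label."""
    return gre_header(GRE_PROTO_MPLS_UNICAST) + mpls_label_entry(label) + payload


# Hypothetical segment-routing label and placeholder inner packet.
packet = encapsulate(b"inner-ip-packet", label=16001)
```

The result adds 8 bytes of overlay headers per packet, which is worth keeping in mind when sizing the MTU on the tunnel path.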

AWS Direct Connect for DISH’s RAN Mid-Haul

AWS Direct Connect is leveraged to provide connectivity between DISH’s RAN network and the AWS Cloud. Each Local Zone is connected over 2 x 100G Direct Connect links for redundancy. Direct Connect in combination with Local Zones provides sub-10 ms mid-haul connectivity between DISH’s on-prem RAN and the BEDC. End-to-end SR-MPLS provides connectivity from cell sites to the Local Zone and AWS Region via the Overlay Network using the vRTRs. Through this, DISH can extend multiple Virtual Routing and Forwarding (VRF) instances from the RAN to the AWS Cloud.

AWS Local Zone and Internet Peering

Internet access is provided by AWS within the Local Zone. This “hot potato” routing approach is the most efficient way of handling traffic, rather than backhauling traffic to the region, a centralized location or incurring the cost of maintaining a dedicated internet circuit. It improves subscriber experience and provides low latency internet. This architecture also reduces the failure domain by distributing internet among multiple Local Zones.

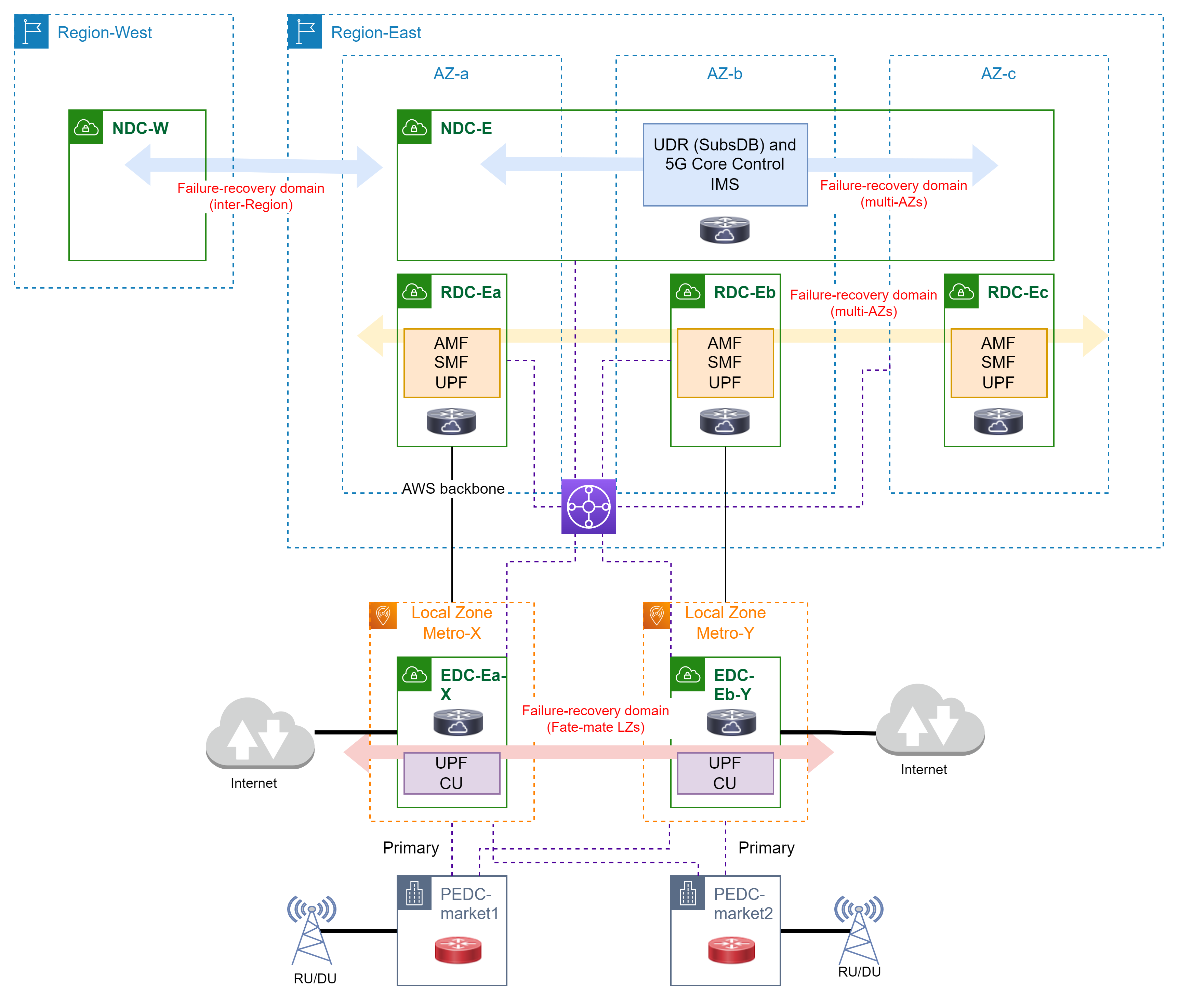

Figure 3: Network Resilience and Failover Scenarios


Network Resiliency

In telco-grade networks, resiliency is at the heart of design. It’s vital to maintain the targeted service-level agreements (SLAs), comply with regulatory requirements and support seamless failover of services. While redundancy and resiliency are addressed at various layers of the 5G stack, we will focus here on transport availability in failure scenarios. High availability and geo-redundancy are NF dependent, while some NFs are required to maintain state.

- NDC

- High Availability: High availability is achieved by deploying two redundant NFs in two separate Availability Zones within a single VPC. A failure within an AZ can be recovered within the Region without the need to route traffic to other Regions. The in-Region networking uses the underlay and overlay constructs, which enable on-prem traffic to seamlessly flow to the standby NF in the secondary AZ if the active NF becomes unavailable.

- Geo-Redundancy: Geo-redundancy is achieved by deploying two redundant NFs in two separate Availability Zones in more than one Region. This is done by interconnecting all VPCs via inter-Region Transit Gateway and leveraging vRTRs for overlay networking. The overlay network is built as a full mesh, enabling service continuity using the NFs deployed across NDCs in other Regions during outage scenarios (e.g., markets, BEDCs and RDCs in us-east-2 can continue to function using the NDC in us-east-1).

- RDC

- High availability and geo-redundancy are achieved by NF failover between VPCs (in multiple Availability Zones) within one Region. These RDCs are interconnected via Transit Gateway with the vRTR-based overlay network. This provides on-prem and BEDC reachability to the NFs deployed in each RDC, with route policies in place to ensure traffic flows to the backup RDCs only if the primary RDC becomes unreachable.

- PEDC

- DISH’s RAN network is connected, through the PEDC, to two different Direct Connect locations for reachability into the Region and Local Zone. This allows DU traffic to be rerouted from an active BEDC to a backup BEDC in the event a Local Zone fails (Fig 3).
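The "backup only if the primary is unreachable" behavior described in the scenarios above can be sketched as a preference-based route selection. This is a simplified model under stated assumptions: the `preference` field is a stand-in for whatever policy attribute the vRTRs actually use (it behaves like BGP local preference), and the RDC names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    next_hop: str     # hypothetical RDC or BEDC name
    preference: int   # higher wins, similar in spirit to BGP local preference
    reachable: bool   # result of a liveness check on the path


def best_route(routes: list[Route]) -> Optional[Route]:
    """Pick the reachable route with the highest preference, if any."""
    candidates = [r for r in routes if r.reachable]
    if not candidates:
        return None
    return max(candidates, key=lambda r: r.preference)


# Steady state: the primary RDC is reachable and preferred.
steady = [Route("rdc-az1", 200, True), Route("rdc-az2", 100, True)]
# Failure: the primary becomes unreachable, so traffic shifts to the backup.
failed = [Route("rdc-az1", 200, False), Route("rdc-az2", 100, True)]
```

With these inputs, `best_route(steady)` selects `rdc-az1` and `best_route(failed)` selects `rdc-az2`, so the backup carries traffic only while the primary is down.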

Deployment Automation: Infrastructure as Code

For network automation as well as scalability, the AWS and DISH architecture teams selected infrastructure as code (IaC). It can be tempting to create resources manually in the short term, but using infrastructure as code:

- Enables full auditing capabilities of infrastructure deployment and changes.

- Provides the ability to deploy a network infrastructure rapidly and at scale.

- Simplifies operational complexity by using code and templates as well as reduces the risk of misconfiguration.

All infrastructure components, from VPCs and subnets to Transit Gateways (TGWs), are deployed using AWS Cloud Development Kit (AWS CDK) and AWS CloudFormation templates. Both AWS CDK and CloudFormation use parameterization and embedded code (through Lambda) to automate deployments to various environments without the need to hardcode dynamic configuration information within the template.
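A minimal sketch of what parameterization looks like in practice: the template below declares a VPC whose CIDR block and environment tag come from CloudFormation parameters, so one template serves every environment. The resource name, environment names and CIDR ranges are illustrative assumptions, and the template is built with the Python standard library only rather than with AWS CDK.

```python
import json


def build_vpc_template() -> dict:
    """Return a CloudFormation template with no hardcoded environment values."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative xDC VPC; values come from parameters.",
        "Parameters": {
            "EnvName": {"Type": "String",
                        "AllowedValues": ["dev", "test", "prod"]},
            "VpcCidr": {"Type": "String"},
        },
        "Resources": {
            "XdcVpc": {
                "Type": "AWS::EC2::VPC",
                "Properties": {
                    "CidrBlock": {"Ref": "VpcCidr"},
                    "Tags": [{"Key": "Environment",
                              "Value": {"Ref": "EnvName"}}],
                },
            }
        },
    }


# Hypothetical per-environment values kept outside the template.
ENVIRONMENTS = {"dev": "10.10.0.0/16", "prod": "10.20.0.0/16"}


def parameters_for(env: str) -> list[dict]:
    """Build the parameter list supplied when creating a stack for env."""
    return [
        {"ParameterKey": "EnvName", "ParameterValue": env},
        {"ParameterKey": "VpcCidr", "ParameterValue": ENVIRONMENTS[env]},
    ]


template_json = json.dumps(build_vpc_template(), indent=2)
```

The same `template_json` can then be deployed once per environment, with only the output of `parameters_for("dev")` or `parameters_for("prod")` changing between stacks.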

AWS has pioneered the development of new CI/CD tools to help a broad spectrum of industries develop and roll out software changes rapidly while maintaining system stability and security. These tools include a set of DevOps (software development and operations) services, such as AWS CodeStar, AWS CodeCommit, AWS CodePipeline, AWS CodeBuild and AWS CodeDeploy. AWS has also been evangelizing the idea of IaC using AWS CDK, AWS CloudFormation and API-based third-party tools (e.g., Terraform). Using these tools, NF deployment processes can be stored in AWS as source code, and the same source code can be maintained in the CI/CD pipeline for continuous delivery.

The DISH and AWS teams worked with independent software vendors (ISVs) to deploy CNFs following cloud-native principles, with full CI/CD, observability and configuration through cloud-native tools, like Helm and ConfigMaps. This approach, combined with the resiliency components discussed earlier, maximizes 5G service availability in outage scenarios at the networking domain or when NFs are impacted by failures.

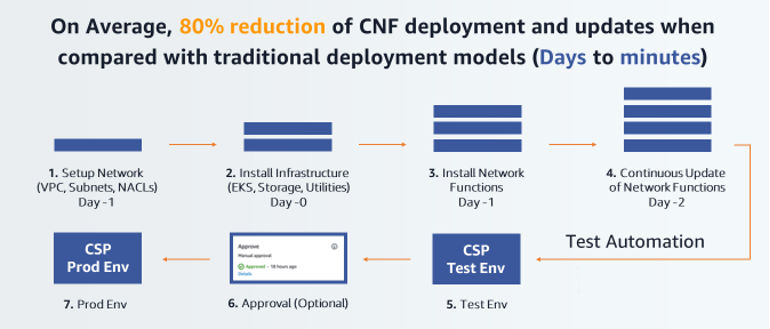

The CI/CD process, developed in partnership with DISH and ISVs, includes the following steps:

- Network setup – AWS CDK and CloudFormation initiate the creation of the network prerequisites

- Networking stack (VPC, subnets, NAT gateway, route table and internet gateway)

- Infrastructure deployment – AWS CDK and CloudFormation initiate the creation of the following resource stacks:

- Compute stack (EKS cluster creation, EKS worker nodes, Lambda)

- Storage stack (S3 buckets, EBS volumes and EFS)

- Monitoring stack (Amazon CloudWatch, Amazon OpenSearch Service)

- Security stack (IAM roles, IAM policies, EC2 security groups, VPC NACLs)

- CNF deployment – In this stage, CNFs are deployed onto EKS clusters using kubectl and Helm charts. This stage also deploys any specific applications or tools needed by the CNFs to work efficiently (e.g., Prometheus, Fluentd). CNFs can be deployed via either Lambda functions or AWS CodeBuild, which can be part of the AWS CodePipeline stages.

- Continuous updates and deployment – This is a sequence of steps carried out iteratively to deploy container and configuration changes that result in upgrades. Similar to the CNF deployment case, this is automated using AWS services, triggered from AWS CodeCommit, Amazon Elastic Container Registry or third-party source systems such as a GitLab webhook.

Figure 4: AWS CI/CD Pipeline Flow

The CI/CD pipeline is built using AWS CodePipeline, a continuous delivery service that models, visualizes and automates the steps required to release software. By defining stages in a pipeline, you can retrieve code from a source code repository, build that source code into a releasable artifact, test the artifact and deploy it to production. Only code that successfully passes through all stages will be deployed. In addition, other requirements can be added to the pipeline, such as manual approvals, to help ensure that only approved changes are deployed to production.
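The gating behavior described above, where a change is released only if every stage passes, can be modeled in a few lines. This sketch is not AWS CodePipeline itself: the stage names and the lambda checks are placeholders, and the manual approval is represented as just another stage that returns a boolean.

```python
from typing import Callable

# A stage is a name plus a check that returns True on success.
Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure.

    Returns (released, names_of_stages_that_passed).
    """
    passed: list[str] = []
    for name, check in stages:
        if not check():
            return False, passed
        passed.append(name)
    return True, passed


# Hypothetical run in which the manual approval is declined.
stages: list[Stage] = [
    ("source", lambda: True),
    ("build", lambda: True),
    ("test", lambda: True),
    ("manual-approval", lambda: False),
    ("deploy", lambda: True),
]
released, passed = run_pipeline(stages)
```

Because the approval stage fails, the deploy stage never runs, and `passed` records how far the change progressed.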

Figure 5: DISH CI/CD Pipeline Architecture

By leveraging infrastructure as code, DISH can automate the creation (and decommissioning) of environments. This increases the pace of innovation, reduces human error and ensures compliance with DISH’s security posture. The automated CI/CD pipeline checkpoints and constant monitoring tools like Amazon GuardDuty (threat protection) and Amazon Macie (sensitive data identification and protection) are included in the infrastructure as code for security checks.

CI/CD Security

Security is a critical element in the network design and is introduced to various parts of the solution. Below is a list of security steps that DISH and AWS CI/CD processes would take into account while deploying an application.

- Source: The ECR repository allocated to the ISV is configured with the “Scan on Push” flag enabled, so that any uploaded Docker image is immediately subjected to a security scan. Any known common vulnerabilities and exposures (CVEs) are flagged with notifications. In addition, for the charts put into the AWS CodeCommit repository, ISVs are requested to store any passwords in AWS Secrets Manager rather than in plain text.

- Artifacts integrity: The artifacts used across the pipeline are encrypted, both at rest (using AWS managed keys) and in transit (using SSL/TLS).

- IAM users and roles: User and resource permissions are granted based on the principle of least privilege. Cross-IAM role trust relationships are configured and used for operating across resources in different services, for example, AWS CodeBuild needing permission to run commands on an EKS cluster.

- Audit: The auditing capability of AWS CloudTrail service is used to maintain an audit trail in the DISH environments. It tracks each and every API call across services and user operations and will allow an evaluation of any past events.

- Image vulnerability scanning: CNF images that are uploaded to Amazon ECR are automatically scanned for security vulnerabilities. A report of the scan findings is available in the AWS console and can also be retrieved via API. The findings can also be sent to the accountable parties for corrective action, including replacement of the CNF image.

Security checks are kicked off at various stages of the pipeline to ensure the newly uploaded image is secure and passes the desired compliance checks, and a notification can be sent to DISH for approval. The container registry scans for any open CVEs. The configuration is checked for leaks of sensitive information (known PII patterns), and the test stage triggers compliance check rules (for example, unexpected open TCP/UDP ports, DoS vulnerabilities) and eventually verifies backward and forward compatibility for graceful upgrade and rollback safety. Apart from the application, it is critical to secure the pipeline itself by ensuring that artifacts are encrypted across stages, both at rest and in transit.
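A few of these checks can be sketched as small, independent gate functions. Everything here is an assumption made for illustration: the policy of blocking on CRITICAL and HIGH findings, the regular expression used to spot plain-text secrets, the shape of the findings list and the sample data are not taken from DISH’s pipeline.

```python
import re

# Assumed policy: these scan severities block promotion of an image.
BLOCKING_SEVERITIES = {"CRITICAL", "HIGH"}

# Simple heuristic for secrets written directly into a config file.
PLAINTEXT_SECRET = re.compile(r"(?i)\b(password|secret|token)\s*[:=]\s*\S+")


def blocking_findings(findings: list[dict]) -> list[dict]:
    """Return the scan findings severe enough to stop the pipeline."""
    return [f for f in findings if f["severity"] in BLOCKING_SEVERITIES]


def plaintext_secret_lines(config_text: str) -> list[int]:
    """Return 1-based line numbers that appear to hold a plain-text secret."""
    return [number
            for number, line in enumerate(config_text.splitlines(), start=1)
            if PLAINTEXT_SECRET.search(line)]


def unexpected_ports(open_ports: set[int], allowed_ports: set[int]) -> list[int]:
    """Return open ports that are not on the allow list."""
    return sorted(open_ports - allowed_ports)


# Hypothetical inputs for one CNF release candidate.
findings = [{"name": "EXAMPLE-1", "severity": "LOW"},
            {"name": "EXAMPLE-2", "severity": "CRITICAL"}]
config = "\n".join(["replicas: 3",
                    "password: hunter2",
                    "passwordSecretRef: amf-db"])

blocked = blocking_findings(findings)
secret_lines = plaintext_secret_lines(config)
extra_ports = unexpected_ports({22, 8080, 9090}, {8080, 9090})
```

A release would proceed only if all three results are empty; here each check flags one problem, so the candidate would be held for corrective action.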

Beginning of 5G

In this post, we presented a cloud-native architecture for deploying 5G networks on the AWS Cloud. By utilizing the AWS Cloud, DISH was able to architect, design, build and deliver a complete cloud-native 5G network with full operations and network deployment automation, including adoption of Open Radio Access Network (O-RAN) and other open community projects. This platform allows DISH to create innovative new services and react faster to customer demands. It also reduces the heavy-lifting activities that put additional workloads on the architecture, engineering and operations teams.

- Deploying 5G at Cloud Scale: By utilizing the AWS Cloud platform, DISH was able to architect, design, build, and deliver the first 5G data and voice call using the 5G core platform deployed in the public cloud in a short period of time.

- Tech Stack Innovation: By utilizing a common cloud architecture across core and edge, the DISH team was able to promote the adoption of CNFs and cloud-native architecture across a diverse set of 5G partners and software providers.

- Edge Optimization: By utilizing the right mix of AWS resources in the regions and in Local Zones, DISH was able to address latency-sensitive requirements of 5G applications by deployment in AWS Local Zones while maintaining central deployment of the rest of 5G core applications in AWS Regions.

DISH is an active member of O-RAN Alliance as well as other open community projects. To learn more about DISH’s digital innovation opportunities, visit https://www.dishwireless.com/

To learn more about telecom on AWS, visit https://aws.amazon.com/blogs/industries/category/industries/telecommunications/