Identity verification using Amazon Rekognition

In-person user identity verification is slow to scale, costly, and high friction for users. Machine learning (ML) powered facial recognition technology can enable online user identity verification. Amazon Rekognition offers pre-trained facial recognition capabilities that you can quickly add to your user onboarding and authentication workflows to verify opted-in users’ identities online. No ML expertise is required. With Amazon Rekognition, you can onboard and authenticate users in seconds while detecting fraudulent or duplicate accounts. As a result, you can grow users faster, reduce fraud, and lower user verification costs.

In this post, we describe a typical identity verification workflow and show how to build an identity verification solution using various Amazon Rekognition APIs. We provide a complete sample implementation in our GitHub repository.

User registration workflow

The following figure shows a sample workflow of a new user registration. Typical steps in this process are:

- User captures selfie image and the image of a government-issued identity document.

- Quality check of the selfie image and optional liveness detection of the user face.

- Comparison of the selfie image with the identity document face image.

- Check of the selfie against a database of existing user faces.

You can customize the flow according to the business process. It often contains some or all of the steps presented in the preceding diagram. You can choose to run all the steps synchronously (wait for one step to complete before moving on to the next step). Alternatively, you can run some of the steps highlighted in orange asynchronously (don’t wait for that step to complete) to speed up the user registration process and improve the customer experience. If any step fails, you must roll back the user registration.
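The synchronous variant with rollback can be sketched as follows. This is a minimal illustration, not the code from the sample repository: the step names and the `(name, action, undo)` tuple shape are assumptions made for the example.

```python
from typing import Callable, List, Tuple

# Hypothetical step shape: (name, action returning True on success, undo callback).
Step = Tuple[str, Callable[[], bool], Callable[[], None]]


def run_registration(steps: List[Step]) -> Tuple[bool, str]:
    """Run steps in order; on the first failure, undo completed steps in reverse.

    Returns (True, "") on success, or (False, name_of_failed_step).
    """
    completed: List[Step] = []
    for step in steps:
        name, action, _undo = step
        if not action():
            # Roll back everything that already succeeded, newest first.
            for _name, _action, undo in reversed(completed):
                undo()
            return False, name
        completed.append(step)
    return True, ""
```

Each `undo` callback would reverse its own side effect, for example deleting an uploaded image or removing an indexed face.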

In addition to new user registration, another common flow is an existing or returning user login. In this flow, a check of the user face (selfie) is performed against a previously registered face. Typical steps in this process include user face capture (selfie), check of the selfie image quality, and search and compare of the selfie against the faces database. The following diagram shows a possible flow.

You can customize the steps of the process according to your business needs, and choose to include or exclude the liveness detection.

Solution overview

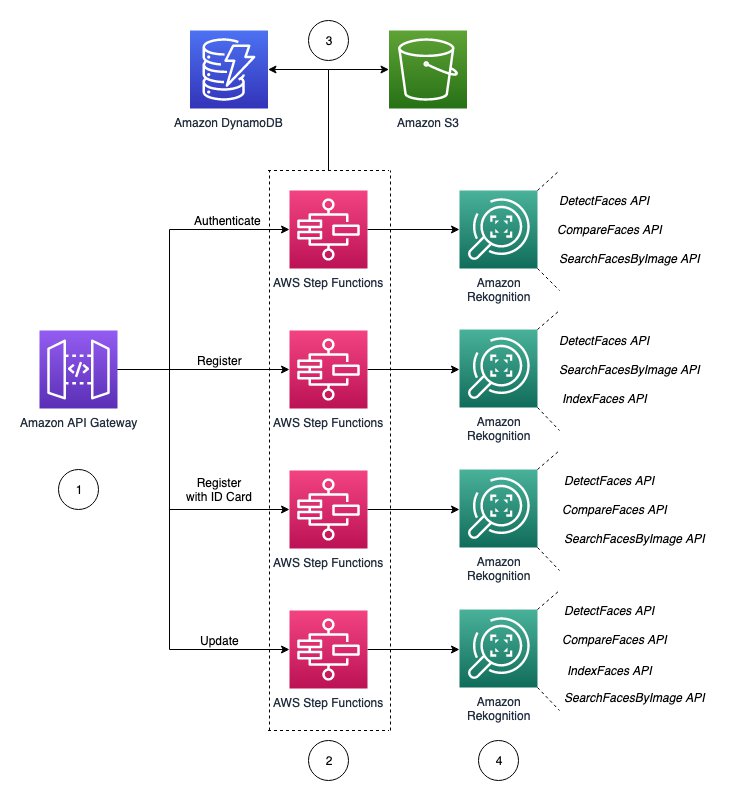

The following reference architecture shows how you can use Amazon Rekognition, along with other AWS services, to implement identity verification.

The architecture includes the following components:

- Applications invoke Amazon API Gateway to route requests to the correct AWS Lambda function depending on the user flow. There are four major actions in this solution: authenticate, register, register with ID card, and update.

- API Gateway uses a service integration to run the AWS Step Functions express state machine corresponding to the specific path called from API Gateway. Within each step, Lambda functions are responsible for triggering the correct set of calls to and from Amazon DynamoDB and Amazon Simple Storage Service (Amazon S3), along with the relevant Amazon Rekognition APIs.

- DynamoDB holds face IDs (face-id), S3 path URIs, and unique IDs (for example, employee ID number) for each face-id. Amazon S3 stores all the face images.

- The final major component of the solution is Amazon Rekognition. Each flow (authenticate, register, register with ID card, and update) calls different Amazon Rekognition APIs depending on the task.
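As a rough illustration of how a face ID, an S3 path, and a unique user ID might sit together in one DynamoDB item, consider the sketch below. The attribute names (`user_id`, `face_id`, `image_uri`) are hypothetical and may not match the table schema in the sample repository. The `table` argument is assumed to be a boto3 DynamoDB `Table` resource created by the caller.

```python
from typing import Dict


def build_face_item(user_id: str, face_id: str, bucket: str, key: str) -> Dict[str, str]:
    """Build one item that ties a user to a face ID and the S3 image location."""
    if not user_id or not face_id:
        raise ValueError("user_id and face_id are required")
    return {
        "user_id": user_id,                   # hypothetical key, e.g. an employee ID
        "face_id": face_id,                   # FaceId returned by Amazon Rekognition
        "image_uri": f"s3://{bucket}/{key}",  # where the original image is stored
    }


def save_face_item(table, item: Dict[str, str]) -> None:
    """Persist the item; `table` is assumed to be a boto3 DynamoDB Table resource."""
    table.put_item(Item=item)
```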

Before we deploy the solution, it’s important to know the following concepts and API descriptions:

- Collections – Amazon Rekognition stores information about detected faces in server-side containers known as collections. You can use the facial information that’s stored in a collection to search for known faces in images, stored videos, and streaming videos. You can use collections in a variety of scenarios. For example, you might create a face collection to store scanned badge images by using the IndexFaces operation. When an employee enters the building, an image of the employee’s face is captured and sent to the SearchFacesByImage operation. If the face match produces a sufficiently high similarity score (say 99%), you can authenticate the employee.

- DetectFaces API – This API detects faces within an image provided as input and returns information about faces. In a user registration workflow, this operation may help you screen images before moving to the next step. For example, you can check if a photo contains a face, if the person identified is in the right orientation, and if they’re not wearing any face blocker such as sunglasses or a cap.

- IndexFaces API – This API detects faces in the input image and adds them to the specified collection. This operation is used to add a screened image to a collection for future queries.

- SearchFacesByImage API – For a given input image, the API first detects the largest face in the image, and then searches the specified collection for matching faces. The operation compares the features of the input face with face features in the specified collection.

- CompareFaces API – This API compares a face in the source input image with each of the 100 largest faces detected in the target input image. If the source image contains multiple faces, the service detects the largest face and compares it with each face detected in the target image. For our use case, we expect both the source and target image to contain a single face.

- DeleteFaces API – This API deletes faces from a collection. You specify a collection ID and an array of face IDs to remove.
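The badge example from the Collections description could look roughly like the following with the AWS SDK for Python. This is a sketch under stated assumptions: `client` is a Rekognition client that the caller creates (for example with `boto3.client("rekognition")`), the collection already exists, and the function names are made up for illustration. The 99% threshold comes from the example above.

```python
from typing import Iterable, List, Optional


def enroll_face(client, collection_id: str, image_bytes: bytes, external_id: str) -> Optional[str]:
    """Index the face in the image (IndexFaces) and return its FaceId, if any."""
    response = client.index_faces(
        CollectionId=collection_id,
        Image={"Bytes": image_bytes},
        ExternalImageId=external_id,  # caller-chosen label, e.g. an employee ID
        MaxFaces=1,                   # only index the largest face
        QualityFilter="AUTO",
    )
    records = response.get("FaceRecords", [])
    return records[0]["Face"]["FaceId"] if records else None


def find_match(client, collection_id: str, image_bytes: bytes, threshold: float = 99.0) -> Optional[dict]:
    """Search the collection (SearchFacesByImage) and return the best matching face."""
    response = client.search_faces_by_image(
        CollectionId=collection_id,
        Image={"Bytes": image_bytes},
        MaxFaces=1,
        FaceMatchThreshold=threshold,
    )
    matches = response.get("FaceMatches", [])
    return matches[0]["Face"] if matches else None


def remove_faces(client, collection_id: str, face_ids: Iterable[str]) -> List[str]:
    """Delete faces from the collection (DeleteFaces) and return the deleted IDs."""
    response = client.delete_faces(CollectionId=collection_id, FaceIds=list(face_ids))
    return response.get("DeletedFaces", [])
```

Passing the client in as an argument keeps the functions easy to exercise against a stub before pointing them at a real account.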

Prerequisites

Before you get started, complete the following prerequisites:

- Create an AWS account.

- Clone the sample repo on your local machine:

We use the test client in this repository to test the various workflows.

- Install Python 3.6+ on your local machine.

Deploy the solution

Choose the appropriate AWS CloudFormation stack to provision the solution into your AWS account in your preferred Region:

As we discussed earlier, this solution uses API Gateway integrated with Step Functions and Amazon Rekognition APIs to run the identity verification workflows. To test the solution, follow the steps in the code repository to use the provided test client.

The following sections describe the various workflows implemented via Step Functions.

New user registration

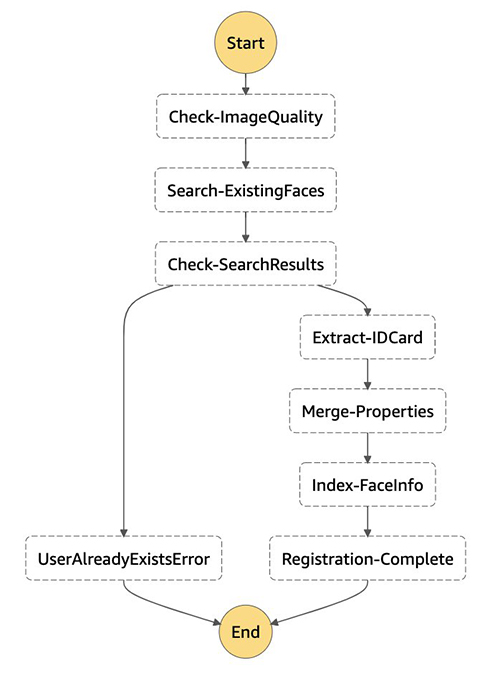

The following image illustrates the Step Functions definition for new user registration. The steps are defined in the register_user.py file.

Three functions are called in this workflow: detect-faces, search-faces, and index-faces. The detect-faces function calls the Amazon Rekognition DetectFaces API to determine if a face is detected in an image and is usable. Some of the quality checks include determining that only one face is present in the image, ensuring the face isn’t obscured by sunglasses or a hat, and confirming that the face isn’t rotated by using the pose dimension. If the image passes the quality check, the search-faces function searches for an existing face match in the Amazon Rekognition collections by confirming the FaceMatchThreshold confidence score meets your threshold objective. For more information, refer to the section on using similarity thresholds to match faces. If the face image doesn’t exist in the collections, the index-faces function is called to index the face into the collection. The face image metadata is stored in the DynamoDB table and the face images are stored in an S3 bucket.
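The detect, search, and index sequence described above can be sketched in a single function. This is not the code in register_user.py: the function names, the 20-degree pose limit, and the 95% match threshold are assumptions chosen for the example, and `client` is a Rekognition client supplied by the caller. The sketch checks only face count, sunglasses, and pose.

```python
from typing import Dict


def is_usable_face(detect_response: dict, max_angle: float = 20.0) -> bool:
    """Screen a DetectFaces response: exactly one face, no sunglasses, upright pose."""
    faces = detect_response.get("FaceDetails", [])
    if len(faces) != 1:
        return False
    face = faces[0]
    if face.get("Sunglasses", {}).get("Value", False):
        return False
    pose = face.get("Pose", {})
    # Reject faces rotated beyond the (assumed) limit on any axis.
    return all(abs(pose.get(axis, 0.0)) <= max_angle for axis in ("Roll", "Yaw", "Pitch"))


def register_user(client, collection_id: str, user_id: str, image_bytes: bytes,
                  threshold: float = 95.0) -> Dict[str, str]:
    """Quality check, duplicate search, then index. Returns a small status dict."""
    image = {"Bytes": image_bytes}

    detected = client.detect_faces(Image=image, Attributes=["ALL"])
    if not is_usable_face(detected):
        return {"status": "rejected", "reason": "quality"}

    found = client.search_faces_by_image(
        CollectionId=collection_id, Image=image, MaxFaces=1, FaceMatchThreshold=threshold
    )
    if found.get("FaceMatches"):
        return {"status": "rejected", "reason": "duplicate"}

    indexed = client.index_faces(
        CollectionId=collection_id, Image=image, ExternalImageId=user_id, MaxFaces=1
    )
    records = indexed.get("FaceRecords", [])
    if not records:
        return {"status": "rejected", "reason": "not_indexed"}
    return {"status": "registered", "face_id": records[0]["Face"]["FaceId"]}
```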

To register a new user, run the app.py script (test-client) by running the following code:

If the new user registration succeeds, the face image attribute information is added in DynamoDB.

New user registration with ID card

The steps to register a new user with an ID card are similar to the steps for registering a new user. The following image illustrates the steps, which are defined in the register_idcard.py file.

The same three functions that we used to register a user (detect-faces, search-faces, and index-faces) are called in this workflow. First, the customer captures an image of their ID and a live image of their face. The face image is checked to confirm it meets our defined quality standards using the DetectFaces API. If the image meets the quality standards, the live face image is compared to the face in the ID to determine if they’re a match. If the images don’t match, the user receives an error and the process ends. If the images match, we check if the face already exists in the Amazon Rekognition collections using the SearchFacesByImage API. The search results are compared to the user’s current face image. If the user already exists, the user isn’t registered. If the user doesn’t exist in the collections, the relevant properties are extracted from the ID card. You can extract key-value pairs from identity documents using the newly launched Amazon Textract AnalyzeID API. The extracted properties from the ID card are merged and the user’s face is indexed in the DynamoDB table. After the image is indexed, the new user ID registration process is complete.
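The two steps that are specific to this workflow, comparing the selfie to the ID photo and extracting properties from the ID, might be sketched as follows. The function names and the confidence and similarity thresholds are assumptions for illustration, not values from register_idcard.py. `rekognition` and `textract` are clients created by the caller, for example with `boto3.client("rekognition")` and `boto3.client("textract")`.

```python
from typing import Dict


def selfie_matches_id(rekognition, selfie_bytes: bytes, id_card_bytes: bytes,
                      threshold: float = 95.0) -> bool:
    """Compare the selfie (source) with the face on the ID card (target) via CompareFaces."""
    response = rekognition.compare_faces(
        SourceImage={"Bytes": selfie_bytes},
        TargetImage={"Bytes": id_card_bytes},
        SimilarityThreshold=threshold,  # matches below this similarity are not returned
    )
    return len(response.get("FaceMatches", [])) > 0


def extract_id_fields(textract, id_card_bytes: bytes, min_confidence: float = 90.0) -> Dict[str, str]:
    """Call AnalyzeID and flatten the detected fields into a name -> text mapping."""
    response = textract.analyze_id(DocumentPages=[{"Bytes": id_card_bytes}])
    fields: Dict[str, str] = {}
    for document in response.get("IdentityDocuments", []):
        for field in document.get("IdentityDocumentFields", []):
            name = field.get("Type", {}).get("Text")
            value = field.get("ValueDetection", {})
            # Keep only non-empty values detected with enough confidence.
            if name and value.get("Text") and value.get("Confidence", 0.0) >= min_confidence:
                fields[name] = value["Text"]
    return fields
```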

Existing user authentication

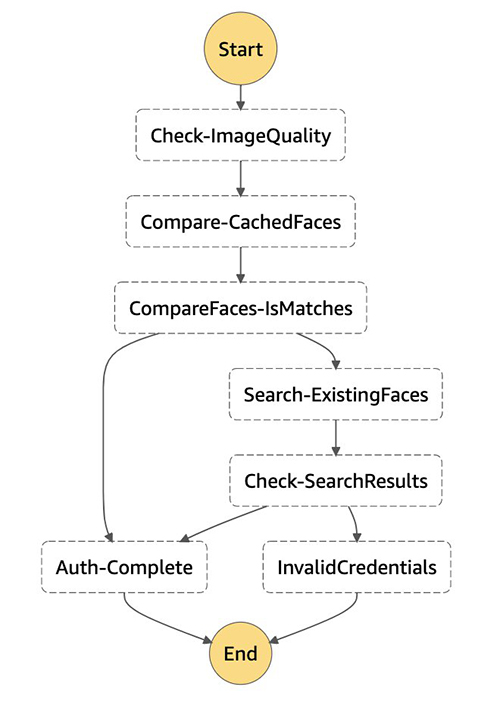

The following image illustrates the workflow for authenticating an existing user. The steps are defined in the auth.py file.

This Step Functions workflow calls three functions: detect-faces, compare-faces, and search-faces. After the detect-faces function verifies that the captured face image is valid, the compare-faces function checks the DynamoDB table for a face image that matches an existing user. If a match is found in DynamoDB, the user authenticates successfully. If a match isn’t found, the search-faces function is called to search for the face image in the collections. The user is verified and the authentication process completes if their face image exists in the collections. Otherwise, the user’s access is denied.
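The compare-then-search fallback could be sketched as follows, assuming the image quality check has already passed. This is an illustration rather than the code in auth.py: the function name and the 95% threshold are assumptions, `client` is a Rekognition client supplied by the caller, and `stored_image` stands for the registered image located through the DynamoDB record, or `None` when no record exists.

```python
from typing import Optional


def authenticate(client, collection_id: str, selfie_bytes: bytes,
                 stored_image: Optional[dict], threshold: float = 95.0) -> bool:
    """Return True if the selfie matches the stored image or any face in the collection.

    `stored_image` uses the Rekognition Image shape, for example
    {"S3Object": {"Bucket": "...", "Name": "..."}}.
    """
    selfie = {"Bytes": selfie_bytes}

    # Step 1: direct comparison against the user's registered image, if one is on record.
    if stored_image is not None:
        compared = client.compare_faces(
            SourceImage=selfie, TargetImage=stored_image, SimilarityThreshold=threshold
        )
        if compared.get("FaceMatches"):
            return True

    # Step 2: fall back to searching the whole collection.
    searched = client.search_faces_by_image(
        CollectionId=collection_id, Image=selfie, MaxFaces=1, FaceMatchThreshold=threshold
    )
    return bool(searched.get("FaceMatches"))
```

A stricter variant might also confirm that the face returned by the search belongs to the user who is trying to log in.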

To test authenticating an existing user, run the app.py script (test-client) by running the following code:

Existing user login with a request for photo update

The following image illustrates the workflow to update an existing user’s photo. The steps are defined in the update.py file.

This workflow calls four functions: detect-faces, compare-faces, search-faces, and index-faces. The steps are similar to the steps in the existing user authentication workflow. After the user captures their face image and the image quality is checked, we check for a matching face image in DynamoDB using the compare-faces function. If a match for the user is found, their user profile is updated, their new face image is indexed by calling the index-faces function, and the update process completes. Alternatively, if a match isn’t found, the search-faces function is called to search for the face image in the Amazon Rekognition collections. If the face image is found in the collection, the user’s profile is updated and their new face image is indexed. The user’s access is denied if their image isn’t found in the collections.
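Once the user has been verified, the final index-and-update step might look like the sketch below. It is not taken from update.py. The function name, the `user_id` key, and the `face_id` and `image_uri` attributes are hypothetical. `client` is a Rekognition client and `table` is a boto3 DynamoDB `Table` resource, both supplied by the caller.

```python
from typing import Optional


def update_user_photo(client, table, collection_id: str, user_id: str,
                      image_bytes: bytes, image_uri: str) -> Optional[str]:
    """Index the new face image and point the user's profile at it.

    Assumes the caller has already verified the user (compare-faces or search-faces).
    Returns the new FaceId, or None if no face could be indexed.
    """
    indexed = client.index_faces(
        CollectionId=collection_id,
        Image={"Bytes": image_bytes},
        ExternalImageId=user_id,
        MaxFaces=1,
    )
    records = indexed.get("FaceRecords", [])
    if not records:
        return None  # leave the existing profile untouched

    face_id = records[0]["Face"]["FaceId"]
    table.update_item(
        Key={"user_id": user_id},  # hypothetical partition key
        UpdateExpression="SET face_id = :face_id, image_uri = :image_uri",
        ExpressionAttributeValues={":face_id": face_id, ":image_uri": image_uri},
    )
    return face_id
```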

To update an existing user’s photo, run the app.py script (test-client) by running the following code:

Clean up

To prevent accruing additional charges in your AWS account, delete the resources you provisioned by navigating to the AWS CloudFormation console and deleting the Riv-Prod stack.

Deleting the stack doesn’t delete the S3 bucket you created. This bucket stores all the face images. If you choose to delete the S3 bucket, navigate to the Amazon S3 console, empty the bucket, and then confirm you want to permanently delete it.
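If you prefer to script the cleanup instead of using the console, a sketch with the AWS SDK for Python might look like the following. The function name and the `delete_bucket` flag are assumptions for the example. `cloudformation` is a client from `boto3.client("cloudformation")` and `bucket` is a `boto3.resource("s3").Bucket(...)` object for the image bucket, both created by the caller.

```python
def clean_up(cloudformation, bucket, stack_name: str = "Riv-Prod",
             delete_bucket: bool = False) -> None:
    """Delete the CloudFormation stack and, optionally, the face image bucket."""
    cloudformation.delete_stack(StackName=stack_name)
    # Stack deletion is asynchronous, so wait until it has finished.
    cloudformation.get_waiter("stack_delete_complete").wait(StackName=stack_name)

    if delete_bucket:
        # A bucket must be empty before it can be deleted.
        # If versioning is enabled, object versions would need to be removed as well.
        bucket.objects.all().delete()
        bucket.delete()
```

Keeping `delete_bucket` off by default mirrors the behavior described above, where the face images survive stack deletion unless you remove them explicitly.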

Conclusion

Amazon Rekognition makes it easy to add image analysis to your identity verification applications using proven, highly scalable, deep learning technology that requires no ML expertise to use. Amazon Rekognition provides face detection and comparison capabilities. With a combination of the DetectFaces, CompareFaces, IndexFaces, and SearchFacesByImage APIs, you can implement the common flows around new user registration and existing user logins.

Amazon Rekognition collections provide a method to store information about detected faces in server-side containers. You can then use the facial information stored in a collection to search for known faces in images. When using collections, you don’t need to store original photos after you index faces in the collection. Amazon Rekognition collections don’t persist actual images. Instead, the underlying detection algorithm detects the faces in the input image, extracts facial features into a feature vector for each face, and stores it in the collection.

To start your journey towards identity verification, visit Identity Verification using Amazon Rekognition.

About the Authors

Nate Bachmeier is an AWS Senior Solutions Architect who nomadically explores New York, one cloud integration at a time. He specializes in migrating and modernizing applications. Besides this, Nate is a full-time student and has two kids.

Anthony Pasquariello is an Enterprise Solutions Architect based in New York City. He provides technical consultation to customers during their cloud journey, especially around security best practices. He has an MS and BS in electrical and computer engineering from Boston University. In his free time, he enjoys ramen, writing non-fiction, and philosophy.

Lauren Mullennex is a Solutions Architect based in Denver, CO. She works with customers to help them architect solutions on AWS. In her spare time, she enjoys hiking and cooking Hawaiian cuisine.

Amit Gupta is a Senior AI Services Solutions Architect at AWS. He is passionate about enabling customers with well-architected machine learning solutions at scale.
