AWS Storage Blog

Preserving and archiving television history with an eye toward the future

How Team Coco used AWS and Iron Mountain to durably store, transcode, view, clip, and publish highlights from Late Night with Conan O’Brien’s 2725-episode library to millions of Conan’s fans.

There’s an old English poem that says you capture some good luck as you embark on a profound new endeavor as long as you bring: something old, something new, something borrowed, and something blue.

Most people put this age-old wisdom to use on the momentous day that someone asks for their hand in marriage. We at Team Coco, however, decided to put it to use on a different kind of proposal altogether. Ours came from our boss, the legendary late night television host Conan O’Brien, and his ask was for us to embark on an audacious plan to:

- Digitize 2725 episodes of his NBC show

- Durably store the episodes in a future-proof digital format

- Implement a system to view and catalog every episode

- Give our fans groundbreaking access to their favorite moments, which had been locked away and stored on degrading tapes in a vault for years

Oh, and also… we needed to begin work immediately because the goal was to begin publishing by the 25th anniversary of the first show, which aired in September of 1993, and we don’t like letting our boss down.

At Team Coco we love to say yes to new challenges, but we had never faced a challenge like this once-in-a-lifetime opportunity. It was difficult to find similar projects that could have given us a roadmap for handling this sort of task. The scope and scale of the digitizing, storing, transcoding, and cataloging was impossible for our small team at Team Coco to handle using traditional on-premises means, and our tight production timeline made it harder still. This left only one feasible way for us to build a workflow to accomplish our goals: the AWS Cloud. In this blog post, I discuss how Team Coco used AWS to durably store, transcode, view, clip, and publish highlights from Late Night with Conan O’Brien’s 2725-episode library to millions of Conan’s fans.

Something old and something borrowed: tape vaults, video tape masters, transportation, and digitization

Before our project, the highest quality of clip available was a fan upload

Here’s a simplified overview of the process to digitize the tapes:

- We borrowed 2725 broadcast master tapes from the NBC vaults.

- Iron Mountain, an APN Partner, delicately moved and tracked the tapes as they journeyed from NBC’s tape vault to Iron Mountain’s digitization facility in Los Angeles, CA.

- All 2725 tapes were then housed, inbounded, organized, and digitized at Iron Mountain Entertainment Services (IMES).

- The broadcast video tapes spanned the following video tape formats: Betacam-SP, D-2, D-3, MPEG-IMX, HDCAM, HDCAM-SR.

- D-3 presented the biggest challenges as it was an early composite digital tape format NBC used in the early 1990s for the first 1629 episodes of the show.

- The format is now considered obsolete and there are only a few decks available in the world that can play back the tapes at a sufficiently high quality.

- The video tapes were captured into a computer-friendly format.

- The newly created video files were then staged on Iron Mountain’s on-premises storage platform and readied for delivery to us at Team Coco.

Something new: The AWS Cloud

Our third good luck charm on our audacious project was something new: AWS and the plethora of service offerings available to us. The biggest driver for us when choosing AWS was that even while facing so many unknowns, we knew we would have access to nearly infinite compute and storage resources. We also had our experience running custom-built AWS infrastructure that had been powering TeamCoco.com, our iOS and Android apps, and our in-house Eisenhower product that we have been building since 2012.

Today, Eisenhower operates much of Team Coco’s daily functions serving as a: CMS, asset manager, searchable database, archiving solution, video transcoder, publishing system, and even a collaboration tool for our team. Eisenhower’s customized technology has allowed Team Coco to continuously reimagine how we produce and distribute entertainment as a groundbreaking production company. Despite being a small and agile team, we’ve been able to accomplish great feats thanks to tools like Eisenhower. These tools have enabled us to realize enormous efficiencies by pulling together managed services from the AWS catalog. Throughout this post, we touch on some of the services that were used to make the Conan25 project a reality.

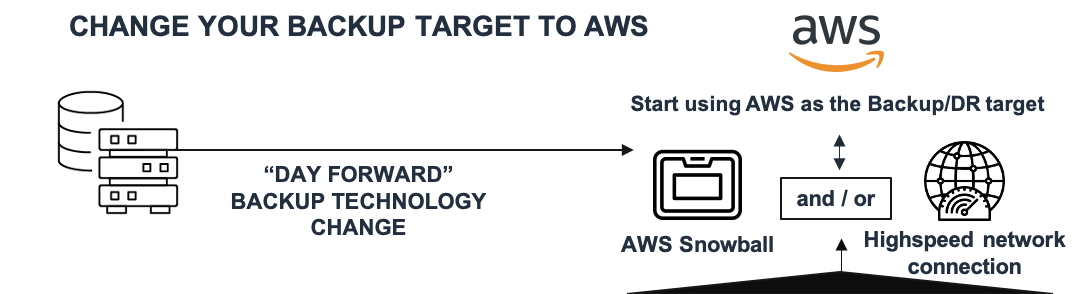

Overview of how we moved our data to Amazon S3:

- For our first delivery, full quality files were copied from the storage server to an AWS Snowball device. The device was then shipped to the nearest AWS data center where the files were copied to our Amazon S3 bucket.

- Later in the process we employed a direct cloud seeding workflow to our S3 bucket using the AWS CLI and a 10-Gb data pipe.

- Full quality files were then sent to AWS Elemental MediaConvert to convert to a lower bitrate mezzanine and proxy format. AWS Elemental enabled us to use industry-trusted video encoding expertise as a fully managed service. This freed us from the need to set up and manage individual video encoding servers and offered all of the industry standard formats we needed.

- Once our initial processing work was finished, we were then able to implement Amazon S3 Lifecycle policies to migrate our larger files to S3 Intelligent-Tiering, Amazon S3 Glacier, or S3 Glacier Deep Archive, helping us realize savings on long-term storage costs. We opted for S3 Intelligent-Tiering for our smaller browser-compatible h.264 files, which enabled us to realize some cost savings. Doing so also freed us from the need for direct management of each file’s storage class no matter how frequently the file is accessed. For higher-quality mezzanine files, the 1–5 minute expedited retrieval option that Amazon S3 Glacier provided struck the perfect balance for our workflow. Designing the workflow such that it could tolerate several minutes of access latency enabled us to reduce our storage costs by up to 80% in certain cases. For our largest video files that are accessed very infrequently, and for our workflows that can tolerate a 12-hour latency, we used S3 Glacier Deep Archive for additional savings.
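To make the storage tiering above a little more concrete, here is a minimal Python sketch of how a lifecycle configuration along those lines could be assembled. It is an illustration, not Team Coco's actual policy: the key prefixes (`proxy/`, `mezzanine/`, `masters/`), the 30-day transition delay, and the helper name `build_lifecycle_configuration` are all assumptions made for this example. Only the mapping of file types to storage classes comes from the description above.

```python
from typing import Any, Dict, List, Tuple

# Storage classes mentioned in this post, as named in S3 lifecycle transitions.
ALLOWED_CLASSES = {"INTELLIGENT_TIERING", "GLACIER", "DEEP_ARCHIVE"}

# Hypothetical layout: (key prefix, target storage class, days after creation).
# The prefixes and the 30-day delay are assumptions for illustration only.
EXAMPLE_TIERS: List[Tuple[str, str, int]] = [
    ("proxy/", "INTELLIGENT_TIERING", 30),  # small browser-compatible files
    ("mezzanine/", "GLACIER", 30),          # minutes of retrieval latency are fine
    ("masters/", "DEEP_ARCHIVE", 30),       # largest files, rarely accessed
]


def build_lifecycle_configuration(
    tiers: List[Tuple[str, str, int]],
) -> Dict[str, Any]:
    """Build a dict shaped like an S3 bucket lifecycle configuration."""
    rules = []
    for prefix, storage_class, days in tiers:
        if storage_class not in ALLOWED_CLASSES:
            raise ValueError(f"unexpected storage class: {storage_class}")
        if days < 0:
            raise ValueError("days must be non-negative")
        rule_id = f"{prefix.strip('/').replace('/', '-')}-to-{storage_class.lower()}"
        rules.append(
            {
                "ID": rule_id,
                "Status": "Enabled",
                "Filter": {"Prefix": prefix},
                "Transitions": [{"Days": days, "StorageClass": storage_class}],
            }
        )
    return {"Rules": rules}


if __name__ == "__main__":
    import json

    print(json.dumps(build_lifecycle_configuration(EXAMPLE_TIERS), indent=2))
```

A dictionary of this shape can be passed as the `LifecycleConfiguration` argument of boto3's `put_bucket_lifecycle_configuration` call on an S3 client, along with the bucket name. Keeping the rule builder separate from the API call makes it easy to review the policy before applying it.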

Something blue: a data lake of television history built for our fans

It’s blue because (data) lakes are blue. Just go with it!

The last cornerstone for good luck on this project was the (blue) data lake we were building, and continue to build, to gain access to previously inaccessible media. Our data lake is filled with 2725 hours and some of our favorite moments in television history, and it has become an integral part of our fan experience.

But our job wasn’t done yet. To realize true value from the data lake, we used AWS services that enabled us to catalog, search, clip, and transcode segments from the 2725-episode archive.

At a glance: episode cataloging, CMS import, clip generation

- Full episodes were screened by a team of remote loggers via the h.264 episode proxy in a standard web browser. Segments were titled and marked with in and out points.

- Once the initial metadata was generated, we migrated that data into Team Coco’s Eisenhower platform running on other well-known AWS services like Amazon EC2, AWS Lambda, and Amazon RDS.

- From here, clips can be generated out of the episode with audio and video fades added automatically via a custom and highly scalable container encoding solution running on Amazon ECS and AWS Batch.

- Files are then stored in Amazon S3 and transcoded to high-quality MP4s for platforms like YouTube and Facebook. We also encoded HLS variable bitrate streams for TeamCoco.com (again, MediaConvert).

- Highlights are then published online where they are enjoyed by millions of Conan’s fans who may not have seen these segments for over 20 years (or ever!).
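For readers curious what clip generation from logged in and out points might look like, here is a small Python sketch that builds a command line for cutting a segment and adding audio and video fades. This post does not say which encoder runs inside the containers, so the use of FFmpeg here is purely an assumption, as are the function name `build_clip_command`, the half-second fade length, and the file names. The sketch only assembles the arguments and does not run anything.

```python
from typing import List


def build_clip_command(
    source: str,
    dest: str,
    in_point: float,
    out_point: float,
    fade: float = 0.5,
) -> List[str]:
    """Return FFmpeg arguments that cut [in_point, out_point] with fades.

    Times are in seconds. FFmpeg itself is an assumed tool for this
    illustration, not something confirmed by the post.
    """
    if out_point <= in_point:
        raise ValueError("out_point must be after in_point")
    duration = out_point - in_point
    if duration < 2 * fade:
        raise ValueError("clip is too short for the requested fades")

    # Because -ss is placed before -i, clip timestamps start at zero,
    # so the fade-out begins `fade` seconds before the clip ends.
    fade_out_start = duration - fade
    video_filter = (
        f"fade=t=in:st=0:d={fade},fade=t=out:st={fade_out_start}:d={fade}"
    )
    audio_filter = (
        f"afade=t=in:st=0:d={fade},afade=t=out:st={fade_out_start}:d={fade}"
    )
    return [
        "ffmpeg",
        "-ss", str(in_point),   # seek to the logged in point
        "-i", source,
        "-t", str(duration),    # keep only the segment length
        "-vf", video_filter,
        "-af", audio_filter,
        "-c:v", "libx264",
        "-c:a", "aac",
        dest,
    ]


if __name__ == "__main__":
    # Hypothetical file names and timings.
    print(" ".join(build_clip_command("episode.mov", "clip.mp4", 60.0, 90.0)))
```

Building the argument list as data, rather than as a shell string, keeps each job easy to validate before it is handed to a container, which suits a batch workflow where many clips are queued at once.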

Results of our AWS-based project

- Our high-quality video masters are durably stored on Amazon S3, S3 Intelligent-Tiering, S3 Glacier, and S3 Glacier Deep Archive. We rest easy knowing that our ½ PB of television history is stored in Amazon S3, which is designed for 99.999999999% (11 9’s) of durability.

- We now have a searchable and instantly viewable database of our segments within our homegrown Eisenhower platform that uses AWS to search and display segments from every single episode. The amazing part is that our staff can use Eisenhower in any desktop web browser. This significantly lowers the barriers to entry for our team and adds to their ability to work remotely.

- AWS services like MediaConvert and AWS Batch allowed us to transcode video at an immense scale.

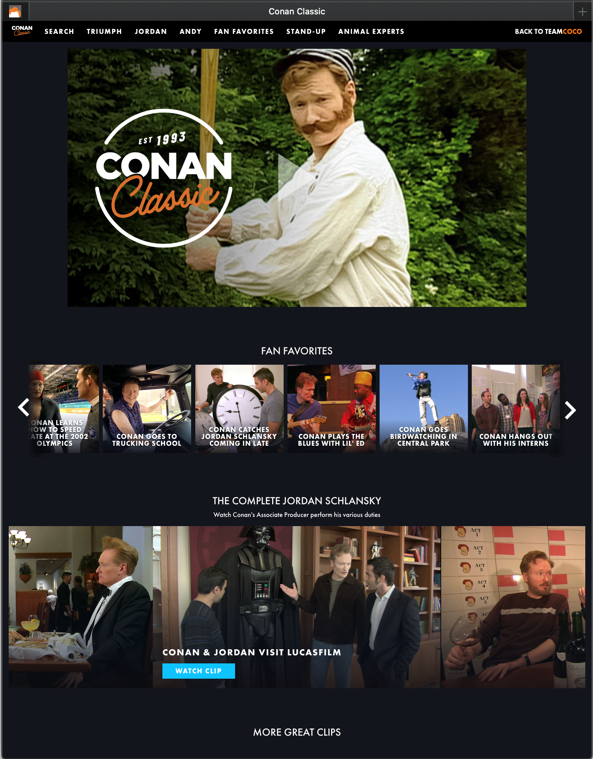

- We built a bespoke CONAN Classic web experience served out via Amazon CloudFront that gives our fans the fullest digital experience.

- Clips transcoded with AWS services are published every single week to Team Coco’s YouTube, Instagram, Facebook, and Twitter accounts, where they are being enjoyed by millions of fans.

- Having the video files in Amazon S3 opens up a whole host of possibilities for using them across the AWS product catalog. When we shifted our show to be produced from home earlier this year, we were able to spin up a virtualized post-production environment using Amazon WorkSpaces, S3, and Amazon FSx shared storage.

- Having our entire catalog in Amazon S3 allowed us to seamlessly switch to remote work, which was hugely important for business continuity purposes.

- On our roadmap for this year is an expansion into Amazon ML tools like Amazon Transcribe that will allow us to transcribe the vast archive of video content we have. We’re also excited to implement Amazon Rekognition solutions in order to unlock the vast potential of our photo archive.

In using the AWS service ecosystem, I would normally say that the sky is the limit, but even that is an understatement. Believe it or not, there’s even a service to manage a fleet of satellites if that happens to be your need (Seriously! Look at this page). Luckily for me and the rest of the Team Coco tech team, we don’t have any projects looming that would require managing an entire fleet of satellites. However, should the day ever come when we once again find ourselves in need of some good luck at the outset of a great new endeavor…we know where to turn.

Conclusion

Our famous talk show host boss at Team Coco came to us with what may very well have been an impossible task not long ago. We were able to meet the challenge head on. Our success was enabled in large part by the elastic scale that we were able to achieve with an asset and digitization partner like Iron Mountain and our long-term cloud computing partner, AWS. We hit our near-term goals of bringing fan-favorite highlights to our digital platforms. At the same time, we digitally preserved television history in incredibly durable, highly available, and easy-to-use Amazon S3 cloud storage for generations to come. Head over to CONAN Classic and enjoy the results of our project!

We hope you enjoyed the post. Please feel free to leave a comment in the comments section. For some additional info about our project, watch our talk as part of the data archiving and digital preservation solutions presentation from re:Invent 2019.

The content and opinions in this post are those of the third-party author and AWS is not responsible for the content or accuracy of this post.