AWS Partner Network (APN) Blog

How to Optimize Your AWS Workload Cost with Capgemini and Virtana

By John Bobowicz, Sr. Manager Architecture at Capgemini North America

By Bob Farzami, VP, Cloud Strategy at Virtana

By Amit Mukherjee, Solutions Architect at AWS

Imagine if your organization suddenly had a quarter-million dollars freed up in your engineering budget without having to sacrifice on performance. What would you do with it?

For a vacation-experience customer at Capgemini, the decision was easy—reinvest in the roll-out of new applications.

Capgemini is an AWS Partner Network (APN) Premier Consulting Partner and leader in digital transformation that delivers what’s next for customers through data science, strategy, and innovation. They are an AWS Managed Service Provider (MSP) with AWS Competencies in Migration, Financial Services, and SAP.

For our project with the vacation-experience customer, we worked with APN Select Technology Partner Virtana to deliver significant cost savings. Virtana empowers IT management to optimize Amazon Web Services (AWS) workload resource utilization and reinvest savings without sacrificing application performance.

It took just 10 days for the customer to realize 30 percent savings on overlooked AWS assets, resulting in an overall 10 percent optimization in AWS cost. This level of savings is a boon for any business. To do this, however, you need to be aware of the resources available.

In this post, we’ll walk you through our approach to cost optimization using principles taken from the AWS Well-Architected Framework.

Basic Steps for Cost Optimization on AWS

Here are the three steps we use at Capgemini to optimize cost for customers:

- Remove idle resources, which poses zero risk to application performance and yields immediate savings.

- Right size over-allocated resources. This should be done after proper data analysis.

- Plan Amazon EC2 Reserved Instances (RI) purchasing for resized EC2 instances to capture long-term savings.

Addressing idle resource clutter looks easy, but costs often get lost in environments lacking a proper tagging strategy. Tags created to address wasted resources also help you to properly size resources by improving capacity and usage analysis. After right sizing, committing to reserved instances gets a lot easier—most of the work required to scope out ideal contracts has been done. You then have everything needed to realize significant savings.

By following these steps, we estimate the total savings for our vacation-experience customer project to approach $2 million annually. In fact, more than $250,000 was saved in the first month alone.

Key Resources for Cost Optimization

AWS provides a thorough guide to cost optimization through the Well-Architected Framework. We recommend starting with the AWS Cost Optimization Pillar, as it provides an essential blueprint for designing your workload in a cost-effective way.

The AWS Well-Architected Framework helps cloud architects build the most secure, high-performing, resilient, and efficient infrastructure possible for their applications. This framework provides a consistent approach to evaluate architectures, and provides guidance to implement designs that scale with your application needs over time.

The Cost Optimization Pillar walks you through a series of comprehensive savings approaches to employ after going to market, such as:

- Appropriate provisioning

- Right sizing

- Purchasing options

- Geographic selection

- Managed services

- Optimizing data transfer

Most engineers, fearing hampered performance, avoid the task of cost optimization altogether until it becomes absolutely necessary. Beginning your cost optimization journey doesn’t have to be daunting, though, and Capgemini and AWS are here to help.

Capgemini’s proven expertise and methodologies, along with the AWS Trusted Advisor online tool, can perform an analysis of resources and report on any under- or over-utilized resources. You can also take advantage of AWS Managed Services to handle change management and governance.

Curious about where your environment stands? Compare the state of your workloads against AWS best practices with the new AWS Well-Architected Tool.

Using the AWS Cost Explorer

You must know your workloads on a granular level to get the most out of your cost-optimization exercise. Fortunately, AWS Cost Explorer allows you to programmatically query all kinds of useful data.

Our project relied on AWS Cost Explorer to gauge cost-optimization potential. Our partners at Virtana used these AWS API endpoints to perform their analysis:

- AWS Cost Explorer Service Endpoint for aggregated and granular usage data.

- AWS Budgets Endpoint for budget usage analysis and end-of-month predictions.

- AWS Price List Service Endpoint for all services, products, and pricing information.
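As a rough illustration of what a programmatic Cost Explorer query can look like, here is a minimal Python sketch. It assumes the boto3 SDK and valid AWS credentials for the live call; the function names and the `Environment` tag key are our own illustrative choices, not part of Virtana's tooling. The request builder and the response parser are plain functions so they can be checked without touching AWS.

```python
from datetime import date


def build_cost_query(start: date, end: date, tag_key: str) -> dict:
    """Build keyword arguments for the Cost Explorer GetCostAndUsage call."""
    if end <= start:
        raise ValueError("end must be after start (the End date is exclusive)")
    return {
        "TimePeriod": {"Start": start.isoformat(), "End": end.isoformat()},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        # Group spend by a cost allocation tag (assumed to be activated).
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def cost_by_group(response: dict) -> dict:
    """Sum UnblendedCost per group key across all returned time periods."""
    totals = {}
    for period in response.get("ResultsByTime", []):
        for group in period.get("Groups", []):
            key = group["Keys"][0]
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[key] = totals.get(key, 0.0) + amount
    return totals


def fetch_cost_by_tag(start: date, end: date, tag_key: str) -> dict:
    """Live call; needs boto3 and credentials. Ignores pagination for brevity."""
    import boto3  # imported lazily so the helpers above work without the SDK

    client = boto3.client("ce")
    response = client.get_cost_and_usage(**build_cost_query(start, end, tag_key))
    return cost_by_group(response)
```

Calling `fetch_cost_by_tag(date(2020, 1, 1), date(2020, 2, 1), "Environment")` would return spend per tag value for that month. A production version would also follow `NextPageToken` when the response is paginated.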

Now that you’re familiar with the best practices and tools available to begin cost optimization, let’s see them in action.

Phase 1: Creating Asset Awareness

In this section, we’ll learn how to prepare existing infrastructure for data collection and analysis to better understand the state of its efficiency, and set a well-contextualized foundation for building out a cloud optimization project plan.

Capgemini’s project had a mandate to architect, implement, and operationalize a new application platform. We scoped and coordinated the effort involved in setup that would allow for a deep analysis of the customer’s development, quality assurance (QA), and production environments.

In total, these environments comprised hundreds of virtual servers, or Amazon Elastic Compute Cloud (Amazon EC2) instances, spread across multiple AWS accounts. Once we understood the scope, it was time to lay the foundation of good governance.

Start with Tagging All Assets

We used tags to overlay business and organizational information onto our customer’s billing and usage data. When you apply tags to your AWS resources and activate the tags, AWS adds this information to the Cost and Usage Reports. These tags become available when accessing your billing data through the API.

After all tagging is complete, we recommend using the Consolidated Billing feature found in your AWS Organizations console. This makes tracking easy and opens up savings opportunities via volume discounts.

Need help coming up with a tagging strategy? Check out the official AWS Tagging Strategies guide.
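One way to keep a tagging strategy honest is to check an inventory against the tag keys you require. The Python sketch below is only an illustration: the required keys (`Environment`, `Application`, `Owner`) and the inventory shape (resource ID mapped to a dictionary of tags) are hypothetical and would come from your own policy and asset data.

```python
# Hypothetical tag policy; replace with the keys your organization requires.
REQUIRED_TAGS = ("Environment", "Application", "Owner")


def missing_tags(resource_tags: dict, required=REQUIRED_TAGS) -> list:
    """Return the required keys that are absent or blank on one resource."""
    return [key for key in required if not str(resource_tags.get(key, "")).strip()]


def untagged_report(resources: dict, required=REQUIRED_TAGS) -> dict:
    """Map resource ID to its missing keys, listing only non-compliant resources."""
    report = {}
    for resource_id, tags in resources.items():
        gaps = missing_tags(tags, required)
        if gaps:
            report[resource_id] = gaps
    return report
```

Resources that show up in the report are the ones whose cost would be hard to attribute later, so they are good candidates to fix before any analysis begins.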

Integrate with Virtana

We needed a cost analysis solution that would pair well with the AWS API to optimize the cost of this project with minimal impact on application performance. We chose Virtana because they deliver an ever-growing list of reporting and recommendation tools to keep your AWS portfolio optimal and lean.

Virtana’s tools helped us with:

- Bill analysis and cost alerts to detect unusual changes in billing.

- Detecting idle and unused resources.

- Identifying over-allocated capacity and right sizing the computing resources.

- Recommending RI purchases based on granular usage analysis.

- Detecting performance bottlenecks to ensure safe capacity adjustments.

Track Your Inventory

To keep tabs on inventory, we used a combination of AWS Config, Amazon CloudWatch, and AWS CloudTrail, integrating with our customer’s existing asset management systems. Virtana kept track of each asset’s relationships in its element (resource) inventory and monitored their performance.

Update Internal Processes

All assets being tagged, tracked, and integrated with Virtana meant that Capgemini was ready to create a new change management process. We started by capturing a set of application benchmarks using our customer’s QA environment. These benchmarks consisted of baseline utilization measurements of all standard components.

We then set up alerting policies in the Virtana app that would notify us of any upward deviation in memory, IOPS, or CPU utilization for a new release. A new release would not leave QA without additional justification and approval if these deviations surpassed specific thresholds. This enabled our customer to understand the operational cost impact of change.
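The logic of such a gate can be sketched in a few lines of Python. This is not Virtana's alerting policy; it is a simplified stand-in that compares a release candidate's measurements with the QA baseline and reports any metric whose relative increase exceeds a threshold. The metric names and the 10 percent thresholds in the test data are hypothetical.

```python
def release_gate(baseline: dict, candidate: dict, thresholds: dict) -> dict:
    """Return metrics whose relative increase over baseline exceeds its threshold.

    An empty result means the release may proceed without extra approval.
    """
    violations = {}
    for metric, limit in thresholds.items():
        base = baseline[metric]
        if base <= 0:
            raise ValueError(f"baseline for {metric!r} must be positive")
        increase = (candidate[metric] - base) / base
        # Only upward deviations count; improvements never block a release.
        if increase > limit:
            violations[metric] = round(increase, 4)
    return violations
```

For example, with a baseline CPU utilization of 40 percent, a candidate at 50 percent, and a 10 percent threshold, the gate reports a 25 percent increase for CPU and the release would need justification and approval.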

Next, Capgemini created a technical review process to validate any resizing recommendations given by Virtana. This included subject matter expert adjustment and sign-off before execution, since sizing recommendations don’t have full context for the resources in question.

Finally, we created a plan for removing idle resource volumes. This involved archival of idle Amazon Elastic Block Store (EBS) volumes into Amazon Simple Storage Service (Amazon S3) or Amazon Glacier until they could be fully reviewed for removal.

We also improved the automation of de-provisioning of Amazon EC2 instances. While root EBS volumes are deleted by default when an Amazon EC2 instance is terminated, additional EBS volumes persist by default. Enhanced automation ensured that these secondary EBS volumes wouldn’t get forgotten and orphaned as unattached volumes.
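To show how orphaned volumes can be found, here is a small Python sketch, assuming boto3 and credentials for the live call. It is an illustration rather than the automation we built for the customer: it lists EBS volumes in the `available` state with no attachments, which is how an unattached volume appears in the EC2 `DescribeVolumes` response. The function names are our own.

```python
def unattached_volumes(describe_volumes_response: dict) -> list:
    """Return (volume ID, size in GiB) for volumes that have no attachments."""
    found = []
    for volume in describe_volumes_response.get("Volumes", []):
        if volume.get("State") == "available" and not volume.get("Attachments"):
            found.append((volume["VolumeId"], volume["Size"]))
    return found


def fetch_unattached_volumes(region_name: str) -> list:
    """Live call; needs boto3 and credentials. Pages through all volumes."""
    import boto3  # imported lazily so the parser above works without the SDK

    ec2 = boto3.client("ec2", region_name=region_name)
    paginator = ec2.get_paginator("describe_volumes")
    found = []
    pages = paginator.paginate(Filters=[{"Name": "status", "Values": ["available"]}])
    for page in pages:
        found.extend(unattached_volumes(page))
    return found
```

A list like this is a starting point for review, not a delete list; as described above, idle volumes were archived until they could be fully reviewed for removal.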

With those governance tasks complete, we were ready to tackle right sizing the hosted cloud platform and ultimately optimize its resource usage.

Phase 2: Turning Data into Strategy

In this section, we’ll explore how to use the well-contextualized data collected from Phase 1 to discover opportunities for improvement and develop a cloud optimization strategy.

When most people think of optimizing for cost, their initial thoughts are to size instances down—but that’s not always the case. Virtana’s EC2 Recommendation Report discovered areas where it was more cost-effective for our customer to size up their instances.

Determine Your Right Sizing Requirements

Start with your historic workload patterns. We analyzed utilization measurements in CPU, memory, I/O, and network for this project. The screenshot in Figure 1, taken from Virtana’s EC2 Recommendation Report, shows five live instances that needed to be resized.

This report analyzes data ingested from:

- Customer’s detailed billing CSV files to ascertain current cost and element metadata.

- AWS Price List Service Endpoint to compare current spend with all of the available SKUs in the AWS product line.

Figure 1 – Virtana’s EC2 Recommendation Report table.

If the instances in Figure 1 were in your portfolio, you’d net over $63,000 in yearly savings—on just those five instances. That’s not including additional savings to be gained by converting those instances from on-demand to reserved.

Virtana calculated the projected savings by cross-referencing the customer’s given instance types with all possible compatible SKU permutations (over 400,000). Those permutations were boiled down to a list that would meet capacity requirements. Finally, that list was refined to the single, most-optimal instance type by applying any additional constraints set by the report user.
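The filter-then-refine idea can be sketched in Python. This is a simplified stand-in, not Virtana's algorithm: it keeps the candidates that meet the capacity requirements, applies an optional user constraint, and picks the cheapest. The candidate names and prices in the usage example are placeholders, not real AWS instance types or rates.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    vcpus: int
    memory_gib: float
    hourly_usd: float  # placeholder price, not a real AWS rate


def pick_instance(
    candidates: Iterable[Candidate],
    need_vcpus: int,
    need_memory_gib: float,
    allowed_prefixes: Optional[Sequence[str]] = None,
) -> Optional[Candidate]:
    """Return the cheapest candidate meeting capacity and optional constraints."""
    # Step 1: keep only candidates that satisfy the capacity requirements.
    fits = [
        c for c in candidates
        if c.vcpus >= need_vcpus and c.memory_gib >= need_memory_gib
    ]
    # Step 2: apply an extra user constraint (here, an allowed name prefix).
    if allowed_prefixes:
        fits = [c for c in fits if c.name.startswith(tuple(allowed_prefixes))]
    # Step 3: refine to the single lowest-cost option, or None if nothing fits.
    return min(fits, key=lambda c: c.hourly_usd, default=None)
```

A usage example with made-up data:

```python
catalog = [
    Candidate("family-a.small", 2, 4, 0.05),
    Candidate("family-a.large", 4, 16, 0.20),
    Candidate("family-b.large", 4, 16, 0.18),
]
best = pick_instance(catalog, need_vcpus=4, need_memory_gib=12)
print(best.name)  # family-b.large
```

Note that the capacity requirement can push the choice to a larger type than the current one, which is why right sizing sometimes means sizing up.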

Note that the level of insight achieved at this stage is directly dependent upon having your billing accounts consolidated and all assets consistently tagged. By having everything prepared for analysis, you can:

- Determine requirements for each and every Amazon EC2 instance.

- Select configurations best suited for each specific workload.

Check for Idle Resources

Consider how often your environment changes. It’s difficult to keep track of whether every resource purchased is being used. A couple of unattached Elastic Load Balancers, some old EBS snapshots, and a few idle Amazon EC2 instances may not be a huge issue, but scale this problem up. Larger projects with multiple environments and ephemeral instances often harbor significant wasteful spending.

For this project, Virtana’s suite of Idle Resource Reports exposed 1,152 unused resources, which created $250,068 in instant savings for our customer.

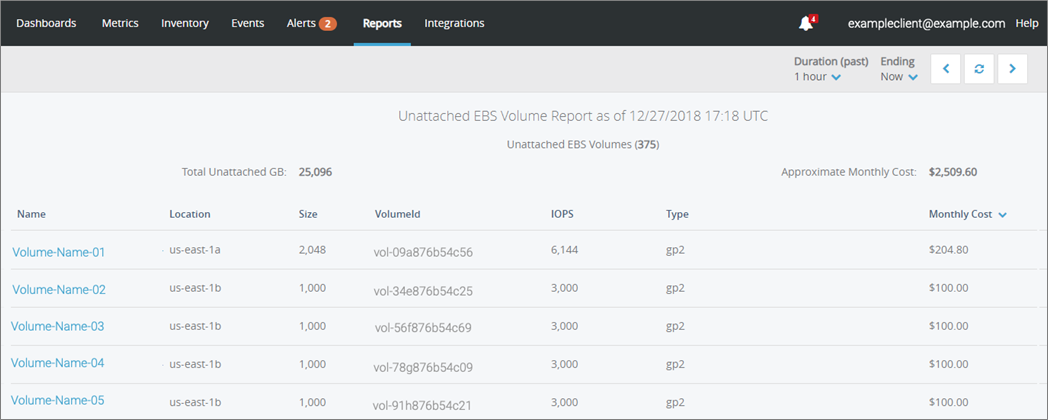

In Figure 2, you can see an example screenshot of the Unattached EBS Volume Report in Virtana; this report uses detailed billing to obtain cost data and the AWS Cost Explorer Service Endpoint to obtain element metadata.

Figure 2 – Results from Virtana’s Unattached EBS Volume Report.

There are currently three Idle Resource Reports available in Virtana that look for:

- Unattached EBS volumes

- Unattached Elastic Load Balancers

- EBS volumes on stopped Amazon EC2 instances

Examine Results

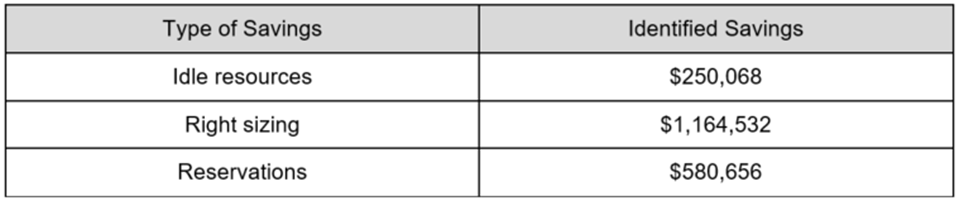

The total savings results are shown in Figure 3 and were made possible by utilizing Capgemini’s implemented governance processes, AWS services, and Virtana’s analysis platform.

Figure 3 – Total savings results calculated using tools from Capgemini, Virtana, and AWS.

Phase 3: Maintaining the Success

In this section, we’ll create the internal processes that enable the practice of cloud optimization to persist within your established workflow.

Building a culture of proactive cost-awareness is not easy when the emphasis in our industry is often on project delivery speed, application performance, and platform stability. Once you’ve finally got the buy-in and managed to complete an initial savings project, how do you prevent your applications from gradually returning to an expensive, less-optimal state?

Use Gates to Implement Change

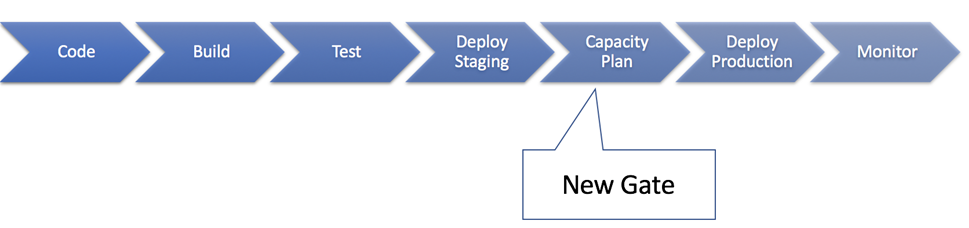

Suppose you have a new release being deployed. During user acceptance testing (UAT), your capacity measurements reveal the new software version consumes computing resources at a much higher rate. This would be the right time to decide between rolling back to a previous version or adjusting your budget and upgrading.

Use this opportunity to create a gate that adds governance for capacity (and cost) management.

Figure 4 – Create a gate to add governance for capacity and cost management.

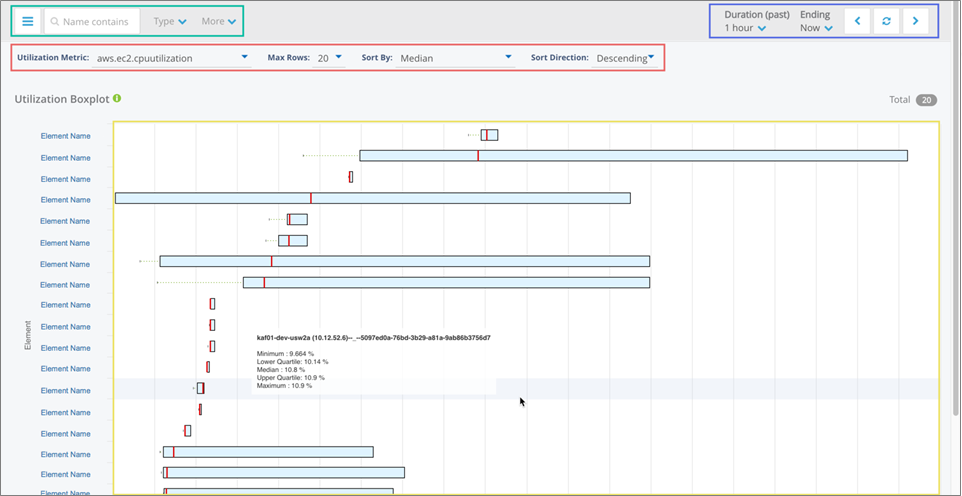

We measured resource consumption for this project using Virtana’s Utilization Boxplot Report. This report collects utilization data through the AWS Cost Explorer Service Endpoint, aggregating it over time. Figure 5 shows a list of resources on the left, with quartile boxes for each resource which gauge the min-max utilization (as well as the median).

Figure 5 – Virtana’s Utilization Boxplot report shows resource utilization over time.
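For readers unfamiliar with boxplots, the values each box represents can be computed with Python's standard library. The sketch below is only an illustration of the statistic, not of Virtana's report; the utilization samples would come from your own monitoring data, and the function name is our own.

```python
from statistics import quantiles


def boxplot_summary(samples: list) -> dict:
    """Return the five-number summary (min, Q1, median, Q3, max) of the samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to compute quartiles")
    # method="inclusive" treats the samples as the full observed range,
    # so the quartiles never fall outside min..max.
    q1, median, q3 = quantiles(samples, n=4, method="inclusive")
    return {
        "min": min(samples),
        "q1": q1,
        "median": median,
        "q3": q3,
        "max": max(samples),
    }
```

A resource whose third quartile sits far below its provisioned capacity is a natural candidate for a right-sizing review.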

Measure Performance Before and After Changes

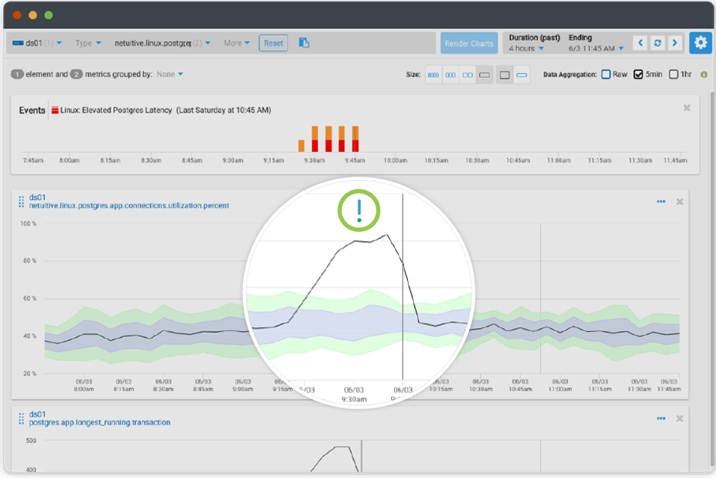

This post is about cost, but we’d like to point out that Virtana isn’t just a cost-optimization solution. Virtana detected a major performance bottleneck for us before the customer’s pre-existing monitoring solution did, thanks to its set of default alerting policies that look for common infrastructure performance issues.

These policies are backed by machine learning anomaly detection algorithms that operate in real-time to learn the expected behavior of each metric. This is achieved within the context of other metrics for a given resource, for each individual resource.

The expected behavior is called a contextual band, and when using Virtana’s monitoring dashboards it can be seen as the thick blue line on a metric widget.

Figure 6 – A dashboard metric widget using Virtana’s anomaly detection capability.

Because Virtana detected the issue so quickly, we were able to bring in Virtana’s support team early to help troubleshoot.

This incident ended up becoming a case study which was presented during a CTO council meeting to highlight the benefits of managing infrastructure cost, capacity, and performance together.

Leverage Your Partnerships

We believe in overcoming obstacles by leveraging partnerships such as the AWS Partner Network—where Capgemini and Virtana came together to collaborate.

The following combination allowed us to optimize cost while rolling out a demanding project:

- AWS’s Cost Explorer Service API.

- Virtana’s cost-optimization platform.

- Capgemini’s cloud expertise, governance, and change management processes.

The process of right sizing comes with a great deal of responsibility. Implementing resource changes can adversely affect performance if executed without the right tools, data insights, partnerships, and of course a supportive client.

Conclusion

At Capgemini, we recommend laying the proper foundation at the onset of any cloud migration project, regardless of your application architecture, so you can avoid wasting money and maintain a cost-conscious operational culture over time.

To recap, here are the most important steps to remember:

- Eliminate waste from your current platform by removing idle and over-allocated capacity.

- Purchase Reserved Instances (RIs) after you have stabilized your requirements.

- Monitor for performance bottlenecks that may be caused by capacity change.

- Implement a new gate in your CI/CD process to detect and plan for capacity changes.

It’s never too early to implement these tools and processes to avoid waste. After all, your budget is best spent on reinvesting in innovation projects.

Capgemini – APN Partner Spotlight

Capgemini is an APN Premier Consulting Partner. They create and deliver business, technology, and digital solutions that fit customers’ needs, enabling them to achieve innovation and competitiveness.

Virtana – APN Partner Spotlight

Virtana is an APN Select Technology Partner and leading hybrid cloud optimization platform for digital transformation.
