AWS Partner Network (APN) Blog

VMware Cloud on AWS Hybrid Network Design Patterns

By Sheng Chen, Sr. Specialist Solutions Architect at AWS

VMware Cloud on AWS enables customers to deploy VMware’s software-defined data centers (SDDCs) and consume vSphere workloads on Amazon Web Services (AWS) global infrastructure as a managed service. Jointly engineered by VMware and AWS, VMware Cloud on AWS brings a true hybrid cloud experience to customers.

As customers continue to adopt VMware Cloud on AWS, it’s become important to provide scalable and reliable hybrid connectivity to help integrate their SDDCs with on-premises and cloud-native services. Customers should also be able to expand connectivity globally to seamlessly support their business demands.

VMware Cloud on AWS customers also have network security requirements, such as network encryption, firewall integration, and traffic segmentation, that the network design must fulfill.

In this post, I will explore VMware Cloud on AWS hybrid network design patterns and considerations. I’ll go through various network architecture design options and use cases that address customer requirements.

AWS Direct Connect

AWS Direct Connect is a cloud networking service that provides dedicated connectivity from your premises to AWS. Using AWS Direct Connect, you can establish secure and private connections between AWS and your on-premises environment, with flexible connection types and speeds from 50Mbps to 100Gbps. As a result, it provides more consistent network performance, increased throughput, and reduced data transfer out (DTO) costs compared with internet-based connections.

For these reasons, we recommend that customers deploy AWS Direct Connect to connect their on-premises environment with VMware Cloud on AWS SDDCs and cloud-native services. You can refer to this AWS blog post on how to build Direct Connect connections into VMware Cloud on AWS following best practices.

Private Virtual Interface (Private VIF)

With an AWS Direct Connect dedicated connection, you can use industry-standard 802.1Q VLANs to create up to 50 logical partitions, called virtual interfaces (VIFs), over the physical link. There are three types of VIF: private, public, and transit.

If you have a small cloud footprint that includes a single SDDC and a few Amazon Virtual Private Clouds (Amazon VPCs), you can create and assign one private VIF for each VPC and SDDC to establish connectivity back to the on-premises environment.

A private VIF is established between a customer gateway and a Virtual Private Gateway within the VPC. In the case of VMware Cloud on AWS, the private VIF is terminated on the Virtual Private Gateway within the VMware-managed shadow VPC that hosts the SDDC. A Border Gateway Protocol (BGP) session established over the private VIF is used to learn and advertise routes between on-premises and the AWS Cloud.

Figure 1 – Connecting VPCs and SDDC using Private VIFs.

Although this design provides simple private connectivity, it does not offer any VPC-to-VPC or VPC-to-SDDC transitive routing within the AWS region. Traffic between these networks must hairpin through the on-premises customer gateway, which increases latency and can create a throughput bottleneck at that gateway.

Moreover, because each private VIF requires a separate BGP session, this design adds management overhead and limits overall network scalability.
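To make the scaling argument concrete, here is a minimal Python sketch that counts BGP sessions in each model and shows the hairpin path. It assumes a single Direct Connect connection (redundant connections would multiply both counts) and treats the 50-VIF figure above as the only limit; `Footprint` and the function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

MAX_VIFS_PER_CONNECTION = 50  # per-connection VIF limit quoted in the text


@dataclass
class Footprint:
    vpcs: int   # customer VPCs that need on-premises reachability
    sddcs: int  # SDDCs that need on-premises reachability


def private_vif_bgp_sessions(fp: Footprint) -> int:
    """One private VIF, and therefore one BGP session, per VPC and per SDDC."""
    needed = fp.vpcs + fp.sddcs
    if needed > MAX_VIFS_PER_CONNECTION:
        raise ValueError(f"{needed} VIFs exceed {MAX_VIFS_PER_CONNECTION} per connection")
    return needed


def transit_vif_bgp_sessions(fp: Footprint) -> int:
    """One transit VIF to a Direct Connect Gateway serves every attached network."""
    return 1 if fp.vpcs + fp.sddcs > 0 else 0


def private_vif_path(src: str, dst: str) -> List[str]:
    """Without transitive routing in the Region, cloud-to-cloud traffic hairpins on premises."""
    return [src, "private VIF", "customer gateway", "private VIF", dst]
```

For example, three VPCs plus one SDDC need four BGP sessions with private VIFs, but only one with a transit VIF.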

Transit Virtual Interface (Transit VIF)

To achieve a more scalable network design, customers can deploy a single transit VIF instead of multiple private VIFs; transit VIFs are now supported on all Direct Connect connection types and speeds. In this case, a single BGP session is established over the transit VIF and terminated on a Direct Connect Gateway. The Direct Connect Gateway is associated with an AWS Transit Gateway, which provides transitive routing between VPCs, and between on-premises networks and VPCs, in the same AWS region.

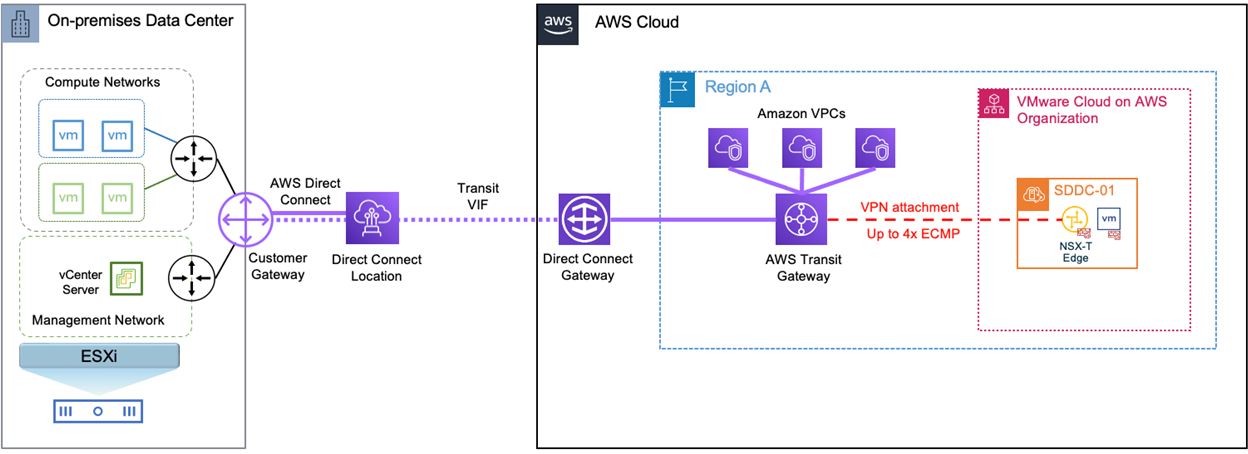

In addition, you can establish a virtual private network (VPN) between the SDDC’s NSX Edge appliance and the AWS Transit Gateway using a VPN attachment. The AWS Transit Gateway can then route traffic between the SDDC, VPCs, and on premises over the same transit VIF. The NSX Edge supports up to four route-based IPsec VPN tunnels with Equal-Cost Multi-Path (ECMP) routing for increased bandwidth and resiliency.

Figure 2 – Connecting VPCs and SDDC using Transit VIF with Transit Gateway.

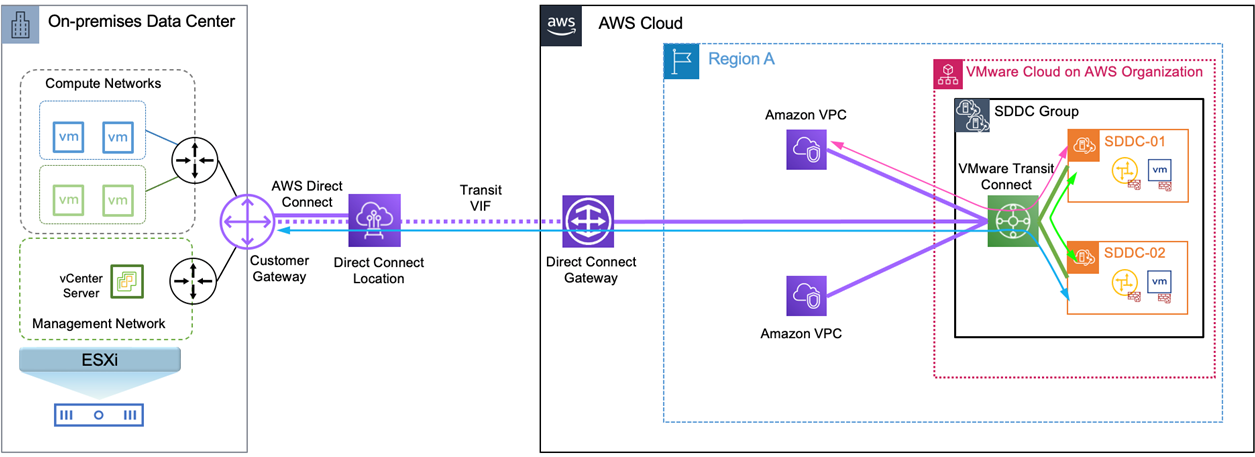
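ECMP typically spreads traffic per flow rather than per packet, so a single flow stays on one tunnel while many flows share all tunnels. The sketch below illustrates that idea by hashing the 5-tuple. It is an assumption-laden model: SHA-256 is only a stand-in, since the actual hash used by the NSX Edge or the Transit Gateway is not described here, and the tunnel names are hypothetical.

```python
import hashlib
from typing import List, NamedTuple

MAX_NSX_ECMP_TUNNELS = 4  # per the text: up to four route-based tunnels at the NSX Edge


class Flow(NamedTuple):
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def pick_tunnel(flow: Flow, tunnels: List[str]) -> str:
    """Map a flow to one tunnel by hashing its 5-tuple.

    Every packet of the same flow lands on the same tunnel (no reordering),
    while different flows spread across all equal-cost tunnels.
    """
    if not 1 <= len(tunnels) <= MAX_NSX_ECMP_TUNNELS:
        raise ValueError("expected between 1 and 4 tunnels")
    key = "|".join(str(field) for field in flow).encode()
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return tunnels[bucket % len(tunnels)]


if __name__ == "__main__":
    tunnels = ["tunnel-1", "tunnel-2", "tunnel-3", "tunnel-4"]  # hypothetical names
    counts = {t: 0 for t in tunnels}
    for port in range(40000, 41000):
        flow = Flow("10.10.1.5", "192.168.1.20", 6, port, 443)
        counts[pick_tunnel(flow, tunnels)] += 1
    print(counts)  # roughly even spread; exact numbers depend on the hash
```

Because hashing is per flow, a single large transfer is still capped at one tunnel's throughput; the aggregate bandwidth gain comes from running many flows in parallel.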

Customers with multiple SDDCs requiring high-bandwidth connectivity can use VMware Transit Connect to provide high-speed communication between the SDDCs.

Transit Connect is a VMware-managed AWS Transit Gateway solution that interconnects SDDCs within an SDDC group and establishes resilient connectivity to on premises over a Direct Connect Gateway. It eliminates VPN management overhead and helps customers simplify network operations at scale through automatic route propagation to the route tables in each SDDC.

Figure 3 – VMware Transit Connect.

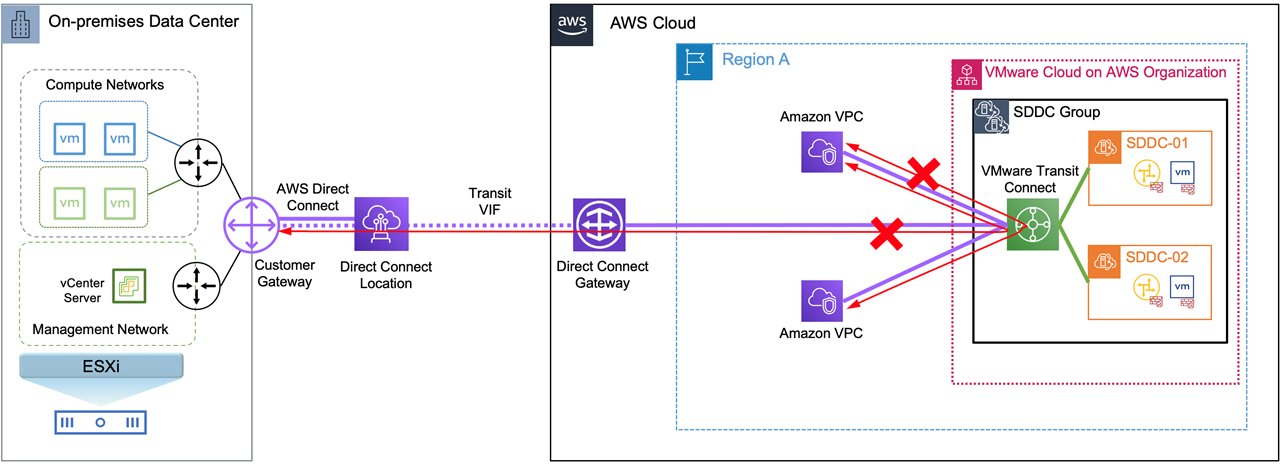

However, it’s important to note that VMware Transit Connect only supports traffic that originates from or is destined to resources within an SDDC. As shown in the diagram below, all inter-VPC traffic, and all traffic between VPCs and on premises, is dropped at Transit Connect.

Figure 4 – Transit Connect unsupported flow patterns.
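The restriction reduces to a single rule: at least one end of the flow must be inside an SDDC. This minimal sketch encodes that rule as stated above; the `Endpoint` enum and function name are hypothetical.

```python
from enum import Enum


class Endpoint(Enum):
    SDDC = "SDDC"
    VPC = "VPC"
    ON_PREMISES = "on-premises"


def transit_connect_forwards(src: Endpoint, dst: Endpoint) -> bool:
    """True only when at least one end of the flow is inside an SDDC."""
    return Endpoint.SDDC in (src, dst)


if __name__ == "__main__":
    # List every flow pattern that Transit Connect drops.
    for src in Endpoint:
        for dst in Endpoint:
            if not transit_connect_forwards(src, dst):
                print(f"dropped at Transit Connect: {src.value} -> {dst.value}")
```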

Scalable Network Architecture within AWS

Leverage AWS Transit Gateway Intra-Region Peering

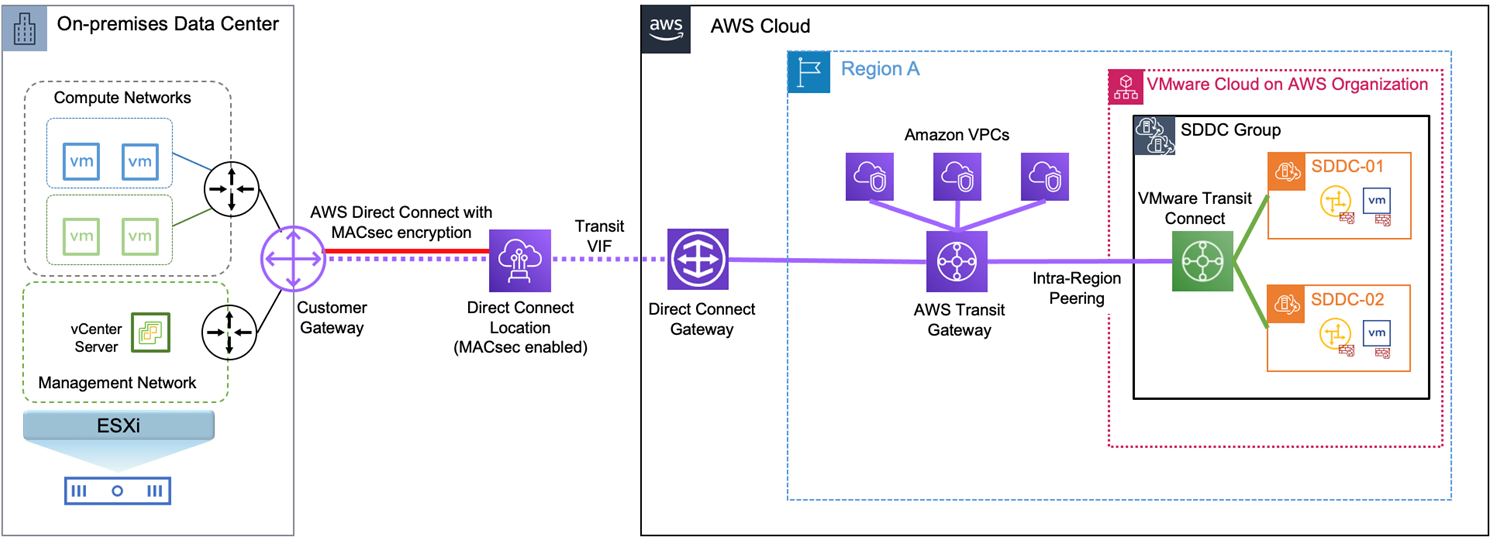

During AWS re:Invent 2021, we announced Transit Gateway intra-region peering, which can overcome the VMware Transit Connect flow restrictions. This new capability brings external connectivity from VPCs and the transit VIF into SDDC groups by leveraging intra-region peering between AWS Transit Gateway and VMware Transit Connect.

VPC-to-VPC and VPC-to-on-premises traffic is routed directly by the AWS Transit Gateway, bypassing the VMware Transit Connect restrictions. SDDC-to-VPC and SDDC-to-on-premises traffic traverses VMware Transit Connect and then the AWS Transit Gateway via the intra-region peering attachment.

Figure 5 – Connecting AWS Transit Gateway and VMware Transit Connect using intra-region peering.
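The routing behavior described above can be summarized as a path function. This is a simplified sketch, not an AWS or VMware API: the endpoint kinds ("sddc", "vpc", "onprem") and hop labels are hypothetical, on-premises traffic is assumed to arrive over the transit VIF and Direct Connect Gateway, and SDDC-to-SDDC traffic is assumed to stay within one SDDC group.

```python
from typing import List

KINDS = {"sddc", "vpc", "onprem"}


def transit_hops(src: str, dst: str) -> List[str]:
    """Return the AWS-side transit hops for a flow between two endpoint kinds."""
    if src not in KINDS or dst not in KINDS:
        raise ValueError(f"unknown endpoint kind: {src!r} or {dst!r}")
    if src == dst == "onprem":
        raise ValueError("on-premises to on-premises traffic does not enter AWS")
    if src == dst == "sddc":
        hops = ["VMware Transit Connect"]  # SDDC-to-SDDC within the SDDC group
    elif src == "sddc":
        hops = ["VMware Transit Connect", "intra-region peering", "AWS Transit Gateway"]
    elif dst == "sddc":
        hops = ["AWS Transit Gateway", "intra-region peering", "VMware Transit Connect"]
    else:
        # VPC-to-VPC and VPC-to-on-premises bypass Transit Connect entirely.
        hops = ["AWS Transit Gateway"]
    if src == "onprem":
        hops = ["transit VIF", "Direct Connect Gateway"] + hops
    if dst == "onprem":
        hops = hops + ["Direct Connect Gateway", "transit VIF"]
    return hops
```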

Expand to Multi-Region with AWS Transit Gateway Inter-Region Peering

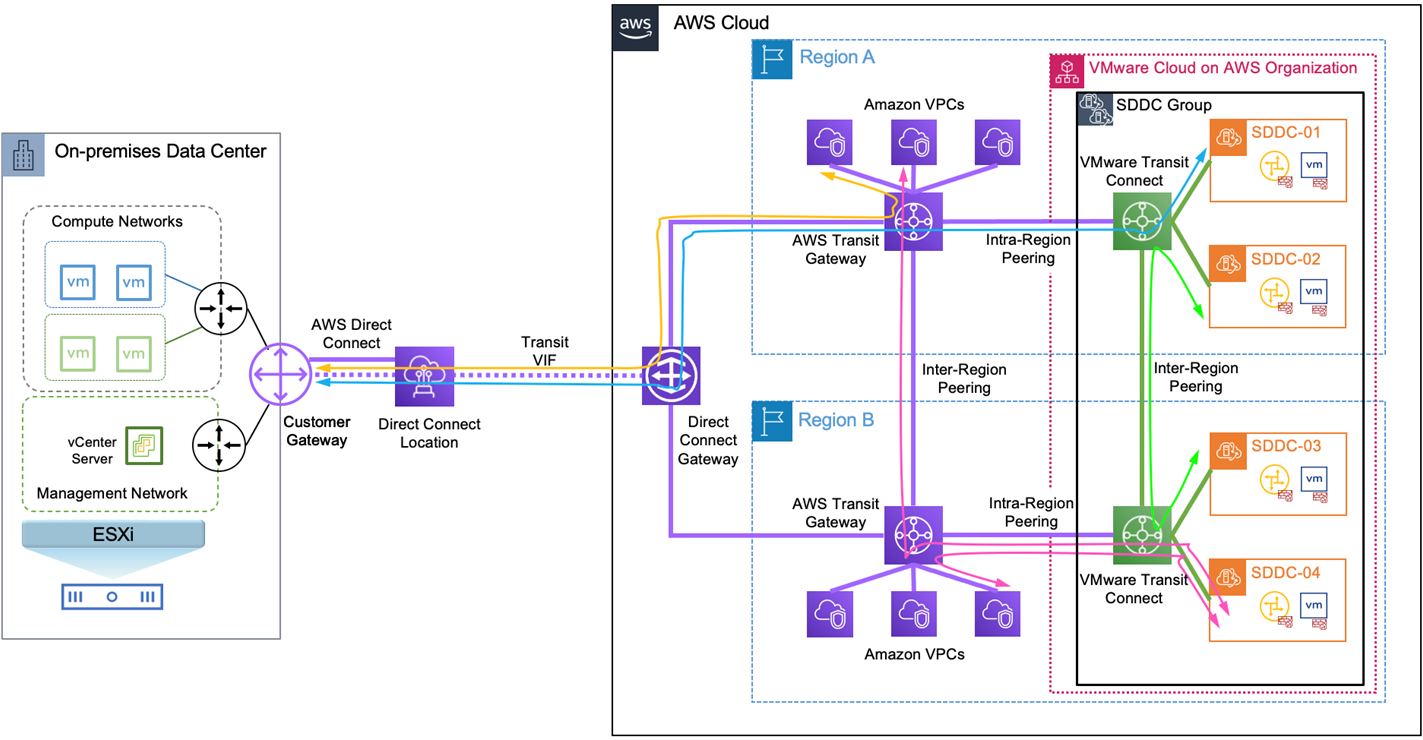

Customers with multiple SDDCs and VPCs across multiple AWS regions can expand hybrid connectivity to a global scale by using Transit Gateway inter-region peering. Because AWS Direct Connect Gateway is a global resource, it can be associated with up to three Transit Gateways, establishing connectivity between multiple AWS regions and on premises over the existing transit VIF.

Furthermore, by combining Transit Gateway intra-region and inter-region peering, you can build connectivity between SDDCs, VPCs, and on premises across the same or different AWS regions. The following diagram illustrates various traffic flows in a multi-region deployment that share the same Direct Connect connection.

Figure 6 – Shared Direct Connect connection for VPCs and SDDCs in a multi-region deployment.

Customers with latency-sensitive requirements between on premises and SDDCs, or who prefer a dedicated uplink into SDDC groups, can deploy a separate Direct Connect connection with a different transit VIF to connect their VMware Transit Connect.

Figure 7 – Separate Direct Connect connections for VPCs and SDDCs in a multi-region deployment.

This design offloads SDDC-to-on-premises traffic to a different Direct Connect Gateway and transit VIF, reducing the potential bottleneck at the intra-region peering. It provides maximum scalability, and the SDDCs in each region have up to 50Gbps of bandwidth for accessing resources inside VPCs.

Network Encryption

Some customers plan to migrate sensitive workloads into SDDCs or require encryption of data in transit. In this case, you can build IPsec VPNs over the internet or, for more reliable and consistent performance, over an AWS Direct Connect public VIF.

We recommend running centralized VPN tunnels from your on-premises gateway to the AWS Transit Gateway (rather than to each SDDC) and then bringing traffic into SDDCs through the intra-region peering. Each IPsec tunnel provides a maximum throughput of 1.25Gbps, and customers can use ECMP across multiple tunnels to scale VPN throughput to the AWS Transit Gateway up to 50Gbps. This design avoids the management overhead of establishing VPN tunnels into individual SDDCs, as well as the VPN restrictions on VMware Transit Connect.
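The 1.25Gbps-per-tunnel and 50Gbps figures make capacity planning simple arithmetic. The sketch below sizes the number of tunnels for a target throughput. It assumes that each VPN connection contributes two tunnels and that ECMP uses all of them actively; the function name is hypothetical.

```python
import math
from typing import Tuple

TUNNEL_GBPS = 1.25      # per-tunnel maximum stated in the text
ECMP_CEILING_GBPS = 50  # aggregate ceiling stated in the text
TUNNELS_PER_VPN = 2     # assumption: two tunnels per VPN connection


def vpn_plan(target_gbps: float) -> Tuple[int, int, float]:
    """Return (tunnels, VPN connections, resulting capacity in Gbps)."""
    if not 0 < target_gbps <= ECMP_CEILING_GBPS:
        raise ValueError(f"target must be in (0, {ECMP_CEILING_GBPS}] Gbps")
    tunnels = math.ceil(target_gbps / TUNNEL_GBPS)
    connections = math.ceil(tunnels / TUNNELS_PER_VPN)
    return tunnels, connections, tunnels * TUNNEL_GBPS


if __name__ == "__main__":
    print(vpn_plan(50))  # (40, 20, 50.0)
    print(vpn_plan(3))   # (3, 2, 3.75)
```

Reaching the 50Gbps ceiling therefore takes 40 tunnels, which illustrates why terminating them once on the Transit Gateway is preferable to repeating them for every SDDC.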

In June 2022, AWS announced the Private IP VPN capability, which provides an alternative option to encrypt Direct Connect traffic between on premises and AWS over a transit VIF without the need for public IP addresses. This feature improves your overall security posture and enhances network privacy. Additionally, customers have the flexibility to use private IP VPN over Direct Connect as the primary path and VPN over the internet as a backup path.

Figure 8 – Use Private IP VPN as primary path and VPN over Internet as backup.
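One common way to make one path primary is for the on-premises router to prepend its AS number on the backup path's BGP advertisements, so the shorter AS path wins while both paths are up. The sketch below models that selection in a simplified way; it is an assumption about how you might configure path preference, not a description of the full Transit Gateway route evaluation order. The `via` labels are hypothetical, and all candidates are assumed to be for the same prefix.

```python
from dataclasses import dataclass, replace
from typing import List, Optional


@dataclass(frozen=True)
class VpnRoute:
    prefix: str       # all candidates passed to best_path are for this same prefix
    via: str          # hypothetical labels: "private-ip-vpn" or "internet-vpn"
    as_path_len: int  # on premises prepends extra ASNs to the backup path
    up: bool = True   # tunnel and BGP session state


def best_path(candidates: List[VpnRoute]) -> Optional[VpnRoute]:
    """Pick the live route with the shortest AS path; None if every path is down."""
    live = [r for r in candidates if r.up]
    return min(live, key=lambda r: r.as_path_len, default=None)


if __name__ == "__main__":
    primary = VpnRoute("10.0.0.0/8", "private-ip-vpn", as_path_len=1)
    backup = VpnRoute("10.0.0.0/8", "internet-vpn", as_path_len=3)
    print(best_path([primary, backup]).via)                     # private-ip-vpn
    print(best_path([replace(primary, up=False), backup]).via)  # internet-vpn
```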

With the introduction of MACsec (IEEE 802.1AE) in 2021, AWS Direct Connect offers a new encryption option for customers running 10Gbps or 100Gbps dedicated connections at select locations. MACsec provides native, point-to-point link protection at layer 2 by encrypting each frame’s EtherType and payload.

MACsec encryption provides the following benefits:

- Ensures confidentiality and integrity, protecting Ethernet links from threats such as man-in-the-middle snooping and passive wiretapping.

- Encrypted data is transmitted at near line rate.

- Open standard: IEEE 802.1AE is supported by multiple vendors.

- Eliminates the complexity of managing multiple IPsec VPN tunnels.

As shown in the following diagram, MACsec encryption is configured transparently at layer 2 between the AWS Direct Connect device and the customer gateway. No VPN overlay is required, and all layer 3 and higher payloads are encrypted before they arrive at the AWS network.

Figure 9 – Direct Connect with MACsec encryption.
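The "near line rate" claim can be sanity-checked with per-frame overhead arithmetic. This sketch compares payload goodput with and without MACsec. The MACsec sizes are assumptions (a 16-byte SecTAG that carries the secure channel identifier, plus a 16-byte integrity check value), and encryption itself is assumed not to limit throughput.

```python
PREAMBLE_SFD = 8      # 7-byte preamble + 1-byte start-of-frame delimiter
MAC_HEADER = 14       # destination MAC, source MAC, EtherType
FCS = 4               # frame check sequence
INTER_FRAME_GAP = 12  # minimum idle time between frames, in byte times
SECTAG = 16           # assumption: MACsec SecTAG including the 8-byte SCI
ICV = 16              # assumption: 16-byte integrity check value


def goodput_gbps(line_rate_gbps: float, payload_bytes: int, macsec: bool) -> float:
    """Payload throughput after per-frame Ethernet (and optional MACsec) overhead."""
    overhead = PREAMBLE_SFD + MAC_HEADER + FCS + INTER_FRAME_GAP
    if macsec:
        overhead += SECTAG + ICV
    return line_rate_gbps * payload_bytes / (payload_bytes + overhead)


if __name__ == "__main__":
    for macsec in (False, True):
        print(f"MACsec={macsec}: {goodput_gbps(100, 1500, macsec):.2f} Gbps")
```

With 1500-byte payloads the extra 32 bytes per frame cost about two percentage points of a 100Gbps link; smaller packets pay proportionally more.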

Network Security and Segmentation

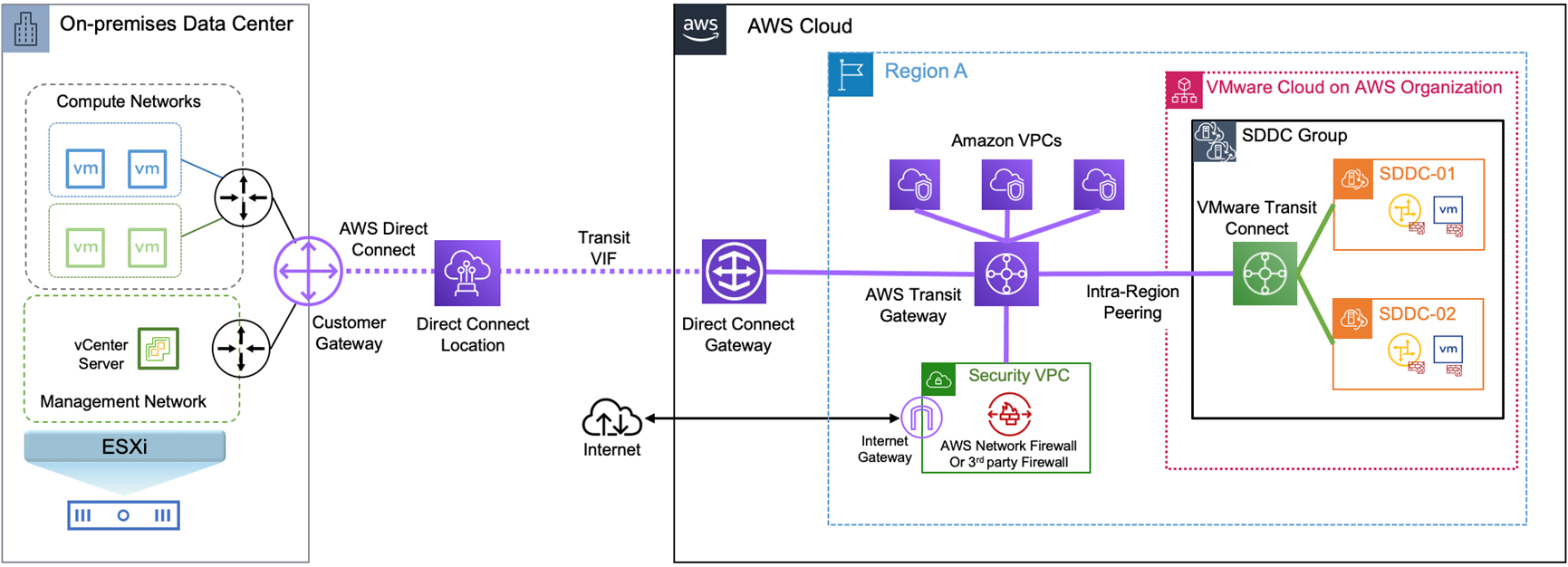

Using AWS Transit Gateway intra-region peering, customers can integrate a security VPC with the SDDC group for centralized traffic inspection. You can deploy the highly available and scalable AWS Network Firewall service, or third-party firewall appliances behind a Gateway Load Balancer, into the security VPC.

With this design, the following traffic flows can be inspected using the security VPC:

- Between SDDC and VPC

- Between SDDC and on-premises

- Between SDDC and internet

- Between VPC and VPC

- Between VPC and on-premises

- Between VPC and internet
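Centralized inspection usually relies on two Transit Gateway route tables: a spoke table whose default route points at the security VPC, and a firewall table that forwards inspected traffic to its real destination. The sketch below models that with longest-prefix matching. The CIDRs and attachment names are hypothetical, and internet egress from the security VPC (for example through NAT) is assumed rather than modeled.

```python
import ipaddress
from typing import List, Tuple

Route = Tuple[str, str]  # (destination CIDR, target attachment)

# Hypothetical address plan and attachment names, for illustration only.
SPOKE_RT: List[Route] = [
    ("0.0.0.0/0", "security-vpc-attachment"),  # send everything to inspection first
]
FIREWALL_RT: List[Route] = [
    ("10.10.0.0/16", "transit-connect-peering"),  # SDDC group
    ("10.20.0.0/16", "app-vpc-attachment"),
    ("192.168.0.0/16", "dx-gateway-attachment"),  # on premises
]


def lookup(table: List[Route], dst_ip: str) -> str:
    """Longest-prefix match of dst_ip against a route table."""
    addr = ipaddress.ip_address(dst_ip)
    matches = [(ipaddress.ip_network(cidr), target) for cidr, target in table
               if addr in ipaddress.ip_network(cidr)]
    if not matches:
        raise LookupError(f"no route to {dst_ip}")
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def forward(dst_ip: str) -> List[str]:
    """Spoke traffic goes to the security VPC first, then on to its destination."""
    first_hop = lookup(SPOKE_RT, dst_ip)
    try:
        second_hop = lookup(FIREWALL_RT, dst_ip)
    except LookupError:
        second_hop = "internet egress from security VPC"  # assumed, not modeled
    return [first_hop, second_hop]
```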

Note that east-west traffic inspection between SDDCs within the same SDDC group is not currently supported. More details of firewall deployment models and best practices are available in these AWS blog posts:

- Deployment models for AWS Network Firewall

- Centralized inspection architecture with AWS Gateway Load Balancer and AWS Transit Gateway

Figure 10 – Integrating with a security VPC for centralized traffic inspection.

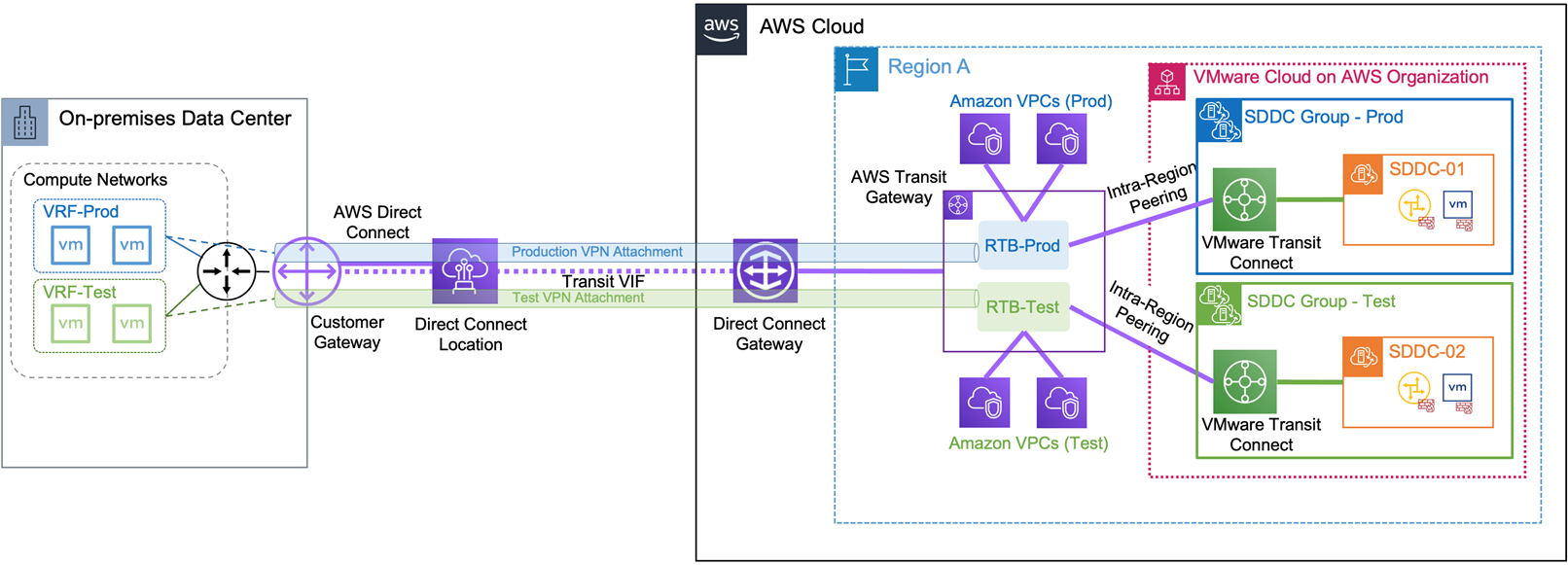

In addition to the network encryption use case, another possible scenario is to use Private IP VPNs for traffic segmentation. You can extend different on-premises virtual routing and forwarding instances (VRFs) to the Transit Gateway by creating one Private IP VPN attachment per VRF.

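In the example below, two separate Transit Gateway route tables are created for the Production and Non-Production environments. The Production VPCs, the Production VPN attachment, and the intra-region peering attachment from the Production Transit Connect are associated with the Production route table, and their routes are installed in it (by propagation for the VPC and VPN attachments, and as static routes for the peering attachment, because Transit Gateway peering attachments do not support route propagation).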

A similar configuration is applied to the Non-Production route table. This creates an end-to-end network segmentation from on-premises VRFs to VPCs and SDDCs for each corresponding environment.

Figure 11 – Traffic Segmentation with Private IP VPNs.
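The segmentation works because an attachment can only reach destinations whose routes exist in the one route table it is associated with. The toy model below illustrates that rule. The class, attachment names, and table names are hypothetical, and route installation is simplified to "this attachment is reachable from this table".

```python
from collections import defaultdict
from typing import Dict, Set


class TransitGatewayModel:
    """Toy model of Transit Gateway route-table association and route installation."""

    def __init__(self) -> None:
        self.association: Dict[str, str] = {}                   # attachment -> route table
        self.reachable: Dict[str, Set[str]] = defaultdict(set)  # route table -> destinations

    def associate(self, attachment: str, table: str) -> None:
        # Each attachment is associated with exactly one route table, which
        # decides where its traffic may go.
        self.association[attachment] = table

    def add_routes(self, attachment: str, table: str) -> None:
        # Propagation (or static routes, for peering attachments) makes the
        # attachment a reachable destination in the given table.
        self.reachable[table].add(attachment)

    def can_reach(self, src: str, dst: str) -> bool:
        table = self.association.get(src)
        return table is not None and dst in self.reachable[table]


if __name__ == "__main__":
    tgw = TransitGatewayModel()
    for env in ("prod", "nonprod"):
        for att in (f"{env}-vpc", f"{env}-vpn", f"{env}-tc-peering"):
            tgw.associate(att, f"{env}-rt")
            tgw.add_routes(att, f"{env}-rt")
    print(tgw.can_reach("prod-vpn", "prod-tc-peering"))  # True
    print(tgw.can_reach("prod-vpn", "nonprod-vpc"))      # False
```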

Connected VPC

VMware Cloud on AWS customers can seamlessly integrate their SDDCs with native AWS services. During the SDDC onboarding process, customers establish high-bandwidth, low-latency connectivity to a designated VPC, referred to as the connected VPC. This connectivity is established using cross-account elastic network interfaces (X-ENIs) between the NSX Edge appliance in the VMware-managed shadow account and a subnet within the connected VPC in the customer-managed account.

Data transfer between the SDDC and the connected VPC does not incur egress charges as long as the SDDC and the destination native services reside in the same AWS Availability Zone (AZ).

A single consolidated connected VPC can also simplify sharing native services with multiple SDDCs. Virtual machine (VM) workloads running in different SDDCs that access native services hosted in the connected VPC use their X-ENIs instead of traversing VMware Transit Connect, improving operational efficiency and minimizing data transfer costs. We recommend reserving a /26 CIDR block in the X-ENI subnet for each SDDC, as discussed in this whitepaper.
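When several SDDCs share one connected VPC, it helps to plan the /26 reservations up front. This sketch carves one /26 per SDDC, in order, from a larger IPv4 range using Python's standard `ipaddress` module. The address range and SDDC names are hypothetical, and the sketch ignores per-AZ subnet placement.

```python
import ipaddress
from typing import Dict, List


def allocate_xeni_blocks(address_range: str, sddcs: List[str]) -> Dict[str, ipaddress.IPv4Network]:
    """Carve one /26 per SDDC, in order, from a planned X-ENI address range."""
    network = ipaddress.IPv4Network(address_range)
    # subnets() is a generator: it raises ValueError on first use if the
    # range is smaller than a /26.
    blocks = network.subnets(new_prefix=26)
    allocation = {}
    for name in sddcs:
        block = next(blocks, None)
        if block is None:
            raise ValueError(f"{address_range} has no /26 left for {name}")
        allocation[name] = block
    return allocation


if __name__ == "__main__":
    for sddc, block in allocate_xeni_blocks("10.50.0.0/24", ["prod-sddc", "dr-sddc"]).items():
        print(sddc, block)
```

A /24 holds exactly four /26 blocks, so a fifth SDDC in the same range is rejected rather than silently overlapping.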

In the following example, we have a Production SDDC deployed in AZ1 and a Disaster Recovery (DR) SDDC deployed in AZ2. Both SDDCs are connected to the same connected VPC via X-ENIs attached to separate subnets in the AZ where each SDDC resides.

As a best practice, we have enabled multi-AZ support on native services such as Amazon FSx and Amazon Relational Database Service (Amazon RDS) in the connected VPC. This ensures that VM workloads can still access these services via the X-ENI after failing over to the DR SDDC during an AZ failure. The active instances of these native services should also be placed in the same AZ as the Production SDDC to avoid cross-AZ data transfer charges during normal operations.

Figure 12 – Using a shared connected VPC with multiple SDDCs.
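The placement guidance can be checked mechanically: during normal operations each service's active instance should share an AZ with the Production SDDC, and after an AZ failure a multi-AZ service should fail over into the DR SDDC's AZ. This sketch models that reasoning. The AZ labels and service entries are hypothetical, and failover is simplified to "the standby becomes active".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NativeService:
    name: str
    active_az: str
    standby_az: str = ""  # empty when the service is single-AZ


def service_state(svc: NativeService, sddc_az: str, failed_az: Optional[str] = None) -> str:
    """Classify access from the running SDDC's AZ to a service's active instance."""
    active = svc.active_az
    if active == failed_az:
        if not svc.standby_az:
            return "unavailable"
        active = svc.standby_az  # simplified multi-AZ failover
    return "same AZ" if active == sddc_az else "cross-AZ (data transfer charges)"


if __name__ == "__main__":
    fsx = NativeService("Amazon FSx", active_az="az1", standby_az="az2")
    print(service_state(fsx, sddc_az="az1"))                  # normal operations
    print(service_state(fsx, sddc_az="az2", failed_az="az1"))  # after DR failover
```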

Additional Considerations

AWS Direct Connect is the foundational pillar for establishing hybrid connectivity to your VMware Cloud on AWS and cloud-native environments. We strongly recommend using connections from more than one AWS Direct Connect location to ensure resiliency against device or colocation failure. Learn more about recommended topologies and best practices to achieve various levels of resiliency using AWS Direct Connect.

If you need low-latency access to critical services such as Amazon Aurora, or run I/O-intensive workloads such as backups from SDDCs to Amazon Simple Storage Service (Amazon S3), we recommend deploying these services in, or accessing them through, the connected VPC to improve application performance and optimize data transfer costs.

With the recent data transfer price reduction, cross-AZ data transfer within the same AWS region for AWS PrivateLink, AWS Transit Gateway, and AWS Client VPN is now free of charge. This further reduces costs when transferring data between SDDCs, or when accessing native services across different AZs, via VMware Transit Connect or AWS Transit Gateway.

Summary

In this post, I have discussed VMware Cloud on AWS hybrid network design patterns and considerations for interconnecting your SDDCs with on-premises and AWS services by leveraging AWS networking constructs.

To learn more, we recommend reviewing these additional resources: