AWS Partner Network (APN) Blog

Migrating Applications from Monolithic to Microservice on AWS

By Kautham Dhanasekaran, Solutions Architect at Tech Mahindra

By Saurabh Shrivastava, Partner Solutions Architect at AWS

By Vivek Raju, Manager, Global Integrator SA at AWS


As cloud becomes the new normal, many businesses want to use its potential to improve their customer experience. Amazon Web Services (AWS) helps businesses migrate to the cloud and provides best practices for the modernization of their applications.

Organizations all around the world are using the breadth and depth of AWS services to become more cloud-native. Telia used the AWS platform to modernize their customer information management (CIM) platform, moving it from a monolithic to a microservices architecture for flexibility and scalability. Telia is a Europe-based telecom provider with 20,000 employees serving millions of customers across the globe.

This post is about Telia’s journey to the cloud and how Tech Mahindra helped them with cloud migration and created a cloud-native architecture. Tech Mahindra is an AWS Partner Network (APN) Advanced Consulting Partner and member of the AWS Managed Services Provider (MSP) Partner Program.

Telia was hosting its CIM platform in an on-premises data center in Sweden. They had the following challenges:

- Large, monolithic application with reliability and performance issues.

- Increased blast radius due to the tightly coupled architecture, where an issue in one component could take down the entire system.

- Dependency on costly, third-party licensed applications.

- More downtime due to the increase in maintenance cycles.

- Tedious manual deployments for new-feature releases.

In this post, we describe how Telia Denmark modernized their CIM application by migrating to AWS using a containerized microservices architecture.

Overview

Each of Telia’s application components was re-architected to a microservices framework and containerized using AWS Fargate for Docker deployment. Tech Mahindra used AWS Database Migration Service (AWS DMS) to migrate the database from on-premises Oracle to cloud-native Amazon Aurora, which helped Telia save costs on licensing.

Tech Mahindra also set up a continuous integration and continuous deployment (CI/CD) pipeline to automate the deployment process and reduce application downtime. In the following sections, you will learn about the overall architecture and how Telia achieved cloud-native architecture on AWS.

Application Architecture

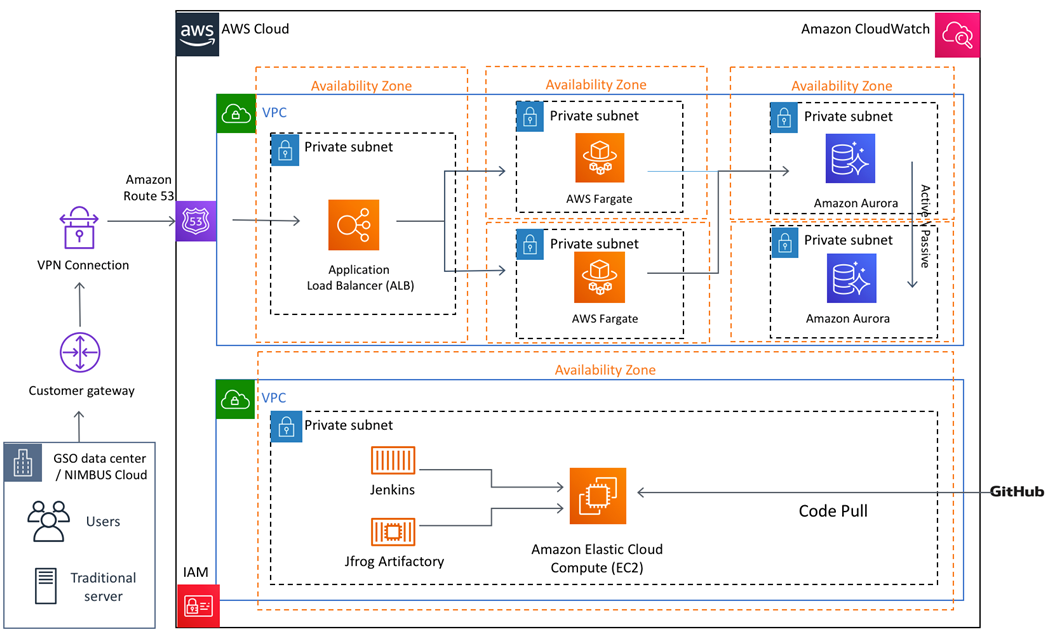

The following diagram shows the application architecture after migrating to AWS and containerizing into microservices architecture:

The following AWS services and features now host components of Telia’s new CIM microservice architecture:

- AWS VPN establishes a secure and private tunnel from the on-premises data center to the AWS global network.

- Amazon Route 53 is a Domain Name System (DNS) service, which routes global traffic to the application using Elastic Load Balancing.

- Amazon Virtual Private Cloud (Amazon VPC) sets up a logically isolated, virtual network where the application can run securely.

- Application Load Balancer is a product of Elastic Load Balancing, which load balances HTTP/HTTPS applications and uses layer 7-specific features, like port and URL prefix routing for containerized applications.

- Amazon Elastic Container Service (Amazon ECS) is a container orchestration service that supports Docker containers to run and scale containerized applications on AWS.

- AWS Fargate is a compute engine for Amazon ECS that allows running containers without having to manage servers or clusters. Microservices are deployed as Docker containers in the Fargate serverless model.

- Amazon Elastic Container Registry (Amazon ECR) is integrated with Amazon ECS as a fully managed Docker container registry that makes it easy to store, manage, and deploy Docker container images. This is used as a private repository to host the Docker images built for the application.

- Amazon Aurora is a relational database compatible with MySQL and PostgreSQL, used as a database for the CIM platform migration.

- AWS DMS migrates on-premises Oracle databases to cloud-native Aurora databases.

- Amazon CloudWatch is a monitoring and management service used to monitor the entire CIM platform and store application logs for analysis.

- Amazon Elastic Compute Cloud (Amazon EC2) provides compute capacity in the cloud, and was used to host Jenkins and JFrog Artifactory in containers for the CI/CD pipeline.

- AWS Identity and Access Management (IAM) manages access to AWS services and resources securely.

In the rest of this post, we describe how each component played a role in modernizing Telia’s CIM application.

Application Security and Compliance

For any application, security is the first priority. To secure connectivity between the on-premises data center and AWS Cloud, the Tech Mahindra team set up VPN tunnels. Site-to-Site VPN extends the data center to the AWS Cloud by connecting it to a VPC, and AWS VPN establishes secure and private sessions with IP security (IPsec) and Transport Layer Security (TLS) tunnels.

Amazon VPC is configured to provide isolated network boundaries to host the resources and restrict network access. The team created multiple private subnets to host applications with no open internet endpoint, which reduced the blast radius for any unforeseen security incidents. VPC security groups were configured at the instance level to restrict port and protocol access to corporate networks only.

An additional layer of network security rules was added using a network access control list (network ACL), which acts as a firewall for controlling traffic in and out at the subnet level.
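The security group behavior described above can be sketched as an allow-list check: traffic is denied unless a rule explicitly permits it. This is a minimal illustration only. The CIDR range and port below are hypothetical placeholders, since the post does not list the actual values Telia used.

```python
from ipaddress import ip_address, ip_network

# Hypothetical values: the post does not state the real corporate ranges or ports.
CORPORATE_CIDRS = [ip_network("10.20.0.0/16")]
ALLOWED_TCP_PORTS = {443}


def ingress_allowed(source_ip: str, port: int) -> bool:
    """Return True only if the source is in a corporate range and the port is open.

    Mirrors the allow-list model of a security group: anything not
    explicitly permitted is denied.
    """
    addr = ip_address(source_ip)
    in_corporate_range = any(addr in net for net in CORPORATE_CIDRS)
    return in_corporate_range and port in ALLOWED_TCP_PORTS
```

With these placeholder rules, a request from `10.20.5.7` on port 443 is allowed, while the same address on port 22 or an outside address on port 443 is rejected.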

The databases and Docker containers are hosted in private subnets and deployed across multiple Availability Zones (AZs) for high availability. To secure all resources, any inbound traffic is blocked and outbound traffic is allowed only through the VPC NAT gateway.

To satisfy audit and compliance needs, the team configured AWS CloudTrail, which provides event history of AWS account activity. The activity includes actions taken through the AWS Management Console, AWS Command Line Interface (CLI), or AWS Software Development Kits (SDK). They also enabled AWS Config to assess, audit, and evaluate the configurations of AWS resources.

Application Migration and Containerization

During application discovery, Telia’s main goals were to revamp its monolithic application and to improve the customer experience by reducing application downtime and increasing performance. Other areas to address were the tedious deployment process and the dependencies on costly third-party licensed applications.

Tech Mahindra suggested using this migration opportunity to rebuild the application in a cloud-native architecture. It was also an opportunity to transform the current monolithic architecture to a loosely coupled, microservices-based architecture.

The team decoupled the new architecture into the following microservices:

- CIM service stores, fetches, and updates customer information.

- Customer information bridge service connects the on-premises database to the CIM service, which was built on the cloud-native database Aurora PostgreSQL.

- Search service indexes customer information for easier record retrieval.

- Customer identification service integrates the third-party customer identification system to verify unique, government-issued identities.

- Subscription service fetches customer subscriptions.

- Device service fetches customer device information.

The microservices were containerized using a serverless model with Fargate, which can run Docker containers without having to manage any servers or clusters. Amazon ECS was used for Docker container orchestration and ECR to store, manage, and deploy Docker container images.

Route 53 was configured to provide CIM application accessibility for customers and on-premises applications. After getting a customer request, Route 53 routes traffic to the Application Load Balancer, which distributes the workload between multiple Fargate Docker containers. All of the application logs are captured and stored in CloudWatch for analysis and alerts.
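One way an Application Load Balancer can direct requests to separate microservices is URL prefix routing. The sketch below illustrates the idea in plain Python. The path prefixes and target names are hypothetical, because the post names the services but not their URLs, and the rules are simply checked in the order listed, which is a simplification of how listener rule priorities work.

```python
from typing import Optional

# Hypothetical prefixes and target names, checked in the order listed.
ROUTES = {
    "/cim": "cim-service",
    "/bridge": "customer-information-bridge-service",
    "/search": "search-service",
    "/identify": "customer-identification-service",
    "/subscriptions": "subscription-service",
    "/devices": "device-service",
}


def route(path: str) -> Optional[str]:
    """Return the target for the first prefix that matches the request path."""
    for prefix, target in ROUTES.items():
        # Match the prefix itself or anything beneath it, but not "/cimx".
        if path == prefix or path.startswith(prefix + "/"):
            return target
    return None  # no rule matched; a real listener would apply its default action
```

For example, `route("/devices/123")` resolves to the device service, while an unknown path returns `None`.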

The Application Load Balancer was configured with Fargate for high availability and performance. Fargate uses automatic scaling to achieve elasticity and scale resources in or out as the workload requires. When the load breaches the memory utilization threshold of 90 percent, horizontal scaling takes place and launches one more Docker container, attaching it to the load balancer.

When memory utilization falls below 50 percent, the service scales in automatically and removes the corresponding containers gracefully from the load balancer.
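The scaling rule described here can be expressed as a small decision function. This is a sketch of the logic only, not the actual scaling policy configuration. The 90 and 50 percent thresholds come from the text, while the minimum and maximum container counts and the one-container scale-in step are assumptions made for illustration.

```python
SCALE_OUT_THRESHOLD = 90.0  # percent memory utilization, from the text
SCALE_IN_THRESHOLD = 50.0   # percent memory utilization, from the text


def desired_container_count(current: int, memory_pct: float,
                            min_count: int = 1, max_count: int = 10) -> int:
    """Decide how many containers should run after one evaluation.

    min_count and max_count are hypothetical bounds; the post does not
    state the limits used for Telia's service.
    """
    if memory_pct > SCALE_OUT_THRESHOLD:
        # Scale out: launch one more container, up to the assumed ceiling.
        return min(current + 1, max_count)
    if memory_pct < SCALE_IN_THRESHOLD:
        # Scale in: remove one container, never dropping below the floor.
        return max(current - 1, min_count)
    return current  # between the thresholds, leave the service unchanged
```

The gap between the two thresholds keeps the service from oscillating: a load that sits at 70 percent triggers neither action.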

Database Migration

The source database was an on-premises Oracle database, and the target database deployed on AWS was Amazon Aurora with PostgreSQL compatibility. Tech Mahindra used AWS DMS for this heterogeneous database migration, along with automation scripts for accurate and accelerated migration execution to the cloud.

After the data was migrated to Amazon Aurora PostgreSQL with AWS DMS, they used custom PL/SQL scripts to handle the schema changes. They also used Oracle Advanced Queuing to transfer the incremental data load to the target database.

Telia had over 70 million records in its current system that had to be moved within a short span of time. The Tech Mahindra team migrated those records to AWS through an extensive data migration approach using AWS DMS.

For this migration, some of the key challenges encountered included:

- Heterogeneous database migration.

- Schema changes.

- Huge volume of data to be migrated in a short time.

- Case-sensitive nature of Aurora PostgreSQL.

The migration approach included moving the necessary data from the existing system, matching the schema of the target DB engine, and cleansing and transforming the target data through automation scripts.
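One common way the case-sensitivity challenge shows up is in identifier names: Oracle stores unquoted identifiers in uppercase, while PostgreSQL folds unquoted identifiers to lowercase, so an uppercase name carried over verbatim has to be double-quoted in every query. The sketch below shows one possible transformation step, lowercasing names for the target. It is an illustration under that assumption, not the automation script Tech Mahindra used, and the column names in the usage note are hypothetical.

```python
from typing import Dict, Iterable


def to_postgres_identifier(oracle_name: str) -> str:
    """Strip surrounding double quotes and lowercase the identifier."""
    return oracle_name.strip('"').lower()


def build_rename_map(oracle_names: Iterable[str]) -> Dict[str, str]:
    """Map each source identifier to a lowercase target identifier.

    Raises ValueError if two source names differ only by case, because
    they would collide once lowercased.
    """
    mapping: Dict[str, str] = {}
    seen = set()
    for name in oracle_names:
        target = to_postgres_identifier(name)
        if target in seen:
            raise ValueError(f"case-only collision on {target!r}")
        seen.add(target)
        mapping[name] = target
    return mapping
```

For example, `build_rename_map(["CUSTOMER_ID", "FIRST_NAME"])` maps the two names to `customer_id` and `first_name`, which can then be queried in PostgreSQL without quoting.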

Set Up a CI/CD Pipeline

Reducing the deployment time was another major pain point that had to be addressed, and Tech Mahindra used an automated CI/CD pipeline to mitigate that.

To set up the CI/CD pipeline, the team used Jenkins for continuous integration and continuous deployment, with Maven for building. JFrog Artifactory stores Maven-built .jar files and is a universal binary repository manager where you can manage multiple applications, dependencies, and versions in one place.

Artifactory also enables you to standardize the way you manage package types across all applications developed in your company, no matter the code base or artifact type.

Amazon EC2 is used to host Jenkins and JFrog Artifactory in Docker containers. Running Jenkins in Docker containers allows more efficient use of the servers running Jenkins worker nodes. It also simplifies the configuration of the worker node servers. Using containers to manage builds allows the underlying servers to be pooled into a cluster. The Docker image is built with the .jar files, stored in Amazon ECR, and deployed in Amazon ECS as a Fargate deployment.
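The build-and-deploy flow above can be sketched as the ordered list of commands a pipeline job might run. The post does not show the actual Jenkins job, so this is an assumed outline: the registry, repository, cluster, and service names are hypothetical, and the final step assumes the ECS task definition references a mutable image tag such as `latest`. The function only builds the commands and does not execute them.

```python
from typing import List


def pipeline_commands(registry: str, repository: str, cluster: str,
                      service: str, tag: str = "latest") -> List[List[str]]:
    """Return the build, push, and deploy commands in execution order.

    Assumes Docker is already authenticated to the registry and that the
    task definition points at the same image tag.
    """
    image = f"{registry}/{repository}:{tag}"
    return [
        # 1. Build the .jar with Maven in non-interactive (batch) mode.
        ["mvn", "-B", "clean", "package"],
        # 2. Build the Docker image that packages the .jar.
        ["docker", "build", "-t", image, "."],
        # 3. Push the image to the private registry.
        ["docker", "push", image],
        # 4. Ask ECS to start a new deployment that pulls the pushed image.
        ["aws", "ecs", "update-service", "--cluster", cluster,
         "--service", service, "--force-new-deployment"],
    ]
```

Keeping the steps as data makes the order explicit and easy to log before a job hands each command to a shell or to `subprocess.run`.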

During a new deployment or release, the new Docker container is launched first in Fargate and attached to the Application Load Balancer. After it reaches a healthy state, the old Docker container is drained and, once all of its connections have closed, removed from the load balancer. This maintains zero downtime during deployments and releases.
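The ordering that makes this zero-downtime can be sketched as a small in-memory simulation: attach the new container, confirm it is healthy, and only then drain and detach the old one. The container names, health check, and connection counts are hypothetical stand-ins, and a real load balancer also stops sending new requests to a target while it drains, which this sketch does not model.

```python
from typing import Callable, Dict, List


def rolling_replace(targets: List[str], old: str, new: str,
                    is_healthy: Callable[[str], bool],
                    connections: Dict[str, int]) -> List[str]:
    """Swap `old` for `new` in `targets` and return a log of the steps taken."""
    log = []
    targets.append(new)
    log.append(f"attach {new}")
    if not is_healthy(new):
        # Failed health check: remove the new container, keep serving from old.
        targets.remove(new)
        log.append(f"detach {new} (unhealthy), keep {old}")
        return log
    log.append(f"drain {old}")
    while connections.get(old, 0) > 0:
        connections[old] -= 1  # stand-in for an in-flight request completing
    targets.remove(old)
    log.append(f"detach {old}")
    return log
```

At every point in the sequence at least one healthy container remains attached, which is what keeps the service reachable throughout the release.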

Putting it All Together

Telia used the AWS value proposition to improve performance and reduce their application downtime. They maximized their business benefit by hosting CIM on AWS and received the following benefits:

- Independent development of microservices, granular compute resource allocation (individual memory for each service), and reusability gained by re-architecting the CIM application using a microservices framework.

- Greatly improved application availability and reliability, as the application leverages core AWS services such as Elastic Load Balancing and AWS Auto Scaling.

- Successful migration of 70+ million data records in just 90 minutes from the on-premises database to the AWS Cloud.

- Automated deployment of microservices as Docker containers in Fargate in a single click with Jenkins.

- Significant performance improvement, as the application was designed to use load balancing and caching techniques.

- Addressing compliance and risk measures at scale using AWS best practices and recommendations incorporated with AWS Config and CloudTrail.
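To put the migration figure in the list above into perspective, the implied average throughput can be worked out directly. The calculation uses 70 million as the record count, so the result is a lower bound on the rate for the "70+ million" records reported.

```python
RECORDS = 70_000_000  # lower bound, from "70+ million data records"
MINUTES = 90          # migration window, from the text

# Average sustained rate over the whole window.
records_per_second = RECORDS / (MINUTES * 60)
print(f"{records_per_second:,.0f} records per second")  # prints "12,963 records per second"
```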

Conclusion

A business must continue evolving to improve its customer experience, both to satisfy customer needs and save on costs. Creating a serverless architecture provides true cloud-native architecture in which you only pay for what you use.

Businesses don’t need to worry about high availability and scalability when going serverless. Defining loosely coupled architecture with microservices reduces your blast radius and provides the ability to scale each component independently.

In this post, you learned about different AWS components to help develop a cloud-native architecture, and how traffic flows from Amazon Route 53 to actual applications through Elastic Load Balancing, which handles the load by distributing traffic across the server fleet.

You also learned how to ensure network security using Amazon VPC, and restrict access using security groups and network ACLs. For audit and monitoring, you can use AWS CloudTrail, CloudWatch, and AWS Config.

You learned how to use containers in architecture to build microservices for your application and automatically scale the load between them. Also, you saw how building a containerized CI/CD pipeline helps to achieve zero downtime during application deployment and maintenance. AWS DMS is a great tool to migrate on-premises databases to more cloud-native databases like Amazon Aurora and accelerate the overall migration process.


Tech Mahindra – APN Partner Spotlight

Tech Mahindra is an AWS Managed Service Provider. They offer innovative and customer-centric IT services that connect across a number of technologies to deliver tangible business value and experiences to customers.
