AWS Partner Network (APN) Blog

How HCLTech Centralized a Customer’s Log Management Solution Within a Hybrid Environment

By Fang Wang, AWS Cloud Architect – HCLTech

By Bobby Hallahan, Sr. Specialist Solutions Architect – AWS

By Rajesh Tailor, Sr. Partner Solutions Architect – AWS

|

| HCL Technologies |

|

Many customers operate in a hybrid environment with on-premises infrastructure interconnected with a cloud provider’s infrastructure.

Customers encounter challenges when multi-tiered systems experience issues or outages, followed by a root cause analysis to be executed by multiple operational teams.

This process can be streamlined and simplified by creating a centralized log management solution on Amazon Web Services (AWS) that allows customers to collect, analyze, and display logs in near real time.

This post details how HCLTech used the AWS Centralized Log Management Reference Architecture, and discusses how HCLTech removed the requirements for Amazon Kinesis Data Streams. We’ll also explore how HCLTech used Amazon Kinesis Data Firehose to stream from an Amazon CloudWatch Logs destination in a centralized logging account.

HCLTech’s main priority in this engagement, in addition to reducing costs, was to improve operations for the customer by placing logs in a single location. The team at HCLTech was also focused on simplifying analysis and operational tasks, as well as providing a secure storage area for log data.

HCLTech is an AWS Premier Tier Services Partner and Managed Service Provider (MSP) with AWS Competencies in DevOps, Migration, SAP, Mainframe Migration, and Storage.

Solution Overview

HCLTech’s centralized log management solution uses AWS managed services to collect Amazon CloudWatch Logs from multiple accounts and AWS Regions.

Through cross-account subscription to a CloudWatch Logs destination in the same region in the centralized logging account, HCLTech’s solution is able to stream logs into an Amazon Kinesis Data Firehose delivery stream, either in the same region or cross-region, and then save the logs into an Amazon Simple Storage Service (Amazon S3) bucket.

An AWS Lambda function is triggered by S3 PUT Events to read and parse the log data. It also ingests processed data as indices into Amazon OpenSearch Service, which contains a visualization tool called Kibana that can perform analysis, custom visualization and dashboards, alert configuration, anomaly detection, and reporting.

Figure 1 – Centralized log management solution with AWS.

Working with AWS Architecture on Centralized Logging

The AWS centralized logging solution is already a robust solution for managing logging with a centralized account.

HCLTech improved on this solution, and here are the components that changed:

- Both Amazon Kinesis Data Streams and Kinesis Data Firehose support cross-account and cross-region subscription. However, for this solution there is no need to use Kinesis Data Streams. The target of the CloudWatch Logs destination is a Kinesis Data Firehose delivery stream, whereas the AWS reference architecture also uses Kinesis Data Streams.

- Kinesis Data Firehose streams logs directly to S3 buckets without requiring any additional processing. Lambda functions are triggered from S3 PUT Events to read and parse the logs, and ingest indices into Amazon OpenSearch Service, which provides the following benefits:

- Error messages from OpenSearch ingestion appear directly into CloudWatch Logs for Lambda, making this a simple method to troubleshoot log ingest.

- Data can stay in S3 buckets and Amazon S3 Glacier for long-term retention, making historical data available for auditing purposes. In HCLTech’s configuration, retention in Amazon OpenSearch Service and S3 are the same, and data stays in S3 Glacier for long retention. HCL uses “S3 Glacier Restore Completed” events to trigger a separate Lambda function to create a special index into OpenSearch just for auditing purposes.

- Through the combination of S3 and Lambda, HCLTech’s solution provides the flexibility to ingest into other security information and event management (SIEM) solutions if Amazon OpenSearch Service is not to be used. For example, with the Splunk add-on data can be ingested from S3 into Splunk.

- HCLTech’s customer used Azure AD as their identity provider (IdP) for this solution, which is why HCLTech leveraged Azure AD as the identity provider for Kibana. However, customers can use other IdPs through the Security Assertion Markup Language (SAML) 2.0 standard; for example, you can connect Okta to AWS Single Sign-On (AWS SSO).

Approach

HCLTech’s customer needed to ingest logs from the following sources:

- Application logs and Amazon Elastic Compute Cloud (Amazon EC2) system logs generated on EC2 instances collected through Amazon CloudWatch Agent.

- AWS services that generate logs directly to CloudWatch log groups.

- For services like Elastic Load Balancing that don’t write directly to CloudWatch Logs, a Lambda function can be used to write logs into a CloudWatch log group. Read the Sending Amazon CloudFront standard logs to CloudWatch logs for analysis blog post for more information.

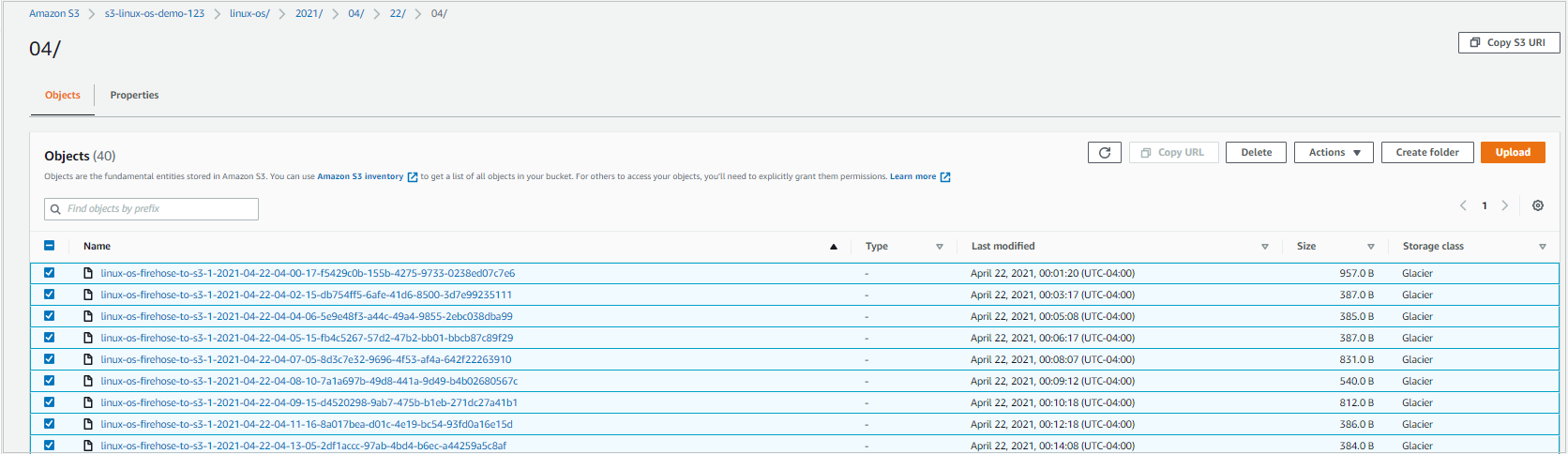

HCLTech introduced the ability to retrieve offline data for auditing purposes by configuring S3 to move data onto Amazon S3 Glacier after six months. If requested, the solution can retrieve historical data for a selected date and time in S3 buckets where the storage class is now S3 Glacier.

HCLTech’s customers are able to select the objects under that time range and initiate a restore.

Figure 2 – Amazon S3 Glacier objects.

After a restore is complete, a Lambda function is triggered by “Glacier Restore Completed” events. This function inserts an audit index into Amazon OpenSearch Service, which customers can use when accessing Kibana to retrieve log information for the new audit index.

This implementation of the centralized log management solution allows data to be searched based on account ID and regions. CloudWatch Logs are able to pass account ID information, and the log management solution uses the account name and region being passed as part of the log group subscription.

Here’s an example for a log group called “your-index-logs” on account “ClientDevAccount01” in the “eu-west-1” region. The log management solution combines the “ES Index” + “Account Name” + “Region Name” and creates a subscription filter; for example, “your-index-ClientDevAccount01-eu-west-1.” A Lambda function then processes the information.

Below is the example:

HCLTech’s log management solution incorporates S3 and Amazon S3 Glacier to meet long-term retention requirements in a cost-effective manner. S3 combined with Lambda underpin the solution as core services for Amazon OpenSearch Service.

To be cost effective, the log management solution takes advantage of OpenSearch ultrawarm nodes, while implementing the “hot-warm-delete” index state management policies to facilitate the rollover of stages. Data remains in the hot stage for seven days, while using normal data nodes with Amazon Elastic Block Store (Amazon EBS).

A move to the warm stage until retention is then reached to delete the data. During the warm stage, data is saved in ultrawarm Amazon OpenSearch Service data nodes, which use S3 storage to provide a cost-effective and highly available solution for longer-term retention.

Introducing fault tolerance was a key requirement for HCLTech’s solution. Regardless of faults originating from Lambda processing or connections to Amazon OpenSearch Service, the data will always reside in S3 buckets, allowing customers to re-process the data and ingest into OpenSearch.

Conclusion

In this post, we demonstrated how HCLTech was able to iterate on the AWS Centralized Log Management solution to provide their customers a cost-effective and fault-tolerant solution.

AWS customers can use HCLTech’s solution to send cross-account, cross-region traffic to a Centralized Log Management account, and process the log data that remains in Amazon S3 buckets and is visible through Kibana.

HCLTech – AWS Partner Spotlight

HCLTech is an AWS Premier Tier Services Partner and MSP. With a dedicated cloud-native business unit, HCLTech builds and provides enterprise cloud computing solutions on the AWS platform.