AWS Partner Network (APN) Blog

How N-iX Developed an End-to-End Big Data Platform on AWS for Gogo

By Salim Tutuncu, Partner Solutions Architect, Data and Analytics – AWS

By Rostyslav Fedynyshyn, Head of Data and Analytics Practice – N-iX


Gogo is a global provider of broadband connectivity products and services for business aviation. Its mission is to make planes fly smarter with inflight entertainment, Wi-Fi, email, text and talk, and cockpit data so the company’s aviation partners perform better and their passengers travel happier.

Gogo needed a qualified engineering team to migrate its solutions fully to the cloud, build a unified data platform, and optimize the speed of its inflight internet.

The N-iX team developed a data platform for Gogo based on Amazon Web Services (AWS) that aggregates data from over 20 different sources using Apache Spark on Amazon EMR. N-iX also built a data lake from scratch for collecting data from all sources in one place.

N-iX is an AWS Advanced Tier Services Partner with the Data and Analytics Competency and Amazon EMR service delivery specialization. Since 2002, N-iX has helped businesses across the globe expand their engineering capabilities and develop successful software products.

Customer Challenge

To ensure flawless operation of all systems and the best speed for the inflight internet, Gogo needed to collect and analyze large amounts of data. This included structured data like system uptime and latency, and unstructured data like the number of concurrent Wi-Fi sessions and video views during a flight.

However, the data Gogo gathered was stored on premises across many different sources, and the client’s main goal was to build and support a unified data platform in the cloud. This would enable Gogo to process its big data more efficiently and enhance customer experience based on the analysis results.

The new platform built by N-iX allows Gogo to perform service-level agreement (SLA) calculations with airlines and avoid costs it might otherwise pay in penalties.

Migrating to AWS

Gogo decided to engage N-iX, whose expertise includes various cloud migration approaches such as rehosting, refactoring, and rebuilding, as well as the development of cloud solutions from scratch. N-iX also has teams of qualified big data and business intelligence (BI) engineers.

The decision to migrate to AWS was easy for the client because of the many benefits it offers. First of all, Gogo’s legacy solution was hard to maintain. The cloud, in turn, provides flexibility and reliability.

Second, the old solution required high license costs, and moving to the cloud allowed the client to reduce total cost of ownership (TCO). As AWS provides the core staples of technology infrastructure, it enabled the client to focus on business rather than infrastructure.

N-iX’s cooperation with Gogo’s Unified Data Platform (UDP) team is organized in a way where some responsibilities are split between the client’s teams and N-iX’s teams. The overall management and operation of Gogo’s AWS account and some AWS-managed services are administered by the client.

These accounts and services are provided to N-iX’s teams with the least privilege security principle in mind, but with enough privilege granted to build a full-fledged solution. N-iX and Gogo teams cooperate and iterate to set up or tune AWS services when it’s required for the project scope.

Solution Overview

To enable the processing and storage of a considerable amount of data, N-iX has performed a complete migration of Gogo’s solution to the cloud. N-iX built a unified data platform (on top of AWS with Hadoop and Spark technologies) that collects and aggregates all of the data from more than 20 different sources.

There are two main directions on the project:

Ingestion and Parsing of Airborne Logs

The first direction is the ingestion and parsing of airborne logs, as the team receives a colossal number of logs from the planes, up to 2 TB a day. The task was to develop a flexible parser that would cover most of the client’s cases.

For this purpose, N-iX needed to normalize logs in a single structure (a table or a set of tables) so it would become a data source for users.

To promote the adoption of Amazon Kinesis by the client, N-iX used data compression to reduce costs and stay within the maximum record size of 1 MB. The team also implemented the pub/sub pattern for data transfer.
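The compression and grouping described above can be sketched roughly as follows. This is a minimal illustration, not N-iX's parser: the function names `pack_log_lines` and `unpack_batch`, the JSON-plus-gzip encoding, and the batching strategy are all assumptions chosen for clarity. Only the 1 MB record limit comes from the text.

```python
import gzip
import json

# Per-record size limit mentioned in the post (1 MB).
MAX_RECORD_BYTES = 1024 * 1024


def _compress(lines):
    """Serialize a list of log lines to JSON and gzip the result."""
    return gzip.compress(json.dumps(lines).encode("utf-8"))


def pack_log_lines(lines, max_bytes=MAX_RECORD_BYTES):
    """Group log lines into gzip-compressed batches that each fit one record.

    Hypothetical helper: it recompresses the growing batch on every line,
    which is simple but not efficient. A single line that is larger than
    max_bytes after compression is still emitted as its own batch.
    """
    batches, current = [], []
    for line in lines:
        candidate = current + [line]
        if current and len(_compress(candidate)) > max_bytes:
            # Adding this line would overflow, so close the current batch.
            batches.append(_compress(current))
            current = [line]
        else:
            current = candidate
    if current:
        batches.append(_compress(current))
    return batches


def unpack_batch(payload):
    """Inverse of pack_log_lines for one batch (what a consumer would run)."""
    return json.loads(gzip.decompress(payload).decode("utf-8"))
```

Each returned batch could then be sent as the payload of one stream record, with the consumer calling `unpack_batch` to recover the original lines.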

A fleet of Amazon Elastic Compute Cloud (Amazon EC2) instances processes the continuous flow of logs and then sends the log data to Amazon Kinesis Data Firehose, which saves the data in Amazon Simple Storage Service (Amazon S3). This is the main Kinesis stream, where the average transaction volume ranges from 200 MB to 1 GB per minute of compressed and grouped data.

Additionally, Amazon Kinesis collects the parsed data, and then Spark streaming on Amazon EMR reads the messages and saves the data in S3. The N-iX team streams over 90 shards with about 22 TB of data processed per month. In general, there are around 140 available shards.

N-iX configured Amazon Kinesis Data Streams to use the optimal number of shards per app, per user, and per consumer and producer, so there was no throttling. The team monitors the Iterator Age metric and other metrics that show the lag of Kinesis Data Streams through the Kinesis user interface (UI).
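As a rough illustration of how a shard count can be sized to avoid throttling, the sketch below uses the standard per-shard quotas of Kinesis Data Streams in provisioned mode (1 MB/s or 1,000 records/s for writes, 2 MB/s for reads shared by consumers without enhanced fan-out). The function name `required_shards`, the `headroom` parameter, and the sample inputs are assumptions for illustration and do not reflect how N-iX actually sized Gogo's streams.

```python
import math

# Per-shard quotas for Kinesis Data Streams in provisioned mode.
WRITE_MB_PER_SEC_PER_SHARD = 1.0
WRITE_RECORDS_PER_SEC_PER_SHARD = 1000
READ_MB_PER_SEC_PER_SHARD = 2.0  # shared by consumers without enhanced fan-out


def required_shards(ingest_mb_per_sec, records_per_sec, consumers=1, headroom=0.25):
    """Estimate the shard count that keeps producers and consumers unthrottled.

    Hypothetical sizing rule: take the tightest of the three per-shard limits
    and add a safety margin (headroom) for traffic spikes.
    """
    by_write_bytes = ingest_mb_per_sec / WRITE_MB_PER_SEC_PER_SHARD
    by_write_records = records_per_sec / WRITE_RECORDS_PER_SEC_PER_SHARD
    # Every consumer reads the full stream, so read load scales with consumers.
    by_read_bytes = ingest_mb_per_sec * consumers / READ_MB_PER_SEC_PER_SHARD
    tightest = max(by_write_bytes, by_write_records, by_read_bytes)
    return max(1, math.ceil(tightest * (1 + headroom)))


if __name__ == "__main__":
    # Illustrative inputs only: 10 MB/s ingest, 2,000 records/s, 3 consumers.
    print(required_shards(10, 2000, consumers=3))
```

With several consumers sharing a stream, read throughput rather than write throughput can become the limiting factor, which is one reason to size shards per consumer as well as per producer.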

Integration of a Variety of Data Sources

Another direction is the integration of a variety of data sources to build reporting and analytics solutions that bring actionable insights. N-iX built and supported the data lake on AWS using PySpark for extract, transform, load (ETL) processing.

N-iX’s engineers have made an end-to-end delivery pipeline—from the moment when the logs come from the equipment to the moment when they are entirely analyzed, processed, and stored in the data lake.

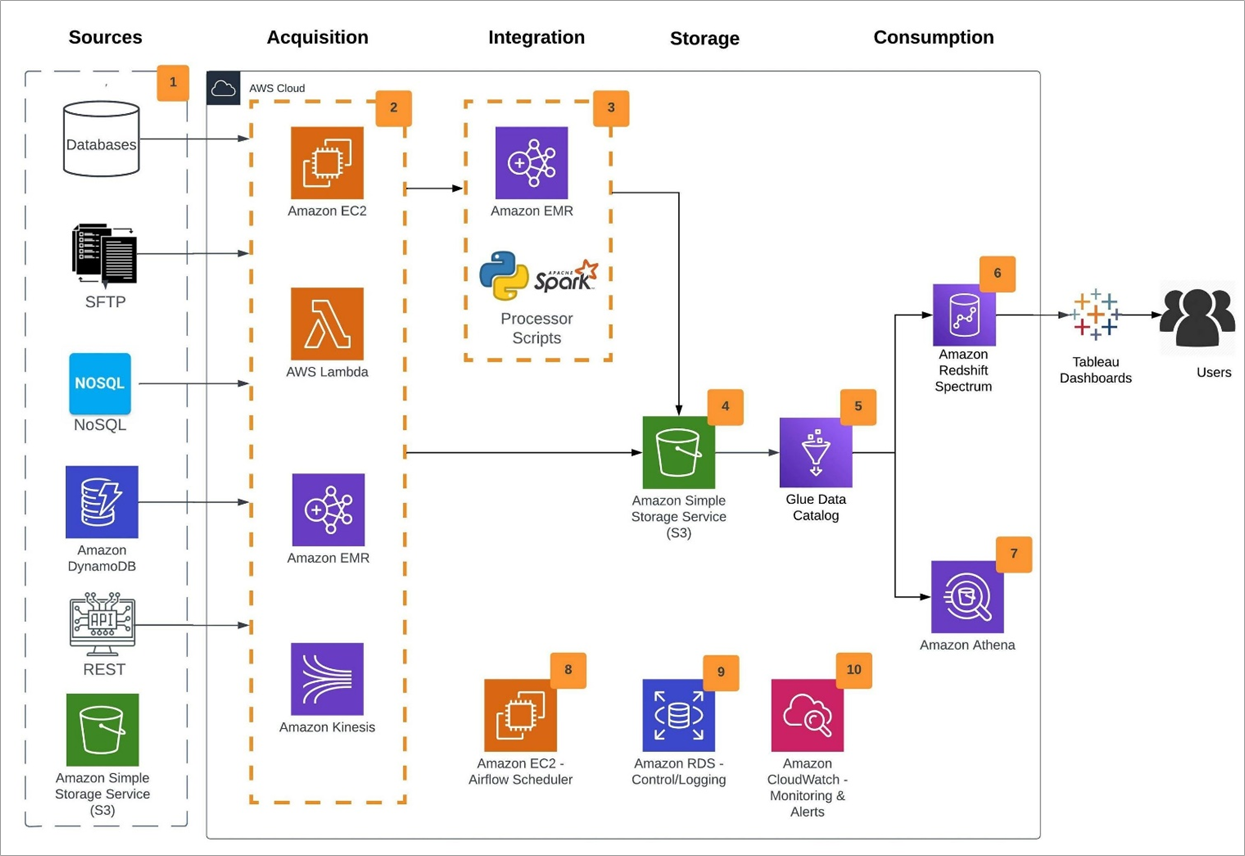

Figure 1 – Solution design.

The solution design contains the following blocks:

- Data is collected from multiple data sources across the organization.

- Based on the type of data source, Amazon EC2, AWS Lambda, and Amazon EMR are used to ingest the data into a data lake on AWS.

- Amazon EMR is used to transform, enrich, move, and replicate data across multiple data stores and the data lake.

- Amazon S3 is used as the data lake storage.

- AWS Glue Data Catalog is used to catalog databases and tables.

- Amazon Redshift Spectrum is used as a cloud data warehouse.

- Amazon Athena enables interactive querying, analyzing, and processing capabilities.

- Apache Airflow on Amazon EC2 is used to manage workflows.

- Amazon Relational Database Service (Amazon RDS) is used to manage ETL states and progress.

- Amazon CloudWatch is used to collect application logs and for alerting.

Application log tables and processing metadata are stored in Amazon RDS. N-iX builds long-running acquirers that run a Scala combinator library on Amazon EC2.

AWS Lambda functions are used to process the notifications, while Amazon S3 stores raw and processed files, dimension and fact tables, backups, and application logs.

Amazon DynamoDB stores data about processed input files. Amazon Redshift stores in-memory data warehouses and is also used as a data source for the reporting team.

N-iX chose Amazon Virtual Private Cloud (VPC) for cloud infrastructure as a service since it allows for building scalable and secure data transfer. Gogo uses Amazon Athena for data analytics, and Amazon EMR for data processing, namely for writing ETL in Spark and Spark Streaming.

N-iX uses Amazon Kinesis to transfer processed data from EC2 to S3. AWS Identity and Access Management (IAM) allows the solution to create and manage AWS users and groups and use permissions. Amazon CloudWatch collects the performance and operational data in the form of logs.

A Lambda function is triggered by using Amazon Simple Notification Service (SNS) notifications, while Amazon Simple Queue Service (SQS) manages message queues and is used by custom applications.
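A Lambda function subscribed to an SNS topic receives each notification inside an event whose `Records` entries carry the message under `Sns.Message`. The sketch below shows one way such a handler might unpack those notifications. The helper `extract_messages`, the assumption that messages are usually JSON, and the returned summary are illustrative and not taken from Gogo's codebase.

```python
import json


def extract_messages(event):
    """Pull the message body out of every SNS record in a Lambda event.

    Messages that parse as JSON objects are returned as dicts. Anything else
    is wrapped as {"raw": <text>} so downstream code sees one shape.
    """
    messages = []
    for record in event.get("Records", []):
        raw = record.get("Sns", {}).get("Message", "")
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            parsed = None
        messages.append(parsed if isinstance(parsed, dict) else {"raw": raw})
    return messages


def handler(event, context):
    """Hypothetical Lambda entry point for SNS-triggered processing."""
    messages = extract_messages(event)
    # Real processing (for example, starting an ETL step) would go here.
    return {"processed": len(messages)}
```

Keeping the parsing in a plain function makes it easy to exercise locally with a hand-built event before deploying.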

The N-iX team uses Jenkins as a continuous integration tool and Spinnaker as a deployment tool to deploy to the target environments: staging and then prod.

All parts of the system that are deployed and set up during the automatic deployment process use VPCs, AWS Availability Zones, and subnets that are pre-provisioned and defined by the customer.

Total Cost of Ownership Analysis

The N-iX team is responsible for the end-to-end development of the data platform.

After the architecture was defined and before implementation began, N-iX and the client agreed that the running costs of the solution are variable and directly depend on the amount of data being processed. This, in turn, depends on the activity of the airline business.

The Kinesis stream receives logs that come from aircraft. The more aircraft, the more data, and vice versa.

From the beginning of the collaboration, the N-iX team has been analyzing the costs of each service. For example, N-iX estimated the costs of Kinesis Data Streams based on the number of shards and the volume of streaming data, and the costs of EMR clusters based on the size of the data and the number of consumers.
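An estimate of this kind for a provisioned Kinesis data stream can be sketched as a function of shard count and record volume. The billing dimensions used here (shard hours and PUT payload units of 25 KB) follow the provisioned-mode pricing model. The function name `monthly_stream_cost` is hypothetical, and the rates are deliberately left as caller-supplied parameters because actual prices vary by Region and over time.

```python
import math

# In provisioned mode, each record is billed in 25 KB PUT payload units.
PUT_PAYLOAD_UNIT_BYTES = 25 * 1024


def monthly_stream_cost(
    shards,
    records_per_month,
    avg_record_bytes,
    shard_hour_rate,
    put_unit_rate_per_million,
    hours_per_month=730,
):
    """Estimate the monthly cost of one provisioned stream.

    Both rates must be supplied by the caller from the current price list.
    No real prices are hard-coded in this sketch.
    """
    shard_cost = shards * hours_per_month * shard_hour_rate
    units_per_record = max(1, math.ceil(avg_record_bytes / PUT_PAYLOAD_UNIT_BYTES))
    total_units = records_per_month * units_per_record
    put_cost = total_units / 1_000_000 * put_unit_rate_per_million
    return shard_cost + put_cost
```

Because shard cost is fixed per hour while PUT cost grows with traffic, a model like this makes it visible how much of the bill tracks the number of aircraft sending logs.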

Every week, N-iX analyzes the costs of all AWS services on the project to identify how the client’s budget is spent and determine what can be changed and improved. The N-iX team also predicts infrastructure usage and reserves instances and computing power in advance.

Results and Benefits

N-iX developed the data platform on AWS that aggregates all data from over 20 different sources using Apache Spark on Amazon EMR.

The team built a data lake from scratch for collecting data from all sources in one place and delivered a solution to Gogo for measuring the availability and in-cabin performance of various devices, as well as for analyzing the operation of wireless access points and other equipment.

N-iX’s solutions allow Gogo to reduce operational expenses on penalties paid to airlines for poor performance of Wi-Fi services.

Lessons Learned

- The local state is a cloud anti-pattern. After migrating to AWS, Gogo gained the opportunity to focus more on applications thanks to the elasticity and availability of the solution.

- Asynchronous and event-driven patterns helped scale. The asynchronous invocation of AWS Lambda helped Gogo save money and time. When a Lambda function is invoked asynchronously, the caller does not wait for a response from the function code. Lambda can also be configured to handle errors and send invocation records to a downstream resource to chain together the application components. To configure the system, N-iX used metrics for function utilization and performance, invocations, concurrency, and provisioned concurrency. The event-driven pattern inherits the scalability and availability offered by managed services like Amazon S3 and AWS Lambda.

- Great management with Amazon Kinesis. As Kinesis is fully managed, there is no need to maintain or manage any infrastructure for running the streaming applications.
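To make the asynchronous invocation lesson concrete, here is a minimal sketch of invoking a Lambda function without waiting for its result. It relies on the `invoke` call of the boto3 Lambda client with `InvocationType="Event"`, for which Lambda returns HTTP status 202 once the event is queued. The helper name `invoke_async` and the function name in the usage note are hypothetical.

```python
import json


def invoke_async(lambda_client, function_name, payload):
    """Queue an asynchronous Lambda invocation and report whether it was accepted.

    lambda_client is expected to behave like boto3.client("lambda"). Passing it
    in as an argument keeps this helper testable without AWS credentials.
    """
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="Event",  # asynchronous: do not wait for the function result
        Payload=json.dumps(payload).encode("utf-8"),
    )
    # Lambda answers 202 (Accepted) for asynchronous invocations.
    return response["StatusCode"] == 202
```

In a real deployment this might be called as `invoke_async(boto3.client("lambda"), "parse-airborne-logs", {"key": "..."})`, where the function name and payload are placeholders. Failed events could then be routed to a dead-letter queue or an on-failure destination configured on the function.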

Conclusion

In this post, we shared a solution that uses AWS services to aggregate data from more than 20 sources and optimize the speed of the inflight internet.

One of the key benefits of this architecture is that you can process a large amount of data. N-iX’s solutions allow Gogo to reduce operational expenses on penalties paid to airlines for poor performance of Wi-Fi services.

N-iX – AWS Partner Spotlight

N-iX is an AWS Advanced Tier Services Partner with the Data and Analytics Competency. Since 2002, N-iX has helped businesses across the globe expand their engineering capabilities and develop successful software products.