CloudFront migration series (Part 1) – introduction

September 8, 2021: Amazon Elasticsearch Service has been renamed to Amazon OpenSearch Service.

This is the first post in a blog series about Amazon CloudFront migrations. CloudFront works with other AWS edge networking services to provide content delivery, perimeter security, end-user routing, and edge compute.

CloudFront is a Content Delivery Network (CDN), which places content closer to your end-users, improving performance and customer satisfaction. CloudFront supports functions and customization at the edge with edge computing features such as Lambda@Edge. End-user connection routing is established using the DNS service Amazon Route 53, while AWS WAF and AWS Shield provide perimeter security and help protect your site from DDoS and other malicious attacks.

This series guides you through real customer migration stories with expert commentary, architectural best practices, migration framework guidance, and common pitfalls. This first post gives you an overview of the benefits and the recommended step-by-step process to follow when migrating to Amazon CloudFront in the AWS Cloud.

The key benefits of running edge network services in the AWS Cloud include the following:

- High security bar – security of the infrastructure and security controls are aligned with the AWS shared responsibility model.

- Developer experience – you can template your configuration with infrastructure as code and deploy it using Continuous Integration and Continuous Deployment (CI/CD) pipelines.

- AWS platform – you can leverage the technical and financial benefits of using the AWS platform together with CloudFront. You can optimize application performance by having the origin and the CDN on the same AWS network, and reduce your Data Transfer Out charges. You can also integrate other AWS services with Amazon CloudFront, such as managing TLS certificates with AWS Certificate Manager.

- Agility – you can write your own edge-hosted logic using Lambda@Edge, combined with any of the wide variety of solutions available from the AWS developer community or from AWS partners.

Below are the recommended steps you should take to successfully migrate to Amazon CloudFront. These steps have been broken into three categories:

Preparation

The business challenge

When starting any migration, you should first define the business challenge. Here is an example – your contract with your current Content Delivery Network provider is ending and you are unhappy with aspects of its cost, performance, and security. Having evaluated several CDNs, you have decided to migrate to Amazon CloudFront for its global reach, high performance, flexible pricing, security, and close integration with AWS services. You want to migrate your site quickly (say, within 3 months) and you would like a clear understanding ahead of time of what it takes to move a site to CloudFront while limiting the impact on your end-users.

Defining scope

Next, you want to define the scope of your migration. If you set the scope of the migration from the outset, you will have a better understanding of the technical and resource requirements, leading to a higher chance of a successful (smooth, timely, and positively business impacting) migration. From here, you can break down the migration into its individual tasks and assign these to a delivery team to carry out.

The first step in scoping the problem is to categorize the migrations, according to complexity and risk, into three buckets – Simple, Complex, and Business Critical. Here are some examples – let’s say that you are planning to migrate an about-us page. You would categorize this as a Simple migration which requires the following tasks:

- Parse existing CDN configuration

- Create an equivalent CloudFront configuration

- Update DNS to point to the CloudFront endpoint.

A comments page is an example of a Complex migration involving the following additional tasks:

- Recreate an HTTP redirect with Lambda@Edge (a feature of CloudFront)

- Serve Geo-specific content using multiple CloudFront behaviors

- Throttle request traffic from individual IPs using AWS WAF
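
As an illustration of the redirect task, here is a minimal sketch of a viewer-request Lambda@Edge function written in Python. The `REDIRECTS` table and its paths are hypothetical placeholders, not part of any real configuration. The function reads the request from the CloudFront event and either returns a 301 response or passes the request through unchanged.

```python
# Hypothetical redirect table: legacy path -> new path (illustrative values only).
REDIRECTS = {
    "/comments-old": "/comments",
}


def handler(event, context):
    """Viewer-request handler: answer known legacy paths with a 301."""
    request = event["Records"][0]["cf"]["request"]
    target = REDIRECTS.get(request["uri"])
    if target is None:
        # No redirect configured: let CloudFront continue with the request.
        return request
    # Returning a response object makes CloudFront reply directly to the viewer.
    return {
        "status": "301",
        "statusDescription": "Moved Permanently",
        "headers": {
            "location": [{"key": "Location", "value": target}],
        },
    }
```

Keeping the redirect rules in a plain dictionary makes it easier to port them from an existing CDN configuration and to test them locally before deployment.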

Assuming that an outage to your company's login page has a direct impact on revenue, you should think about categorizing this type of migration as potentially reputation affecting or Business Critical. Some of the additional steps involved in this type of migration might include the following:

- Use Amazon Route 53 weighted DNS to gradually cut over to CloudFront

- Queue up or replay requests not served

- Allocate additional support resource to deal with customer calls

- Have a detailed plan with steps to roll back or troubleshoot a new configuration

Once you have sorted your sites into these categories you can then start to migrate a subset of each type of site. This can help you limit the risk as you gradually prove out individual migration tasks and refine them as you see fit.

Design and target state

Before you proceed with the migration, you should write up your high-level plan in a design document. This document should cover: the current state of your system, its desired or target state, and the high-level tasks needed to get there. You should highlight the justifications for each task and call out any assumptions or unknowns that may influence your timeline. Communicating the plan clearly to your stakeholders can go a long way towards making your migration a success.

The target state for your login page is a Single Page App on CloudFront which authenticates with an Identity provider. The high-level tasks needed to implement this target state are below:

- Create a viewer request Lambda@Edge function that authenticates and authorizes users with Amazon Cognito

- Upload certificates to AWS Certificate Manager

- Configure a bespoke cache policy with CloudFront

Define ownership

Now that you have an understanding of the migration tasks, you can start to implement them using the AWS Console, the AWS SDK, or CLI. Higher-level services can also be helpful – AWS CodePipeline can build, test, and enable CloudFront configurations as part of a migration.

Security & compliance services like AWS IAM and AWS Config can also help by providing guardrails to ensure teams only have the access they need.

If possible, assign migration tasks to a centralized team or to an individual application owner – for instance, the team that looks after the about-us page. If you find that you need assistance planning and implementing the migration, AWS Professional Services and trusted APN Partners are also available to help out.

Estimate effort

You can now begin to size up each individual task with the migration team. We recommend that you measure the size or effort required. Previous migrations have used units of work or days.

A simple cache control header configuration could measure one unit of work or 0.5 person days whereas writing a complex edge function could measure 5 units of work or 2.5 person days. Once you have estimated the tasks you can begin to plan them into a sprint.
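
The arithmetic behind these estimates can be sketched in a few lines. This assumes the ratio implied by the examples above (one unit of work equals 0.5 person days); the task names in the backlog are illustrative.

```python
PERSON_DAYS_PER_UNIT = 0.5  # ratio implied by the examples above


def person_days(units):
    """Convert units of work into person days."""
    return units * PERSON_DAYS_PER_UNIT


# Illustrative backlog: task name -> estimated units of work.
tasks = {
    "cache control header configuration": 1,
    "complex edge function": 5,
}

total_units = sum(tasks.values())
print(f"{total_units} units = {person_days(total_units)} person days")
```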

Documenting these individual tasks can also help when planning future migrations. As you repeatedly implement a task, take the opportunity to think about refining certain steps – look for opportunities to automate manual tasks for the next time around.

Enable access

You now have a well-defined and documented plan and can start handing out the permissions needed by team members to carry out their migration tasks. By applying the concept of least privilege you will contain any misconfigurations within a limited part of your environment.

AWS IAM allows you to implement least privilege by allowing specific actions against relevant resources. In this way, you could grant UploadServerCertificate permissions to a user performing a certificate related migration task only during office hours.
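
The following sketch shows what such a policy document might look like, built as a Python dictionary. The account ID, timestamps, and the `/cloudfront/` certificate path are assumptions chosen for illustration. Note that the date condition operators compare against fixed timestamps, so this sketch covers a single change window rather than a recurring daily schedule.

```python
import json


def certificate_upload_policy(account_id, start_utc, end_utc):
    """Build an IAM policy allowing certificate uploads inside one time window."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "iam:UploadServerCertificate",
                # Assumed path: certificates intended for CloudFront.
                "Resource": f"arn:aws:iam::{account_id}:server-certificate/cloudfront/*",
                "Condition": {
                    "DateGreaterThan": {"aws:CurrentTime": start_utc},
                    "DateLessThan": {"aws:CurrentTime": end_utc},
                },
            }
        ],
    }


# Placeholder account ID and window, for illustration only.
policy = certificate_upload_policy(
    "123456789012", "2021-09-13T09:00:00Z", "2021-09-13T17:00:00Z"
)
print(json.dumps(policy, indent=2))
```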

AWS IAM can also be used to grant access to higher-level services such as AWS CloudFormation, AWS Cloud Development Kit (CDK), and AWS CodeDeploy that can be used to make continuous improvements.

Ensure Observability

You will find that improving observability can help you better infer the success of a migration. Observability lets you determine the internal state of an environment from external data. AWS services generate rich metrics and detailed logs, which you can use to see the impact of changes to your environment following a migration.

Here are recommendations from previous migrations that you can implement to improve observability:

- Monitor metrics – measure performance metrics before and after a new configuration and compare them. You can track the end-user experience with a combination of synthetic (TTFB, RTT) and real-user measurements. These metrics can be measured with Amazon CloudWatch or services like Dynatrace or Catchpoint.

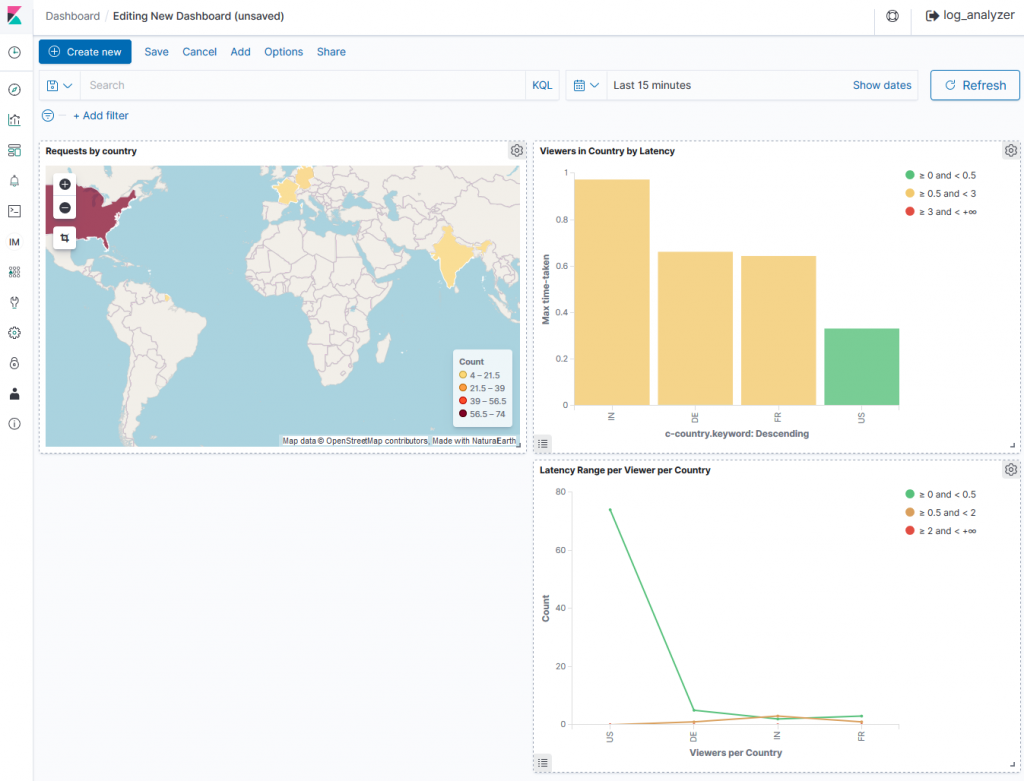

- Surface your metrics – you can integrate with your SIEM – a security information and event management system, or create a dashboard using ELK to highlight important metrics and configure alarms to notify teams when a threshold is breached. Divergent behavior can be identified with CloudWatch Anomaly Detection.

- Log analysis – implement CloudFront Log Analysis by enabling logging throughout your stack and using Amazon Athena or Amazon Elasticsearch Service to follow a request from edge to application.

- Smoke tests – test key end-user journeys with a staged CloudFront distribution. This helps ensure that site functionality is maintained ahead of a cutover.
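
To illustrate the before-and-after comparison recommended under "Monitor metrics", here is a minimal sketch that compares median time to first byte (TTFB) across two sets of samples. The sample values are made up for illustration; in practice they would come from your monitoring tool of choice.

```python
from statistics import median


def compare_ttfb(before_ms, after_ms):
    """Compare median TTFB (in milliseconds) before and after a change."""
    before = median(before_ms)
    after = median(after_ms)
    return {
        "median_before_ms": before,
        "median_after_ms": after,
        # Negative values mean the new configuration is faster.
        "change_pct": round((after - before) / before * 100, 1),
    }


# Illustrative samples only, not real measurements.
result = compare_ttfb([200, 220, 240], [100, 110, 120])
print(result)
```

The median is used here because it is less sensitive to outliers than the mean; a percentile such as p95 may be a better fit if you care about tail latency.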

Migration

Create configuration and the pipeline

Here is an example pipeline for migrating the about-us page. You are going to recreate an existing CDN configuration as a CloudFront Cache Policy and distribution.

Certificates are added to AWS Certificate Manager and any logic is recreated with Lambda@Edge.

- Interpret existing configurations – capture the functionality of existing configurations including default site-wide cache-control policies, custom policies for subdomains or specific path URIs. Capture any advanced metadata used to implement logic.

- Design target state for specific properties/applications – plan which features and properties to add to your edge configurations as built-in properties or as a Lambda@Edge function. Consider complexity, cost, and performance when making this decision.

- Create CloudFront configurations – you can now start to recreate your CDN configuration on CloudFront.

- Structure your distribution according to the target state – specifying single or multiple aliases, and multiple behaviors for Lambda@Edge functions.

- Define caching policies for default or individual behaviors including error caching.

- Ensure there are mechanisms to fail over to a cache-able static holding page while also alerting teams.

- Security controls – use AWS WAF and AWS Shield Advanced (a DDoS protection service) to protect your web applications and APIs against common web exploits and DDoS attacks. You can create rules to block common attacks and apply these to configurations using a deployment pipeline. You can also restrict access to your origin to the published CloudFront IP ranges or by using IAM policies.

- Import certificates – adding certificates to AWS Certificate Manager (ACM) will allow them to be included in your CloudFront configurations.

- Recreate logic with Lambda@Edge – advanced configuration logic can be implemented with Lambda@Edge functions, a feature of CloudFront. You can enable metrics and logging on Lambda@Edge to help understand issues, misconfiguration and service limits. For examples, please visit our documentation.
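
As a sketch of the caching policy step, the helper below builds a configuration dictionary in the shape expected by the CloudFront `CreateCachePolicy` API (for example, as the `CachePolicyConfig` argument of the boto3 `create_cache_policy` call). The policy name and TTL values are assumptions for a static page such as about-us; you would derive the real values from your existing CDN configuration.

```python
def build_cache_policy_config(name, default_ttl=86400, max_ttl=31536000, min_ttl=0):
    """Build a CloudFront cache policy config for fully static content."""
    if not min_ttl <= default_ttl <= max_ttl:
        raise ValueError("TTLs must satisfy min_ttl <= default_ttl <= max_ttl")
    return {
        "Name": name,
        "Comment": "Static content: cache key ignores headers, cookies and query strings",
        "DefaultTTL": default_ttl,
        "MaxTTL": max_ttl,
        "MinTTL": min_ttl,
        "ParametersInCacheKeyAndForwardedToOrigin": {
            "EnableAcceptEncodingGzip": True,
            "EnableAcceptEncodingBrotli": True,
            "HeadersConfig": {"HeaderBehavior": "none"},
            "CookiesConfig": {"CookieBehavior": "none"},
            "QueryStringsConfig": {"QueryStringBehavior": "none"},
        },
    }


# Hypothetical policy name for the about-us page.
config = build_cache_policy_config("about-us-static")
```

Building the configuration as data, separate from the API call that applies it, lets you review and version the policy before a pipeline deploys it.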

Stage configuration

Perform existing tests against the stage endpoint. If the end-user can access this stage endpoint, and retrieves the expected content, the team can begin to plan a cutover.

Test harnesses point to spoofed live endpoints and can be used to validate configurations ahead of a cutover.

- Local host entries, local DNS servers and testing tools can be configured to forward requests to stage configurations.

- A smoke test helps you discover regression errors introduced with new configurations. You should also validate key end-user journeys.
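
A smoke test of this kind can be sketched with the Python standard library. The journeys and base URL are hypothetical, and the `fetch` parameter is an assumption added so the check can be exercised without network access. Note that `urllib` follows redirects, so the status recorded is that of the final response.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for a GET request to url."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx responses; the code is still what we check.
        return err.code


def smoke_test(base_url, journeys, fetch=fetch_status):
    """Return (path, expected, actual) for every journey that fails."""
    failures = []
    for path, expected in journeys.items():
        actual = fetch(base_url + path)
        if actual != expected:
            failures.append((path, expected, actual))
    return failures
```

For example, `smoke_test("https://stage.example.com", {"/": 200, "/about-us": 200})` returns an empty list when both pages respond as expected on the staged endpoint.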

Switching to CloudFront

You should consider gradually rolling out changes to reduce the blast radius, in case of a misconfiguration. You can do this by using Amazon Route 53 with weighted DNS to distribute a proportion of requests to legacy and updated endpoints. Closely observe metrics and logs for your website as you tip the weighting to CloudFront endpoints.

- Consider beginning the cutover in a single AWS Region and during low traffic periods to minimize interruptions to end-users.

- Staggering the amount of traffic distributed across endpoints with weighted routing can help manage the change.
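
The weighted cutover can be sketched as a helper that builds a Route 53 change batch, in the shape accepted by the `ChangeResourceRecordSets` API (for example, the `ChangeBatch` argument of the boto3 `change_resource_record_sets` call). The record name, both targets, and the set identifiers are hypothetical. Route 53 accepts weights from 0 to 255; this sketch restricts them to 0 to 100 so that each weight reads as a percentage of traffic.

```python
def weighted_change_batch(record_name, legacy_target, cloudfront_target,
                          cloudfront_weight, ttl=60):
    """Build a change batch splitting traffic between legacy CDN and CloudFront."""
    if not 0 <= cloudfront_weight <= 100:
        raise ValueError("cloudfront_weight must be between 0 and 100")

    def record(set_id, target, weight):
        return {
            "Action": "UPSERT",
            "ResourceRecordSet": {
                "Name": record_name,
                "Type": "CNAME",
                "SetIdentifier": set_id,  # distinguishes the weighted records
                "Weight": weight,
                "TTL": ttl,  # a short TTL lets weight changes take effect quickly
                "ResourceRecords": [{"Value": target}],
            },
        }

    return {
        "Comment": f"Shift {cloudfront_weight}% of traffic to CloudFront",
        "Changes": [
            record("legacy-cdn", legacy_target, 100 - cloudfront_weight),
            record("cloudfront", cloudfront_target, cloudfront_weight),
        ],
    }


# Placeholder names: start by sending 10% of requests to CloudFront.
batch = weighted_change_batch(
    "www.example.com", "legacy.example-cdn.net",
    "d111111abcdef8.cloudfront.net", cloudfront_weight=10,
)
```

Rerunning the helper with a higher `cloudfront_weight` at each stage, while watching metrics and logs, gives a simple and reversible rollout: setting the weight back to 0 returns all traffic to the legacy endpoint.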

Monitoring

Observing key metrics and logs after cutover can help you discover misconfigurations. Here are some examples to look out for:

- The old endpoint is still receiving traffic – in this case you need to update a DNS record to point to the new destination.

- Change is affecting users – are there any user journeys affected after the cutover? This might be the result of certificate migration delays or stale cached content.

Finally, have a contingency plan. In case of an issue, be ready with documentation and a referable plan to roll back to the previous configuration or to fix forward using a CI/CD pipeline.

Documentation

Documentation is an important element in your project. Placing documentation for build configuration in a version control system like Git helps you track changes and recover earlier versions.

Referring to a detailed set of documentation helps ensure business continuity. Here are some of the key considerations when preparing documentation:

- Document configurations – include reasoning, flowcharts, and code in your documentation.

- Version control configurations – changes to documentation and code are tracked, making it easier to understand and roll back changes.

- Create a runbook – capture the change process, common issues, incident handling, and escalation in a runbook.

- Create a Disaster Recovery plan – include steps to rebuild your configurations into a new account

Post Migration

The migration is now complete. Legacy distributions can be removed and any old integrations and configurations can be trimmed.

To complete the process, perform any clean-up tasks:

- Clean up distribution – disable unused configurations that are no longer serving traffic.

- Remove integrations – remove obsolete integrations, certificate stores or third-party monitoring services.

- Remove old CNAMEs – disable any unused CNAMEs and certificates.

Ongoing maintenance

It is important to continue monitoring for performance variations and to protect against malicious attacks. AWS Services help build automations for continuous improvement. Using AWS WAF Security Automations and AWS Firewall Manager, you can ensure all your CloudFront distributions are protected. Here are the common tasks you should take up to maintain healthy distributions.

- Monitoring and remediation – end-user journeys and endpoints should be monitored, with events being triggered when required. You could configure a CloudWatch event to notify the site owner, or automatically throttle requests.

- Deploy with CI/CD – use the CloudFront API, AWS CodePipeline, and AWS CloudFormation to create your Continuous Delivery pipeline.

- Audit configuration changes – enabling AWS CloudTrail and AWS Config helps you monitor and track changes made to your CloudFront configurations. Maintaining audit logs helps you satisfy regulatory or compliance requirements.

- Create Billing Alarms – you can configure billing alarms to track and manage the cost of your workloads.

- Automate current workflows – you can leverage API operations to automate existing workflows such as invalidating an asset after publishing an updated version.
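
As an example of the invalidation workflow, the sketch below builds the batch structure used by the CloudFront `CreateInvalidation` API (for example, the `InvalidationBatch` argument of the boto3 `create_invalidation` call). The paths and caller reference are illustrative; a publishing pipeline would supply the paths of the assets it has just updated.

```python
import time


def invalidation_batch(paths, caller_reference=None):
    """Build a CloudFront invalidation batch for the given asset paths."""
    for path in paths:
        if not path.startswith("/"):
            raise ValueError(f"invalidation paths must start with '/': {path!r}")
    items = sorted(set(paths))  # de-duplicate so Quantity matches Items
    return {
        "Paths": {"Quantity": len(items), "Items": items},
        # CallerReference must be unique per request; default to a timestamp.
        "CallerReference": caller_reference or f"publish-{int(time.time())}",
    }


# Illustrative paths and reference only.
batch = invalidation_batch(
    ["/css/site.css", "/index.html", "/index.html"],
    caller_reference="release-42",
)
```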

Summary

In this post, we introduced you to some common steps and considerations when migrating to CloudFront. As we continue to post blogs in this series, we will use real customer migration stories to demonstrate specific examples and highlight informative migration journeys, along with the successes and challenges that they faced.

As new blog posts roll out in this series, we will add links here. Additional blogs:

- CloudFront migration series (Part 2): Audible Plus, The Turning Point

- CloudFront migration series (Part 3): OLX Europe, The DevOps way