AWS Partner Network (APN) Blog

AWS Cloud Economics: Where Every Dollar Counts

By Narasimhan Balasubramanian, Chief Architect, AWS Practice – Cognizant

By Dan Margulies, Principal Partner Solutions Architect – AWS


Organizations hosting workloads on the cloud are more flexible and scalable, something made possible by different service-level agreement (SLA) options. However, these come with varying costs, and the onus is on organizations to choose the appropriate service and cost.

For those adopting cloud, financial governance and cloud economics have taken center stage. It’s imperative that companies adopt an appropriate governance policy to validate and monitor their cloud spend.

Cloud cost management, or cloud optimization, is the organizational strategy that enables effective monitoring and cost control within cloud environments.

This is often the highest priority for organizations on the cloud, as Flexera notes in their 2020 State of the Cloud Report:

“Organizations are running over budget on their cloud spend by an average of 23 percent. Cloud savings and spend optimization continues to be the topmost priority for cloud customers four years in a row. Cloud adoption progress is dependent on the success of this effort.”

Cloud cost optimization should be an integral part of the transformation process and not only be considered an operations task. For example, cloud migration costs are a big chunk of the overall cloud adoption engagement, and organizations must safeguard themselves from cost overruns.

In this post, I will discuss Cognizant’s AWS economics service offering and how cost optimization techniques can be incorporated into Amazon Web Services (AWS) across the different stages of cloud transformation.

Cognizant is an AWS Premier Consulting Partner with five AWS Competencies. Cognizant is also a member of the AWS Managed Service Provider (MSP) and AWS Well-Architected Partner Programs.

Cloud Adoption

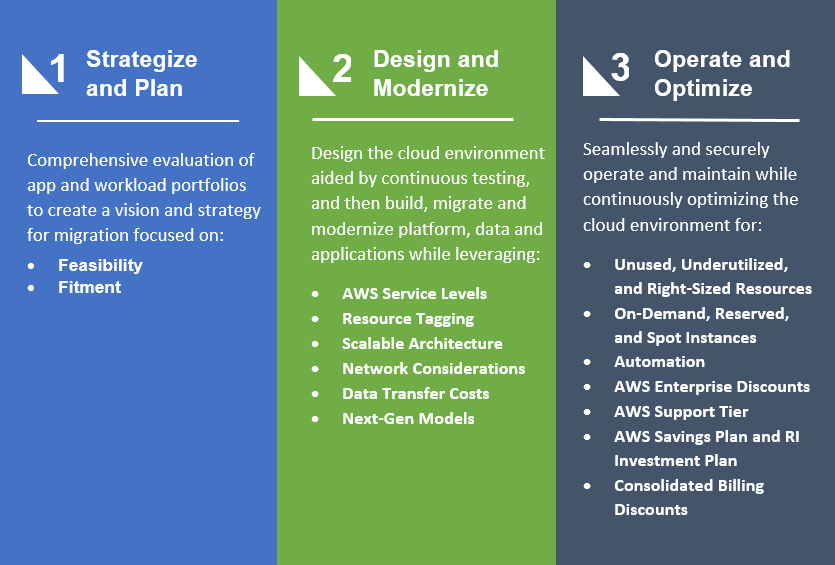

First, let’s take a look at the comprehensive Cognizant Cloud Steps Transformation Framework.

The Cloud Steps Transformation Framework is a minimally intrusive, automation-focused Agile end-to-end methodology that covers the breadth of the cloud adoption journey. A well-defined repeatable framework goes a long way in ensuring a smooth cloud migration effort, and prevents any unexpected surprises that may have cost implications.

The three-phased framework includes:

- Strategize and Plan

- Design and Modernize

- Operate and Optimize

Each phase comprises activities, tools, and accelerators that help achieve a highly efficient, automated, and streamlined platform with improved return on investment (ROI) and lower total cost of ownership (TCO) for savings in cloud spend.

Figure 1 – Cloud Steps Transformation Framework and AWS economics.

Strategize and Plan

This stage assesses cloud alignment to an organization’s objectives while evaluating their current environment and workloads.

Cloud providers are constantly updating their service catalogs to cater to the range of use cases that different markets demand. An organization, with the help of service integrators (SIs), must determine the means to move their workloads to the cloud.

There are two critical topics organizations need to explore during a cloud transformation assessment:

Feasibility

Are workloads really in shape to be migrated to the cloud, or are there any specific characteristics that would prevent them from functioning on the cloud? Such proactive assessment of the workloads prevents a lot of wasted time and effort, not to mention unnecessary costs.

In one of our engagements, a large media organization in North America had telecom-related applications that needed a physical connection. The cost of the virtual appliance to ensure this connection made it impractical to move the workloads to the cloud.

Fitment

Workloads that have cloud feasibility need to be further analyzed to determine the most appropriate means of migration. This could vary from a simple lift-and-shift to a more rigorous inside-out re-architecture of the workload.

While addressing an AWS migration solution for a client, we realized they were using Oracle Real Application Clusters (RAC). Oracle does not provide support for its RAC technology on any third-party clouds.

Workloads using RAC should either look at an alternative solution, which may involve a re-architect effort, or run on the cloud without Oracle support.

Design and Modernize

The cloud can be viewed as a bunch of IT and application-related services provided by the vendor, each of which has an SLA associated with it. This model is different from the traditional on-premises model where service layers were thin and siloed.

Decisions taken during this phase are usually not easily reworked, so it’s important to factor in the optimum cloud costs during the design and migration.

AWS Service Levels

Let’s look at Amazon Simple Storage Service (Amazon S3), which offers six storage classes, each with its own monthly cost: S3 Standard, S3 Intelligent-Tiering, S3 Standard-Infrequent Access, S3 One Zone-Infrequent Access, S3 Glacier, and S3 Glacier Deep Archive. Here’s a link to the comparison view of the different storage classes.

Organizations have the flexibility to choose an optimum storage class as per the use case.
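One common way to act on the storage class choice is a lifecycle rule that moves objects to cheaper classes as they age. The following is a minimal sketch, not a recommended policy: the helper name `build_lifecycle_rule`, the `backups/` prefix, and the day thresholds are illustrative assumptions. The dictionary follows the shape that the S3 lifecycle configuration API accepts.

```python
def build_lifecycle_rule(prefix, ia_after_days, glacier_after_days, deep_archive_after_days):
    """Return an S3 lifecycle configuration dict that tiers objects down over time.

    The thresholds are hypothetical inputs; choose them from real access patterns.
    """
    if not (ia_after_days < glacier_after_days < deep_archive_after_days):
        raise ValueError("transition days must be strictly increasing")
    return {
        "Rules": [
            {
                "ID": f"tier-down-{prefix.strip('/') or 'all'}",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                    {"Days": deep_archive_after_days, "StorageClass": "DEEP_ARCHIVE"},
                ],
            }
        ]
    }


# Example: backups move to Infrequent Access, then Glacier, then Deep Archive.
config = build_lifecycle_rule("backups/", 30, 90, 365)
```

A dictionary like `config` could then be passed as the `LifecycleConfiguration` argument of boto3's `put_bucket_lifecycle_configuration` call for a given bucket.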

Resource Tagging

Cloud optimization begins with proper monitoring of an organization’s cloud resource inventory, and a comprehensive tagging strategy is important to gain visibility to the cloud resources.

Tagging is compulsory for organizations that want to set up a cost-effective Dev/Test environment, as resources to be turned on and off must be tagged. Tagging is also the key to giving operations teams organization-wide coverage of resources.
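A tagging strategy is only useful if gaps are detected early. The sketch below shows one way to report resources that are missing mandatory tags. The tag keys in `REQUIRED_TAGS` and the inventory format (resource ID mapped to a dict of tags) are assumptions made for illustration, not a Cognizant or AWS standard.

```python
# Hypothetical tagging policy; real policies vary by organization.
REQUIRED_TAGS = {"Environment", "Owner", "CostCenter"}


def missing_tags(resource_tags, required=REQUIRED_TAGS):
    """Return the sorted list of required tag keys absent from a resource."""
    return sorted(required - set(resource_tags))


def untagged_report(inventory):
    """Map each non-compliant resource ID to the tag keys it is missing."""
    report = {}
    for resource_id, tags in inventory.items():
        gaps = missing_tags(tags)
        if gaps:
            report[resource_id] = gaps
    return report


inventory = {
    "i-0001": {"Environment": "dev", "Owner": "team-a", "CostCenter": "1234"},
    "vol-0002": {"Environment": "dev"},
}
report = untagged_report(inventory)
```

Here only `vol-0002` appears in the report, because it lacks the `CostCenter` and `Owner` tags.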

Scalable Architecture

Workloads that can experience spikes in request volumes should be set up within an auto scaling group. AWS scales the resources up and down, according to the policies set up in the auto scaling group, to meet the current demand.

This feature helps in optimizing cost of the resources without compromising performance. It can be used against Amazon Elastic Compute Cloud (Amazon EC2) instances including spot fleets, Amazon Elastic Container Service (Amazon ECS) tasks, Amazon DynamoDB, and Amazon Aurora.
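To make the scaling idea concrete, here is a simplified proportional rule in the spirit of a target tracking policy. It is a sketch of the general principle only, not the algorithm AWS uses internally; the function name and all parameters are illustrative assumptions.

```python
import math


def desired_capacity(current, metric_value, target_value, min_size, max_size):
    """Scale capacity in proportion to how far the metric is from its target.

    current:      number of instances running now
    metric_value: observed average metric (for example, CPU percent)
    target_value: metric level the group should hold
    """
    if target_value <= 0:
        raise ValueError("target_value must be positive")
    proposed = math.ceil(current * metric_value / target_value)
    # Never go below the floor or above the ceiling set for the group.
    return max(min_size, min(max_size, proposed))


scale_out = desired_capacity(current=4, metric_value=90, target_value=60, min_size=2, max_size=10)
scale_in = desired_capacity(current=4, metric_value=30, target_value=60, min_size=2, max_size=10)
```

With four instances and a 60 percent target, a 90 percent reading proposes six instances and a 30 percent reading proposes two, so cost follows demand in both directions.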

Network Considerations

Different AWS services, including Amazon Virtual Private Cloud (Amazon VPC) and AWS Direct Connect, help address an organization’s networking needs. For example, AWS Direct Connect is available in two tiers, at 1 Gbps and 10 Gbps capacity.

These services have different costs, and the onus is on organizations to understand their networking bandwidth needs at the cloud foundation stage to decide which tier to procure. This helps avoid paying for capacity that may not be needed.

Data Transfer Costs

The cloud gives a lot of freedom in terms of data mobility, but at a higher price. The data flow design for an organization’s AWS architecture should provide the least expensive route to minimize the cost of data transfer.

Different AWS services have different rules for charging data transfer (in and out). Amazon Kinesis Data Streams pricing includes data transfer costs, while Amazon S3 charges for data transfer out between regions and out to the internet while keeping data transfer in free of charge.

Amazon EC2 instances are charged for data transfer in and out when data is moved across AWS Availability Zones. A comprehensive understanding of the data transfer rules is essential to building data flow designs on AWS.

Next-Gen Models

With the next-gen compute models, cloud has further refined the pay-as-you-go philosophy down to the output, request, and CPU levels. Containers and container platforms help optimize resources and reduce cost spent on the cloud.

AWS Lambda offers a pay-as-you-go pricing model with sub-second metering. AWS services that provide caching facilities also help reduce data transfer cost. Amazon CloudFront, with caching at edge locations, and in-memory caching services like Amazon ElastiCache help retain data at the appropriate locations, thus reducing data transfer cost.

One of our multinational food customers at Cognizant had a database backup configured to Amazon S3 using the network address translation (NAT) gateway. Data was going in and out of the VPC to the NAT gateway, thus increasing charges for the company.

Our solution was to introduce a VPC endpoint for that S3 bucket and save on the network charges. With this change, the customer was able to realize a total annual saving of $76,864.
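The arithmetic behind this kind of saving can be sketched as follows. The per-GB rate and the monthly volume are hypothetical placeholders, not the customer's figures or current AWS prices; the sketch assumes NAT gateway traffic incurs a per-GB processing charge and that a gateway VPC endpoint for S3 adds no per-GB charge.

```python
def annual_processing_cost(gb_per_month, rate_per_gb):
    """Yearly per-GB processing charge for traffic on a given path."""
    return gb_per_month * rate_per_gb * 12


def annual_saving_with_endpoint(gb_per_month, nat_rate_per_gb, endpoint_rate_per_gb=0.0):
    """Saving from routing S3 traffic through a VPC endpoint instead of a NAT gateway.

    endpoint_rate_per_gb defaults to 0.0 (assumption: gateway endpoint for S3).
    """
    via_nat = annual_processing_cost(gb_per_month, nat_rate_per_gb)
    via_endpoint = annual_processing_cost(gb_per_month, endpoint_rate_per_gb)
    return via_nat - via_endpoint


# Hypothetical inputs: 10,000 GB of backups per month at an assumed NAT rate.
saving = annual_saving_with_endpoint(gb_per_month=10_000, nat_rate_per_gb=0.045)
print(f"Estimated annual saving: ${saving:,.2f}")
```

The saving grows linearly with backup volume, which is why large, recurring transfers such as database backups are worth checking first.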

One of our global biopharmaceutical customers at Cognizant had a disaster recovery (DR) setup in another region where they were doing a full backup of data, and replication of their data happened multiple times based on their recovery point objective (RPO) and recovery time objective (RTO). We advised the company to instead go for an incremental backup, which reduced their data transfer cost considerably.

In an analysis of one of our customers, we projected 62 percent cost savings on infrastructure alone by moving their applications (125 in number) to containers.

Operate and Optimize

Similar to compliance-related efforts, organizations need to conduct cloud optimization at regular intervals to ensure the runtime environment is still being cost effective. Some of the tenets that come into play during the cloud operation cycle include:

Unused, Underutilized, and Right-Sizing Resources

Cloud is elastic, and utilization of a resource can tend to zero. With the pay-as-you-go model on AWS, removing orphaned resources, consolidating underutilized resources, and right-sizing resources for their workloads are important factors in reducing cost.

Amazon Elastic Block Store (Amazon EBS) volumes orphaned after the instances they were attached to have been deleted, unused or orphaned IP addresses, and Amazon EC2 Reserved Instances (RIs) purchased and not used are some of the areas that need to be checked.

An analysis of our internal Cognizant Life Science platform on AWS revealed EBS volumes that had less than one input/output operation per second (IOPS) of utilization for the last seven days, while they were incurring charges. Deleting them resulted in a 6 percent cost reduction.

Another analysis of a Cognizant Life Science platform running on AWS revealed 170 instances with daily CPU utilization of less than 5 percent for the last 14 days, and a network I/O of less than 5 MB, clearly flagging them as underutilized. The recommendation was to consolidate onto fewer instances and delete the rest, with an estimated 25 percent cost reduction.
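The flagging rule described above can be sketched as a simple filter. The thresholds (5 percent CPU, 5 MB network I/O, 14 days) come from the analysis; the `InstanceStats` structure and field names are assumptions for illustration, and in practice the metrics would come from a monitoring service.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class InstanceStats:
    instance_id: str
    daily_cpu_percent: List[float]  # one reading per day, oldest first
    network_io_mb: float            # network I/O over the same window


def is_underutilized(stats, cpu_threshold=5.0, network_threshold_mb=5.0, days=14):
    """Flag an instance whose CPU stayed under the threshold every day of the window."""
    window = stats.daily_cpu_percent[-days:]
    if len(window) < days:
        return False  # not enough history to judge
    low_cpu = all(cpu < cpu_threshold for cpu in window)
    return low_cpu and stats.network_io_mb < network_threshold_mb


fleet = [
    InstanceStats("i-idle", [2.0] * 14, 1.2),
    InstanceStats("i-busy", [2.0] * 13 + [40.0], 1.2),
    InstanceStats("i-new", [1.0] * 3, 0.5),
]
flagged = [s.instance_id for s in fleet if is_underutilized(s)]
```

Requiring a full window of history avoids flagging newly launched instances that simply have not seen load yet.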

On-Demand, Reserved, and Spot Instances

Based on the requirement of the servers, organizations should look at procuring Reserved or Spot instances for their workloads. Production environments that would have a longer life span should procure the discounted RIs.

AWS further provides the flexibility to exchange instances under the EC2 Convertible RI offering. While the overall discount drops a bit (2-4 percent), it provides added flexibility.

Spot Instances suit fault-tolerant workloads, such as batch processing and data analytics, that need a cluster of instances. The rates for Spot Instances are low, but they are set by AWS based on supply and demand and apply for a limited time period. There is no guarantee that these instances remain available past that time period.

A popular food chain in North America saw an annual savings of $73,000 by converting their production on-demand instances into RIs.

As part of a cost optimization exercise for a Denmark-based insurance customer, instance and storage optimization were the focus. This exercise projected annual savings of $502,000 and these included Amazon Relational Database Service (Amazon RDS) and EC2 downgrades, auto shutdown of Dev/Test environments, RI conversion, and storage optimization.

Automation

Intelligent, self-healing automation is the key for cost optimization when it comes to cloud operations. The Site Reliability Engineering (SRE) model for operations has widely been adopted across organizations to maximize the automation.

Dev/Test environment automation is another area where organizations can greatly benefit. Businesses using Amazon WorkSpaces in their development environments should automate the start-stop process for these desktop-as-a-service instances.

WorkSpaces pricing is available in two options: a fixed monthly fee with unlimited usage, and hourly billing that bundles a low fixed monthly fee with an hourly rate charged only for the time the WorkSpace is used.

The breakeven is around 3-4 hours of usage per day. If these WorkSpaces are needed for more than four hours a day, it’s advisable to go for the fixed monthly fee; otherwise, the hourly option is cheaper. Furthermore, AWS provides a WorkSpaces-specific cost optimizer implementation.
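The breakeven can be worked out from the two pricing options. The sketch below uses illustrative placeholder prices and an assumed 22 working days per month; actual prices depend on the bundle and region.

```python
def breakeven_hours_per_day(monthly_fee, hourly_base_fee, hourly_rate, working_days=22):
    """Daily usage at which hourly billing costs the same as the fixed monthly fee."""
    if hourly_rate <= 0 or working_days <= 0:
        raise ValueError("hourly_rate and working_days must be positive")
    return (monthly_fee - hourly_base_fee) / hourly_rate / working_days


def cheaper_option(hours_per_day, monthly_fee, hourly_base_fee, hourly_rate, working_days=22):
    """Return 'monthly' or 'hourly' for the expected daily usage."""
    threshold = breakeven_hours_per_day(monthly_fee, hourly_base_fee, hourly_rate, working_days)
    return "monthly" if hours_per_day > threshold else "hourly"


# Placeholder prices (assumptions): $35 fixed monthly, or $9.75 base plus $0.30 per hour.
threshold = breakeven_hours_per_day(35.0, 9.75, 0.30)
print(f"Breakeven: {threshold:.2f} hours per day")
```

With these assumed prices the breakeven falls between three and four hours per day, in line with the rule of thumb above.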

A popular food retailer in North America enjoyed an annual savings of $20,000 by automating their snapshot cleanup process.

In another instance, a popular educational media publisher had automated CPU threshold monitoring and alerting put in place through AWS Systems Manager (SSM), which helped the company realize a soft dollar saving of $39,600 annually for the operations team.

In a cost optimization exercise conducted for one of our Denmark-based insurance customers, we recognized instances that were not needed throughout the month. By introducing an auto shutdown feature, the customer saw a savings of $464.26 per month.
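An auto shutdown feature of this kind usually comes down to a schedule check driven by tags. The sketch below is one possible rule, assuming a hypothetical `Environment` tag and weekday business hours of 08:00 to 19:00; it only decides the desired state and leaves the actual start and stop calls to the automation that invokes it.

```python
from datetime import datetime


def should_be_running(tags, now, start_hour=8, stop_hour=19):
    """Decide whether an instance should be up at the given time.

    Only instances tagged as dev or test follow the schedule; everything
    else is left running. The tag key and hours are assumptions.
    """
    if tags.get("Environment") not in {"dev", "test"}:
        return True
    is_weekday = now.weekday() < 5  # Monday is 0, Saturday is 5
    return is_weekday and start_hour <= now.hour < stop_hour


weekday_morning = should_be_running({"Environment": "dev"}, datetime(2024, 1, 3, 10, 0))
saturday = should_be_running({"Environment": "dev"}, datetime(2024, 1, 6, 10, 0))
production = should_be_running({"Environment": "prod"}, datetime(2024, 1, 6, 2, 0))
```

A scheduled job could evaluate this function for each tagged instance and stop or start it when the desired state differs from the current one.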

AWS Enterprise Discounts

AWS provides an enterprise-specific discount on its services in return for a committed annual spend. On average, we at Cognizant have seen our customers get a 14-18 percent discount based on their commitment.

AWS Support Tier

AWS provides three tiers of support plans on top of their basic plan: Developer, Business, and Enterprise. These have varying degrees of support capabilities and pricing.

The pricing detail is also tiered within these support plans. A careful understanding of an organization’s cloud resource requirements and ongoing support needs will go a long way in arriving at the appropriate support tier.

Following is a comprehensive comparison of the three support tiers.

AWS Savings Plans and RI Investment Plans

AWS Savings Plans offer up to a 72 percent discount compared to on-demand costs, in return for a commitment from an organization on the use of compute power, measured in dollars per hour. Savings Plans are distinct from RI discounts, and the two discounts do not stack on the same usage.
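Because the commitment is expressed in dollars per hour, it helps to see how a given commitment interacts with actual usage. The following is a simplified model that assumes a single flat discount rate across all covered usage, which real plans do not guarantee; the function name and the figures are illustrative.

```python
def hourly_cost(on_demand_usage, commitment, discount):
    """Cost for one hour under a simplified commitment model.

    on_demand_usage: value of the hour's usage at on-demand rates (dollars)
    commitment:      committed spend per hour (dollars), paid even if unused
    discount:        flat discount on covered usage, between 0 and 1 (assumption)
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    # On-demand value of usage that the commitment can absorb.
    covered = commitment / (1 - discount)
    overflow = max(0.0, on_demand_usage - covered)
    return commitment + overflow


# A $5/hour commitment at an assumed 50 percent discount covers $10 of on-demand usage.
busy_hour = hourly_cost(on_demand_usage=12.0, commitment=5.0, discount=0.5)
quiet_hour = hourly_cost(on_demand_usage=4.0, commitment=5.0, discount=0.5)
```

In the busy hour the bill is lower than on-demand, while in the quiet hour the commitment is still paid in full, which is why the commitment should be sized against steady baseline usage rather than peaks.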

Savings Plans are of two types: Compute Savings Plans and EC2 Instance Savings Plans. Compute Savings Plans cover not just the different types of EC2 instances but also AWS Fargate and AWS Lambda usage. EC2 Instance Savings Plans are specific to a region.

RIs, meanwhile, also come in two types: Convertible RIs and Standard RIs. Convertible RIs are exchangeable.

Consolidated Billing Discounts

Many AWS service costs are structured to offer discounts in line with growth in volume. Organizations can consolidate the resource needs of their different accounts into a single master bill to gain volume discounts.

AWS Organizations is an account management service that enables consolidation of multiple AWS accounts into an organization that can be centrally managed.
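The effect of consolidation on volume-tiered pricing can be illustrated with a small calculation. The tier sizes and rates below are hypothetical placeholders, not a published AWS price list.

```python
# Hypothetical volume tiers: (tier size in GB, price per GB); None means unbounded.
TIERS = [(50_000, 0.023), (450_000, 0.022), (None, 0.021)]


def tiered_cost(gb, tiers=TIERS):
    """Monthly cost of `gb` of usage under marginal volume-tier pricing."""
    remaining = gb
    total = 0.0
    for size, rate in tiers:
        in_tier = remaining if size is None else min(remaining, size)
        total += in_tier * rate
        remaining -= in_tier
        if remaining <= 0:
            break
    return total


# Two accounts using 40,000 GB each, billed separately versus on one consolidated bill.
separate = tiered_cost(40_000) + tiered_cost(40_000)
consolidated = tiered_cost(80_000)
print(f"Separate: ${separate:,.2f}  Consolidated: ${consolidated:,.2f}")
```

Billed separately, neither account leaves the first tier; combined, part of the usage reaches the cheaper second tier, so the consolidated bill is lower.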

Conclusion

Cloud economics and cloud spend are at the top of the priority list for enterprises. Traditionally, cloud spend monitoring has been considered part of the cloud operation phase, but Cognizant advocates the tenets of cloud economics throughout an enterprise’s transformation.

Cognizant’s AWS economics service offering is integrated across every phase of the journey for actionable insights and optimized cloud spend. For more details, contact AWSOfferings@cognizant.com.

The content and opinions in this blog are those of the third-party author and AWS is not responsible for the content or accuracy of this post.

Cognizant – AWS Partner Spotlight

Cognizant is an AWS Premier Consulting Partner that transforms customers’ business, operating, and technology models for the digital era by helping organizations envision, build, and run more innovative and efficient businesses.
