Live call analytics and agent assist for your contact center with Amazon language AI services

August 2025: For conversational analytics, refer to Amazon Connect Contact Lens with external voice.

Update August 2024 (v0.9.0) – Now includes audio streaming from softphone or meeting web apps, a sample server for Talkdesk audio integration, automatic language identification, Anthropic’s Claude 3 LLMs on Amazon Bedrock, Knowledge Bases for Amazon Bedrock, and much more. For details, see New features.

Your contact center connects your business to your community, enabling customers to order products, callers to request support, clients to make appointments, and much more. When calls go well, callers retain a positive image of your brand, and are likely to return and recommend you to others. And the converse, of course, is also true.

Naturally, you want to do what you can to ensure that your callers have a good experience. There are two aspects to this:

- Help supervisors assess the quality of your callers’ experiences in real time – For example, your supervisors need to know if initially unhappy callers become happier as the call progresses. And if not, why? What actions can be taken, before the call ends, to help the agent improve the customer experience for calls that aren’t going well?

- Help agents optimize the quality of your callers’ experiences – For example, can you deploy live call transcription, call summarization, or AI-powered agent assistance? These features remove the need for your agents to take notes, and provide them with contextually relevant information and guidance during calls, freeing them to focus more attention on providing positive customer interactions.

Contact Lens for Amazon Connect provides real-time supervisor and agent assist features that could be just what you need, but you may not yet be using Amazon Connect. You need a solution that also works with your existing contact center.

Amazon Machine Learning (ML) services like Amazon Transcribe, Amazon Comprehend, and Amazon Bedrock provide feature-rich APIs that you can use to transcribe and extract insights from your contact center calls at scale. Amazon Lex provides conversational AI capabilities that can capture intents and context from conversations, and Knowledge Bases for Amazon Bedrock offer intelligent search features that can provide useful information to agents based on callers’ needs. Although you could build your own custom call analytics and agent assist solutions using these services, that requires time and resources. In this post, we discuss our sample solution for live call analytics with agent assist.

Solution overview

Our sample solution, Live Call Analytics with Agent Assist (LCA), does most of the heavy lifting associated with providing an end-to-end solution that can plug into your contact center and provide the intelligent insights that you need.

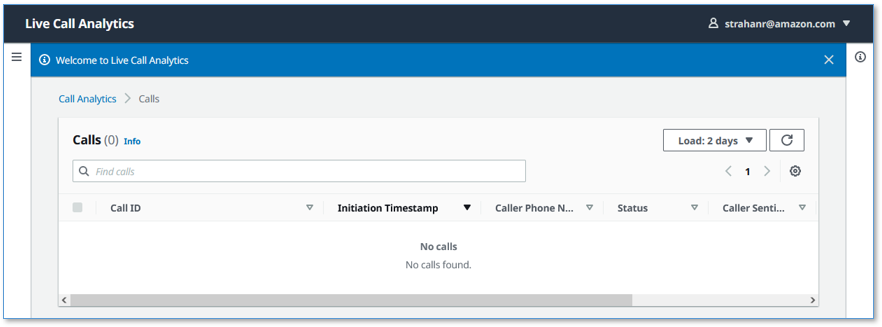

It has a call list user interface, as shown in the following screenshot.

It also has a call detail user interface.

LCA currently supports the following features:

- Accurate streaming transcription with support for personally identifiable information (PII) redaction, custom vocabulary, and custom language models

- Supports many languages and can be configured to automatically detect the spoken language

- Sentiment detection

- Issue detection using Amazon Transcribe Real-time Analytics

- Call category detection using Amazon Transcribe Real-time Analytics category rules

- Call alerts and alert notifications via email or text messages

- Integration with the companion Post Call Analytics (PCA) solution

- Integration with Chime Call Analytics for audio ingestion

- Real-Time Voice Tone Analysis when using Chime Call Analytics

- Generative transcript summarization for each completed call, using Anthropic’s Claude LLMs on Amazon Bedrock.

- Generative AI Agent Assist features for contextual understanding and generative question answering based on knowledge articles, using Amazon Bedrock.

- Agent Assist Bot interaction widget allowing agents to directly execute knowledge base queries, FAQ lookups, or automate actions, with customizable quick-prompt buttons offering, for example, on-demand in-progress call summarization to quickly bring new agents or supervisors up to speed in call transfer and manager escalation scenarios.

- Optional Salesforce integration plugin.

- Real-time translation of call transcripts using Amazon Translate

- Ability to disable agent transcripts in the Call Transcript pane

- Automatic scaling to handle call volume changes

- Call recording and archiving

- A dynamically updated web user interface for supervisors and agents:

  - A call list page that displays a list of in-progress and completed calls, with call timestamps, metadata, alerts, and summary statistics like duration, sentiment trend, and detected categories

  - Call detail pages showing live turn-by-turn transcription of the caller/agent dialog, turn-by-turn sentiment, sentiment trend, voice tone, issues, categories, and alerts. The Agent Assist feature provides additional in-line messages to help agents respond to callers’ needs. The transcript summarization feature displays a short summary and additional insights from each call. The new agent assist bot widget enables the agent to retrieve knowledge base answers, interact with the bot, and generate on-demand call summaries.

- Integration with your contact center using one of the following:

  - Standards-based telephony integration with Session Recording Protocol (SIPREC)

  - Audiohook integration for Genesys Cloud contact centers

  - Talkdesk audio stream integration

  - Amazon Connect Contact Lens or Kinesis Video Streams (KVS)

  - Audio streamed from any Chrome browser-based agent softphone or meeting web app

- A built-in standalone demo mode that allows you to quickly install and try out LCA for yourself, without needing to integrate with your contact center telephony

- Easy-to-install resources with a single AWS CloudFormation template

This is still Day 1! We expect to keep adding many more exciting features over time, based on your feedback.

Deploy the CloudFormation stack

Start your LCA experience by using AWS CloudFormation to deploy the sample solution with the built-in demo mode enabled.

Prerequisite: By default LCA now uses Amazon Bedrock LLM models for its call summarization and agent assist features. Before proceeding, if you have not previously done so, you must request access to the following Amazon Bedrock models – see Amazon Bedrock Model Access:

- Amazon: All Titan Embeddings models (Titan Embeddings G1 – Text, and Titan Text Embeddings V2)

- Anthropic: All Claude 3 models (Claude 3 Sonnet and Claude 3 Haiku)

The demo mode downloads, builds, and installs a small virtual PBX server on an Amazon Elastic Compute Cloud (Amazon EC2) instance in your AWS account (using the free Asterisk project) so you can make test phone calls right away and see the solution in action. You can integrate it with your contact center later after evaluating the solution’s functionality for your unique use case.

- Use the appropriate Launch Stack button for the AWS Region in which you’ll use the solution. We expect to add support for additional Regions over time.

- For Stack name, use the default value, LCA.

- For Admin Email Address, use a valid email address. Your temporary password is emailed to this address during the deployment.

- For Authorized Account Email Domain, use the domain name part of your corporate email address to allow users with email addresses in the same domain to create their own new UI accounts, or leave blank to prevent users from directly creating their own accounts.

- For CallAudioSource, choose Demo Asterisk PBX Server.

- For Allowed CIDR Block for Demo Softphone, use the IP address of your local computer with a network mask of /32.

To find your computer’s IP address, you can use the website checkip.amazonaws.com.

Later, you can optionally install a softphone application on your computer, which you can register with LCA’s demo Asterisk server. This allows you to experiment with LCA using real two-way phone calls.

If that seems like too much hassle, don’t worry! Simply leave the default value for this parameter and elect not to register a softphone later. You will still be able to test the solution. When the demo Asterisk server doesn’t detect a registered softphone, it automatically simulates the agent side of the conversation using a built-in audio recording.

- For all other parameters, use the default values. Agent Assist and Transcript summarization features are now enabled by default.

If you want to customize the settings later, for example to apply PII redaction or custom vocabulary to improve accuracy, or to try different Agent Assist or Transcript Summarization configurations, you can update the stack for these parameters.
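If you would rather script the lookup for the Allowed CIDR Block for Demo Softphone value, the following minimal sketch shows one way to do it. It assumes that checkip.amazonaws.com returns your public IPv4 address as plain text. The function names are our own and are not part of LCA.

```python
import ipaddress
import urllib.request

CHECKIP_URL = "https://checkip.amazonaws.com"  # website named in the steps above


def to_host_cidr(ip_text: str) -> str:
    """Validate an IPv4 address string and append a /32 network mask."""
    # IPv4Address raises ipaddress.AddressValueError for malformed input.
    address = ipaddress.IPv4Address(ip_text.strip())
    return f"{address}/32"


def fetch_public_ip(url: str = CHECKIP_URL, timeout: float = 5.0) -> str:
    """Return the body of the check-IP response as text (assumed plain text)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("ascii")
```

Calling to_host_cidr(fetch_public_ip()) from the computer that will run the softphone should give a value you can paste into the parameter. If you are on a VPN, the address returned may be the VPN’s address rather than your own.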

The main CloudFormation stack uses nested stacks to create the following resources in your AWS account:

- S3 buckets to hold build artifacts and call recordings

- An EC2 instance (t4g.large) with the demo Asterisk server installed, with VPC, security group, Elastic IP address, and internet gateway

- An Amazon Chime Voice Connector, configured to stream audio to Amazon Kinesis Video Streams

- An AWS Lambda function to combine audio channel streams from Kinesis Video Streams and relay as a stereo stream to Amazon Transcribe, publish transcription segments in Kinesis Data Streams, and create and store stereo call recordings.

- An Amazon Kinesis data stream to relay call events and transcription segments to the enrichment processing function.

- An AWS Lambda transcript processing (enrichment) function that adds analytics and agent assist messages to the live call data.

- Agent assist resources including the QnABot on AWS solution stack, which interacts with Amazon OpenSearch Service and Amazon Bedrock.

- A Knowledge Base for Amazon Bedrock, with a web crawler configured to crawl two public websites for the demo

- AWS AppSync API, which provides a GraphQL endpoint to support queries and real-time updates

- Website components including S3 bucket, Amazon CloudFront distribution, and Amazon Cognito user pool

- Other miscellaneous supporting resources, including AWS Identity and Access Management (IAM) roles and policies (using least privilege best practices), Amazon Virtual Private Cloud (Amazon VPC) resources, Amazon EventBridge event rules, Amazon CloudWatch log groups, and an Amazon Simple Notification Service (Amazon SNS) topic for subscribing to alerts.

The stacks take about 45 minutes to deploy. The main stack status shows CREATE_COMPLETE when everything is deployed.
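You can also check the deployment from a script instead of the console. The following is a minimal sketch, assuming you pass in a CloudFormation client such as the one returned by boto3.client("cloudformation"). The helper names are hypothetical. The stack name LCA and the CREATE_COMPLETE status come from the steps above.

```python
from typing import Any, Dict


def stack_summary(cfn_client: Any, stack_name: str = "LCA") -> Dict[str, Any]:
    """Return the stack status and its outputs as a plain dictionary."""
    # describe_stacks returns a list; a stack name matches exactly one entry.
    stack = cfn_client.describe_stacks(StackName=stack_name)["Stacks"][0]
    outputs = {
        item["OutputKey"]: item["OutputValue"]
        for item in stack.get("Outputs", [])
    }
    return {"status": stack["StackStatus"], "outputs": outputs}


def is_deployed(summary: Dict[str, Any]) -> bool:
    """True once the main stack reports CREATE_COMPLETE."""
    return summary["status"] == "CREATE_COMPLETE"
```

When is_deployed returns True, the outputs dictionary should contain the same keys that the console shows on the Outputs tab, such as CloudfrontEndpoint and DemoPBXPhoneNumber.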

Set your password

After you deploy the stack, you need to open the LCA web user interface and set your password.

- On the AWS CloudFormation console, choose the main stack, LCA, and choose the Outputs tab.

- Open your web browser to the URL shown as CloudfrontEndpoint in the outputs.

You’re directed to the login page.

- Open the email you received, at the email address you provided, with the subject “Welcome to Live Call Analytics!”

This email contains a generated temporary password that you can use to log in and create your own password. Your username is your email address.

- Set a new password.

Your new password must have a length of at least eight characters, and contain uppercase and lowercase characters, plus numbers and special characters.

Follow the directions to verify your email address, or choose Skip to do it later.

Make a test phone call

Call the number shown as DemoPBXPhoneNumber in the AWS CloudFormation outputs for the main LCA stack.

You haven’t yet registered a softphone app, so the demo Asterisk server picks up the call and plays a recording. Listen to the recording, and answer the questions when prompted. Your call is streamed to the LCA application, and is recorded, transcribed, and analyzed. When you log in to the UI later, you can see a record of this call.

Optional: Install and register a softphone

If you want to use LCA with live two-person phone calls instead of the demo recording, you can register a softphone application with your new demo Asterisk server.

The following README has step-by-step instructions for downloading, installing, and registering a free (for non-commercial use) softphone on your local computer. The registration is successful only if Allowed CIDR Block for Demo Softphone correctly reflects your local machine’s IP address. If you got it wrong, or if your IP address has changed, you can choose the LCA stack in AWS CloudFormation, and choose Update to provide a new value for Allowed CIDR Block for Demo Softphone.

If you still can’t successfully register your softphone, and you are connected to a VPN, disconnect and update Allowed CIDR Block for Demo Softphone—corporate VPNs can restrict IP voice traffic.

When your softphone is registered, call the phone number again. Now, instead of playing the default recording, the demo Asterisk server causes your softphone to ring. Answer the call on the softphone, and have a two-way conversation with yourself! Better yet, ask a friend to call your Asterisk phone number, so you can simulate a contact center call by role playing as caller and agent.

Explore live call analysis and agent assist features

Live call analysis

Now, with LCA successfully installed in demo mode, you’re ready to explore the call analysis features.

- Open the LCA web UI using the URL shown as CloudfrontEndpoint in the main stack outputs.

We suggest bookmarking this URL—you’ll use it often!

- Make a test phone call to the demo Asterisk server (as you did earlier).

- If you registered a softphone, it rings on your local computer. Answer the call, or better, have someone else answer it, and use the softphone to play the agent role in the conversation.

- If you didn’t register a softphone, the Asterisk server demo audio plays the role of agent.

Your phone call almost immediately shows up at the top of the call list on the UI, with the status In progress.

The call has the following details:

- Call ID – A unique identifier for this telephone call

- Alerts – Shows the Alert count for the call. See Categories and Alerts section below.

- Agent – Shows the name of the agent, if available. See Setting the AgentId for a call.

- Initiation Timestamp – Shows the time the telephone call started

- Summary – (New!) Shows a short summary for completed calls – click to see it expanded.

- Caller Phone Number – Shows the number of the phone from which you made the call

- Status – Indicates that the call is in progress

- Caller Sentiment – The average caller sentiment

- Caller Sentiment Trend – The caller sentiment trend

- Duration – The elapsed time since the start of the call

- Categories – Shows the Transcribe Call Analytics categories detected during the call. See Categories and Alerts section below.

- Choose the call ID of your In progress call to open the live call detail page.

As you talk on the phone from which you made the call, your voice and the voice of the agent are transcribed in real time and displayed in the auto scrolling Call Transcript pane.

Each turn of the conversation (customer and agent) is annotated with a sentiment indicator. As the call continues, the sentiment for both caller and agent is aggregated over a rolling time window, so it’s easy to see if sentiment is trending in a positive or negative direction.

- End the call.

- Navigate back to the call list page by choosing Calls at the top of the page.

Your call is now displayed in the list with the status Done.

- To display call details for any call, choose the call ID to open the details page, or select the call to display the Calls list and Call Details pane on the same page. You can change the orientation to a side-by-side layout using the Call Details settings tool (gear icon).

You can make a few more phone calls to become familiar with how the application works. With the softphone installed, ask someone else to call your Asterisk demo server phone number: pick up their call on your softphone and talk with them while watching the turn-by-turn transcription update in real time. Observe the low latency. Assess the accuracy of transcriptions and sentiment annotation—you’ll likely find that it’s not perfect, but it’s close! Transcriptions are less accurate when you use technical or domain-specific jargon, but you can use custom vocabulary or custom language models to teach Amazon Transcribe new words and terms.
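To make the rolling sentiment window described earlier more concrete, here is a small illustrative sketch. It is not LCA’s implementation. The numeric score scale, the 60-second default window, and the class name are all assumptions chosen for the example, and turns are assumed to arrive in time order.

```python
from collections import deque
from typing import Deque, Optional, Tuple


class RollingSentiment:
    """Average sentiment scores over the most recent `window_seconds`."""

    def __init__(self, window_seconds: float = 60.0) -> None:
        self.window_seconds = window_seconds
        self._turns: Deque[Tuple[float, float]] = deque()  # (end_time, score)

    def add_turn(self, end_time: float, score: float) -> None:
        """Record one turn, then drop turns that fell out of the window."""
        self._turns.append((end_time, score))
        cutoff = end_time - self.window_seconds
        while self._turns and self._turns[0][0] < cutoff:
            self._turns.popleft()

    def average(self) -> Optional[float]:
        """Mean score of the turns still inside the window, or None if empty."""
        if not self._turns:
            return None
        return sum(score for _, score in self._turns) / len(self._turns)
```

Keeping one instance per speaker makes it possible to compare the caller’s recent average with an earlier one and see whether sentiment is trending up or down.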

Categories and Alerts

LCA supports real-time Categories and Alerts using the Amazon Transcribe Real-time Call Analytics service. Use the Amazon Transcribe Category Management console to create one or more categories with Category Type set to REAL_TIME (see Creating Categories). As categories are matched by Transcribe Real-time Call Analytics during the progress of a call, they are dynamically identified by LCA in the live transcript, call summary, and call list areas of the UI.

Categories with names that match the LCA CloudFormation stack parameter “Category Alert Regular Expression” are highlighted with a red color to show that they have been designated by LCA as Alerts. The Alert counter in the Call list page is used to identify, sort, and filter calls with alerts, allowing supervisors to quickly identify potentially problematic calls, even while they are still in progress.
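The following sketch illustrates how a regular expression can separate alert categories from ordinary ones. The pattern and category names in the usage note are hypothetical examples. Using a search rather than a full match is an assumption, so check the stack parameter description for the exact behavior.

```python
import re
from typing import Iterable, List


def alert_categories(matched: Iterable[str], alert_pattern: str) -> List[str]:
    """Return the matched category names that the pattern designates as alerts."""
    compiled = re.compile(alert_pattern)
    # Assumption: a category is an alert if the pattern is found anywhere in its name.
    return [name for name in matched if compiled.search(name)]
```

For example, with the hypothetical pattern ^Alert_, the categories Alert_Escalation and Alert_Cancel would be treated as alerts, while Greeting would not.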

LCA publishes a notification for each matched category to a topic in the Amazon Simple Notification Service. Subscribe to this topic to receive emails, SMS text messages, or to integrate category match notifications with your own applications. For more information, see Configure SNS Notifications on Call Categories.
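As a rough sketch of the subscription step, the following function requests an email subscription using an Amazon SNS client such as boto3.client("sns"). The function name is our own, and you need to supply the ARN of the topic created by your LCA stack, which is not shown here.

```python
from typing import Any


def subscribe_email(sns_client: Any, topic_arn: str, email: str) -> str:
    """Request an email subscription to the category notification topic."""
    response = sns_client.subscribe(
        TopicArn=topic_arn,
        Protocol="email",
        Endpoint=email,
        # Ask for the subscription ARN even while confirmation is pending.
        ReturnSubscriptionArn=True,
    )
    return response["SubscriptionArn"]
```

Amazon SNS sends a confirmation message to the address first, and notifications start only after the recipient confirms the subscription.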

Agent Assist

It’s time now to try the new LCA Agent Assist capability. By default, Agent Assist is enabled using QnABot on AWS. QnABot conveniently orchestrates Amazon Lex and Amazon Bedrock to provide the conversational intent matching, context capture, and knowledge base search capabilities used for Agent Assist. And its administrative UI, the Content Designer, is where you configure how messages are generated for the agent. Later you can explore alternative options for implementing Agent Assist, including integrating your own Amazon Lex bot, or implementing your own business logic and knowledge base integrations using a custom AWS Lambda function. But first let’s see what the default implementation can do by exploring the built-in demo.

- Open and review the Agent Assist demo script.

- Make a phone call to the demo Asterisk server and open the live call detail page (as you did earlier).

- Play the role of the caller from the demo script dialog.

- Ideally, enlist a friend to play the role of agent. Your agent friend uses the softphone (discussed earlier) to answer your call, and speak the agent lines from the script. If that’s not convenient, don’t worry! You can still experiment with Agent Assist by voicing only the caller portions of the dialog and allowing the demo recording to play the agent role.

Observe the messages labelled AGENT_ASSISTANT as they appear in the transcript in near real time. These messages help guide the agent to respond to the caller.

Observe the features illustrated in each section of the demo script:

- Providing a suggested answer to the caller’s question, based on information provided earlier (rewards-card)

- Answering a self-contained caller question by searching pre-configured FAQs.

- Finding information relevant to a caller’s question by searching unstructured documents such as web pages, in the absence of a predefined FAQ.

Think about the concepts at work in each of these examples. Consider their relative importance, and how to apply them to your own contact center scenarios.

You’ll notice that agent assist messages are displayed only when the caller says their lines according to the script without too much ad-libbing. Additional configuration is required to enable our agent assist capabilities to operate effectively in a wider variety of situations. We need a larger knowledge base, a larger set of FAQs, and a larger set of caller intents with sample utterances. You can help make this more robust!

See the Agent Assist README for much more detail on how to customize and extend the capabilities. Experiment with the configuration. Make it provide useful suggestions for a variety of situations that reflect your own use cases. Can you get it to work, at least some of the time, for unscripted calls?

You can also interact directly with the Agent Assist bot using the interactive bot widget in the Call Detail page. Use this to generate an on-demand summary of the current call at any time, to ask the bot questions, or to perform actions as needed, during or after the call.

We plan to provide additional capabilities for the LCA Agent Assistant in future releases, leveraging new features from Amazon Transcribe, and generative AI capabilities enabled by the latest Large Language Models on the Amazon Bedrock service. Let us know what other features are important to you.

Live translation

LCA supports real-time translation of call transcripts using Amazon Translate, enabling agents or supervisors to see transcripts in a language of their choice. Use Enable Translation at the top of the Call Transcript pane, and choose from the list of available languages supported by Amazon Translate. Your language choice is now the default when you enable translation again. Translated text in the chosen language appears below each completed transcription segment in real time for in-progress calls and for the entire transcript of completed calls. When you enable translation during a live call the subsequent transcript segments are translated in real time, and when the call ends, the whole transcript is automatically translated so that you can review the call in both spoken and translated languages without leaving the page.
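The LCA web application handles translation for you, but a short sketch may help if you want to experiment with Amazon Translate directly. It assumes a client such as boto3.client("translate"). The segment dictionary keys text and is_partial are hypothetical and do not reflect LCA’s data model.

```python
from typing import Any, Dict, Iterable, List


def translate_completed_segments(
    translate_client: Any,
    segments: Iterable[Dict[str, Any]],
    target_language: str,
    source_language: str = "auto",
) -> List[str]:
    """Translate only completed segments; partial segments are skipped."""
    translations = []
    for segment in segments:
        if segment.get("is_partial"):
            continue  # wait until the segment is final before translating
        response = translate_client.translate_text(
            Text=segment["text"],
            SourceLanguageCode=source_language,  # "auto" asks the service to detect it
            TargetLanguageCode=target_language,
        )
        translations.append(response["TranslatedText"])
    return translations
```

Skipping partial segments mirrors the behavior described above, where translated text appears below each completed transcription segment.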

Transcript summaries

When the call has ended, LCA automatically runs a set of large language model (LLM) prompts on Amazon Bedrock to summarize the call transcript and extract insights. You can customize these prompts to make the summaries reflect the insights and style you need.

By default we use Anthropic’s Claude Instant model on Amazon Bedrock. When you deploy LCA using the BEDROCK option for transcript summaries, everything is configured automatically. You have probably already seen the transcript summaries generated from your earlier test calls. Summaries typically appear in the Call Detail page within a few seconds after the end of the call.

See Transcript Summarization for much more detail on how to extend and customize this feature. Modify our default prompts and/or add additional prompts to generate more insights. Please experiment and let us know what you think!

Processing flow overview

How did LCA transcribe and analyze your test phone calls? Let’s take a quick look at how it works.

The following diagram shows the main architectural components and how they fit together at a high level.

The demo Asterisk server is configured to use Voice Connector, which provides the phone number and SIP trunking needed to route inbound and outbound calls. When you configure LCA to integrate with your contact center using the Chime Voice Connector (SIPREC) option, instead of the demo Asterisk server, Voice Connector is configured to integrate instead with your existing contact center using SIP-based media recording (SIPREC) or network-based recording (NBR). In both cases, Voice Connector streams audio to Kinesis Video Streams using two streams per call, one for the caller and one for the agent. (LCA also supports additional call input sources, Genesys Cloud AudioHook, Talkdesk, Amazon Connect, and any audio streamed from your microphone and an app in your Chrome browser such as an agent softphone or meeting app. These use different architectures for ingestion – see the section titled “Integrate with your contact center” later in this post.)

When a new caller or agent Kinesis Video stream is initiated, an event is fired using EventBridge. This event triggers the Call Transcriber Lambda function. When both the caller and agent streams have been established, your custom call initialization Lambda hook function, if specified, is invoked for you (see Call Initialization LambdaHookFunction). Then the Call Transcriber function starts consuming real-time audio fragments from both input streams and combines them to create a new stereo audio stream. The stereo audio is streamed to an Amazon Transcribe Real-time Call Analytics or standard Amazon Transcribe session (depending on the stack parameter value), and the transcription results are written in real time to Kinesis Data Streams.
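To illustrate what combining two mono streams into one stereo stream involves, here is a minimal sketch that interleaves two buffers of raw PCM samples. It assumes 16-bit samples at the same sample rate in both buffers. The function is our own illustration and is not taken from the Call Transcriber code, and which speaker goes on which channel is left to the caller of the function.

```python
def interleave_stereo(left: bytes, right: bytes, sample_width: int = 2) -> bytes:
    """Interleave two mono PCM buffers into one stereo buffer.

    The shorter buffer is padded with silence (zero bytes) so that both
    channels cover the same number of samples.
    """
    if len(left) % sample_width or len(right) % sample_width:
        raise ValueError("buffers must contain whole samples")
    length = max(len(left), len(right))
    left = left.ljust(length, b"\x00")
    right = right.ljust(length, b"\x00")
    stereo = bytearray()
    for offset in range(0, length, sample_width):
        stereo += left[offset:offset + sample_width]   # channel 0 sample
        stereo += right[offset:offset + sample_width]  # channel 1 sample
    return bytes(stereo)
```

Keeping each speaker on a separate channel lets the transcription service attribute every segment to the caller or the agent.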

Each call processing session runs until the call ends. Any session that lasts longer than the maximum duration of an AWS Lambda function invocation (15 minutes) is automatically and seamlessly transitioned to a new ‘chained’ invocation of the same function, while maintaining a continuous transcription session with Amazon Transcribe. This function chaining repeats as needed until the call ends. At the end of the call the function creates a stereo recording file in Amazon S3.
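The chaining idea can be sketched as follows. This is an illustration of the general pattern, not LCA’s code. The safety margin, the chained flag in the payload, and the process_next_chunk callable are hypothetical, and the sketch does not show how the Amazon Transcribe session is kept continuous across invocations.

```python
import json
from typing import Any, Callable, Dict

SAFETY_MARGIN_MS = 30_000  # hypothetical buffer reserved for the handoff


def run_with_chaining(
    event: Dict[str, Any],
    context: Any,
    lambda_client: Any,
    process_next_chunk: Callable[[Dict[str, Any]], bool],
) -> str:
    """Process audio until the call ends or the time budget runs low.

    `process_next_chunk(event)` is a hypothetical callable that returns
    True while the call is still active.
    """
    while context.get_remaining_time_in_millis() > SAFETY_MARGIN_MS:
        if not process_next_chunk(event):
            return "call_ended"
    # Time is nearly up: asynchronously invoke this same function to continue.
    lambda_client.invoke(
        FunctionName=context.function_name,
        InvocationType="Event",
        Payload=json.dumps({**event, "chained": True}).encode("utf-8"),
    )
    return "chained"
```

The context methods used here are the ones AWS Lambda provides to Python handlers, and lambda_client is assumed to be boto3.client("lambda").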

Another Lambda function, the Call Event Processor, fed by Kinesis Data Streams, processes and enriches call metadata and transcription segments. The Call Event Processor integrates with the (optional) Agent Assist services. By default, LCA agent assist is powered by Amazon Lex and Amazon Bedrock, using the open source QnABot on AWS solution. Alternatively, you can opt to provide your own agent assist bot or custom agent assist logic in the form of an AWS Lambda function. The Call Event Processor also invokes the Transcript Summarization Lambda function when the call ends, to generate a summary of the call from the full transcript.

The Call Event Processor function interfaces with AWS AppSync to persist changes (mutations) in DynamoDB and to send real-time updates to logged in web clients.

The LCA web UI assets are hosted on Amazon S3 and served via CloudFront. Authentication is provided by Amazon Cognito. In demo mode, user identities are configured in an Amazon Cognito user pool. In a production setting, you would likely configure Amazon Cognito to integrate with your existing identity provider (IdP) so authorized users can log in with their corporate credentials.

When the user is authenticated, the web application establishes a secure GraphQL connection to the AWS AppSync API, and subscribes to receive real-time events such as new calls and call status changes for the calls list page, and new or updated transcription segments and computed analytics for the call details page. When translation is enabled, the web application also interacts securely with Amazon Translate to translate the call transcription into the selected language.

The entire processing flow, from ingested speech to live webpage updates, is event driven, and so the end-to-end latency is small—typically just a few seconds.

Monitoring and troubleshooting

AWS CloudFormation reports deployment failures and causes on the relevant stack Events tab. See Troubleshooting CloudFormation for help with common deployment problems. Look out for deployment failures caused by limit exceeded errors; the LCA stacks create resources that are subject to default account and Region Service Quotas.

Amazon Transcribe has a default limit of 25 concurrent transcription streams, which limits LCA to 25 concurrent calls. Request an increase for the number of concurrent HTTP/2 streams for streaming transcription if you need to handle a larger number of concurrent calls.

LCA provides runtime monitoring and logs for each component using CloudWatch:

- Call processing Lambda function – On the Lambda console, open the LCA-AISTACK-CallTranscriber function. Choose the Monitor tab to see function metrics. Choose View logs in CloudWatch to inspect function logs.

- Call Event Processor Lambda function – On the Lambda console, open the LCA-AISTACK-CallEventProcessor function. Choose the Monitor tab to see function metrics. Choose View logs in CloudWatch to inspect function logs.

- AWS AppSync API – On the AWS AppSync console, open the CallAnalytics-LCA API. Choose Monitoring in the navigation pane to see API metrics. Choose View logs in CloudWatch to inspect AppSync API logs.

- When using QnABot on AWS with Amazon Lex and Amazon Bedrock for Agent Assist, refer to the Agent Assist README and the QnABot solution implementation guide for additional information.

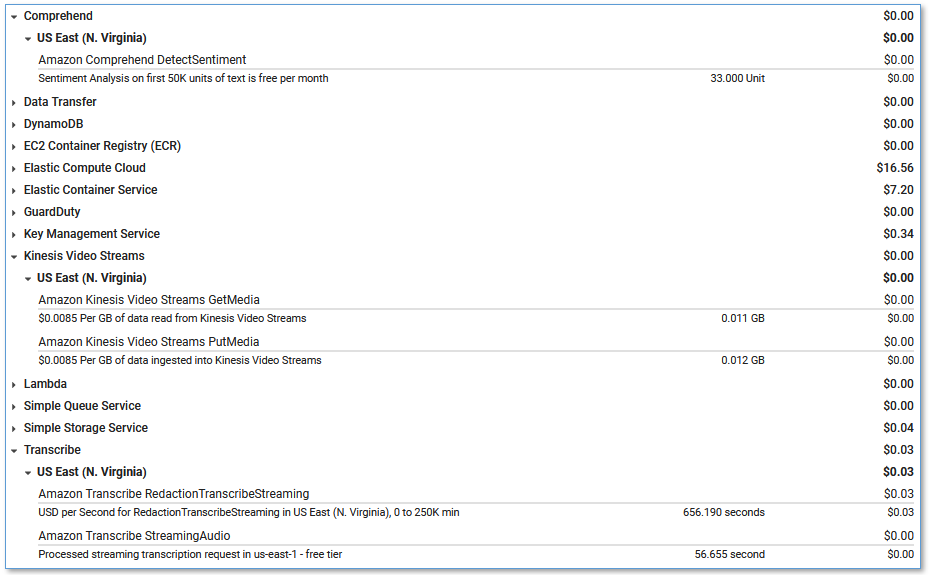

Cost assessment

With the demo Asterisk server enabled, you incur a small hourly cost of about $0.07 for the t4g.large EC2 instance – see Amazon EC2 pricing.

When Agent Assist is enabled using QnABot on AWS, there are additional hourly charges for the services used by QnABot. For details, see QnABot on AWS solution costs. By default, LCA is enabled using Knowledge Bases for Amazon Bedrock, which you use for LCA and potentially other use cases. For more details, see Amazon Bedrock Pricing. Alternatively, you can choose to use QnABot with an Amazon Bedrock LLM, without a knowledge base.

When Transcript Summarization is enabled using the BEDROCK (default, recommended), ANTHROPIC, or LAMBDA options for summarization, you are responsible for any LLM usage-related charges. If you enabled Transcript Summarization using SAGEMAKER, you incur additional charges for the SageMaker endpoint, depending on the value you entered for Initial Instance Count for Summarization SageMaker Endpoint (default is a provisioned 1-node ml.m5.xlarge endpoint). If you entered ‘0’ to select SageMaker Serverless Inference, then you are charged only for usage. See Amazon SageMaker pricing for relevant costs and information on Free Tier eligibility.

The remaining solution costs are based entirely on usage.

The usage costs add up to about $0.17 for a 5-minute call, although this can vary based on options selected, whether you use Transcribe analytics or standard mode, whether you use the LLM options for Agent Assist and call summarization, and total usage because usage affects Free Tier eligibility and volume tiered pricing for many services. For more information about the services that incur usage costs, see the following:

- AWS AppSync pricing

- Amazon Translate pricing

- Amazon Cognito Pricing

- Amazon Comprehend Pricing

- Amazon DynamoDB pricing

- Amazon EventBridge pricing

- Amazon Kinesis Video Streams pricing

- AWS Lambda Pricing

- Amazon S3 pricing

- Amazon Transcribe Pricing

- Amazon SageMaker pricing for Serverless Inference (Call summarization – optional)

- Amazon Chime Voice Connector pricing (streaming)

- Amazon Bedrock pricing (Call summarization and Agent Assist – default, optional)

- QnABot on AWS costs (Agent Assist – optional)

To explore LCA costs for yourself, use AWS Cost Explorer or choose Bill Details on the AWS Billing Dashboard to see your month-to-date spend by service.
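For a quick back-of-the-envelope estimate before you have real billing data, the two figures quoted in this section can be combined as in the following sketch. Scaling the per-call figure linearly with call duration is our simplifying assumption. The estimate ignores Free Tier, volume-tiered pricing, and the hourly QnABot and SageMaker charges.

```python
USAGE_COST_PER_5_MIN_CALL = 0.17  # USD, approximate usage figure quoted above
DEMO_EC2_COST_PER_HOUR = 0.07     # USD, t4g.large demo Asterisk server


def estimate_monthly_cost(
    calls_per_month: int,
    avg_call_minutes: float,
    demo_server_hours: float = 0.0,
) -> float:
    """Rough monthly estimate in USD, rounded to cents."""
    # Assumption: usage cost grows in proportion to call length.
    usage = calls_per_month * (avg_call_minutes / 5.0) * USAGE_COST_PER_5_MIN_CALL
    server = demo_server_hours * DEMO_EC2_COST_PER_HOUR
    return round(usage + server, 2)
```

For example, 1,000 five-minute calls in a month come to roughly $170 of usage under these assumptions, before any demo server hours are added.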

Integrate with your contact center

To deploy LCA to analyze real calls to your contact center using AWS CloudFormation, update the existing LCA demo stack, changing the CallAudioSource parameter.

Alternatively, delete the existing LCA demo stack, and deploy a new LCA stack using the appropriate option for the CallAudioSource parameter.

You could also deploy a new LCA stack in a different AWS account or Region.
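If you prefer to script the stack update, the following sketch shows one possible approach using a CloudFormation client such as boto3.client("cloudformation"). The helper names are our own, and the Capabilities list is an assumption that you may need to adjust for your deployment.

```python
from typing import Any, Dict, Iterable, List


def build_update_parameters(
    current_keys: Iterable[str], overrides: Dict[str, str]
) -> List[Dict[str, Any]]:
    """Keep every existing parameter value except the ones being overridden."""
    parameters: List[Dict[str, Any]] = []
    for key in current_keys:
        if key in overrides:
            parameters.append({"ParameterKey": key, "ParameterValue": overrides[key]})
        else:
            parameters.append({"ParameterKey": key, "UsePreviousValue": True})
    return parameters


def update_call_audio_source(cfn_client: Any, value: str, stack_name: str = "LCA") -> Any:
    """Update only the CallAudioSource parameter of an existing stack."""
    stack = cfn_client.describe_stacks(StackName=stack_name)["Stacks"][0]
    keys = [p["ParameterKey"] for p in stack.get("Parameters", [])]
    return cfn_client.update_stack(
        StackName=stack_name,
        UsePreviousTemplate=True,
        Parameters=build_update_parameters(keys, {"CallAudioSource": value}),
        # Assumed capabilities for a template with IAM resources and nested stacks.
        Capabilities=["CAPABILITY_NAMED_IAM", "CAPABILITY_AUTO_EXPAND"],
    )
```

The value you pass should be one of the CallAudioSource options listed in the following sections, for example Genesys Cloud Audiohook Web Socket.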

Genesys Cloud

Use these parameters to configure LCA for Genesys Cloud contact center integration:

- For CallAudioSource, choose Genesys Cloud Audiohook Web Socket.

- For Allowed CIDR Block for Demo Softphone, leave the default value.

- For Allowed CIDR List for Siprec Integration, leave the default value.

- For Amazon Connect instance ARN (existing), leave the default value.

When you deploy LCA, all the required resources for Genesys Audiohook web socket integration are created for you. Please refer to the LCA Genesys Cloud Audiohook README for architecture and detailed instructions.

Talkdesk Conversation Orchestrator (sample)

Use these parameters to configure LCA for Talkdesk integration:

- For CallAudioSource, choose Talkdesk Voice Stream Web Socket.

- For Allowed CIDR Block for Demo Softphone, leave the default value.

- For Allowed CIDR List for Siprec Integration, leave the default value.

- For Amazon Connect instance ARN (existing), leave the default value.

- For TalkdeskAccountId, provide the unique account identifier assigned to your Talkdesk account.

When you deploy LCA, all the required resources for Talkdesk web socket integration are created for you. Please refer to the LCA Talkdesk Conversation Orchestrator Sample Service README for architecture and detailed instructions.

Chime Voice Connector (SIPREC)

Use these parameters to configure LCA for standard SIPREC based contact center integration:

- For CallAudioSource, choose Chime Voice Connector (SIPREC).

- For Call Audio Processor, optionally choose Chime Call Analytics, and then enable Chime Voice Tone Analysis. Known issue: Enabling Chime Call Analytics currently disables PCA integration. This will be addressed in a later release.

- For Allowed CIDR Block for Demo Softphone, leave the default value.

- For Allowed CIDR List for SIPREC Integration, use the CIDR blocks of your SIPREC source hosts, such as your SBC servers. Use commas to separate CIDR blocks if you enter more than one.

- For Lambda Hook Function ARN for SIPREC Call Initialization (existing), leave the default value, or optionally provide the ARN of your own Lambda function to selectively choose calls to process, toggle agent/caller streams, assign AgentId, and/or modify values for CallId and displayed phone numbers. See Call Initialization LambdaHookFunction.

- For Amazon Connect instance ARN (existing), leave the default value.
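Because the SIPREC CIDR list is entered as a single comma-separated string, it can be worth checking the value before you paste it into the stack parameter. The sketch below uses only the Python standard library; the function name is hypothetical and the sample addresses come from documentation-reserved ranges, not from any real SBC.

```python
import ipaddress


def parse_cidr_list(value: str) -> list[str]:
    """Split a comma-separated CIDR string and normalize each block.

    Raises ValueError if any entry is not a valid CIDR block.
    """
    blocks = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue  # tolerate stray commas and whitespace
        # strict=True rejects blocks with host bits set, such as 10.0.0.5/24
        network = ipaddress.ip_network(item, strict=True)
        blocks.append(str(network))
    return blocks


# Example SBC addresses from documentation-reserved ranges.
print(",".join(parse_cidr_list("203.0.113.10/32, 198.51.100.0/24")))
```

The printed string has no spaces around the commas, which is a safe form to paste into a parameter field.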

See Setting AgentId for information on providing or updating the AgentId attribute for a call.

See Start call processing for an in-progress call for information on options to delay starting the transcription of a call. You might want to do this if your contact center SBC initiates a SIPREC session at the start of each call, but you don’t want to start transcribing the call until later, when the caller and agent are connected.

When you deploy LCA, a Voice Connector is created for you. Use the Voice Connector documentation as guidance to configure this Voice Connector and your PBX/SBC for SIP-based media recording (SIPREC) or network-based recording (NBR). The Voice Connector Resources page provides some vendor-specific example configuration guides, including:

- SIPREC Configuration Guide: Cisco Unified Communications Manager (CUCM) and Cisco Unified Border Element (CUBE)

- SIPREC Configuration Guide: Avaya Aura Communication Manager and Session Manager with Sonus SBC 521

The LCA GitHub repository has additional vendor specific notes that you may find helpful; see SIPREC.md.

Amazon Connect

Use these parameters to configure LCA for Amazon Connect Contact Lens integration:

- For CallAudioSource, choose Amazon Connect Contact Lens.

- For Allowed CIDR Block for Demo Softphone, leave the default value.

- For Allowed CIDR List for Siprec Integration, leave the default value.

- For Amazon Connect instance ARN (existing), enter the Connect instance Amazon Resource Name (ARN) of an existing Amazon Connect instance.

When you deploy LCA, integration with the specified Connect instance is configured automatically. Please refer to the LCA Connect Integration README for architecture, prerequisites, and detailed instructions.

LCA also supports direct voice integration with Amazon Connect using Kinesis Video Streams. Please refer to the LCA Amazon Connect Kinesis Video Streams Audio Source README for architecture, prerequisites, and detailed instructions.

Stream calls from your microphone and any browser-based audio application

Use LCA to stream audio from your microphone and any browser-based softphone, meeting app, or other audio source playing in your Chrome browser, using the convenient Stream Audio tab that is built into the LCA UI.

- Open any audio source in a browser tab.

For example, this could be a softphone on your contact center agent application, a meeting web app, or for demo purposes, you can simply play a local audio recording or a YouTube video in your browser to emulate a caller. If you just want to try it, open the following YouTube video in a new tab.

- In the LCA App UI, choose Stream Audio to open the Stream Audio tab.

- For Call ID, leave the default auto-generated ID, or enter a different unique call identifier, possibly copy/pasted from your contact center agent application.

- For Agent ID, enter a name for yourself (applied to audio from your microphone).

- For Customer Phone, enter the caller ID phone number (or caller name) for LCA to display as ‘Caller phone number’ for the call.

- For System Phone, enter the system phone number (or name) for LCA to display as ‘System phone number’ for the call.

- For Microphone Role, leave the default (AGENT) for normal call use cases where you are the agent. You can swap the role assignments to have your voice (microphone) assigned the CALLER role, and the browser app audio assigned the AGENT role – for example to make a demo call where you have the agent’s voice recording playing in a browser tab.

- Choose Start Streaming.

- Choose the browser tab you opened earlier, and choose Allow to share.

- Access the call from the LCA UI Calls tab, where you will see it “In Progress”.

Customize your deployment

Use the following CloudFormation template parameters when creating or updating your stack to customize your LCA deployment:

- To use your own S3 bucket for call recordings, use Call Audio Recordings Bucket Name and Audio File Prefix.

- To enable the Amazon Transcribe Real-time Analytics API, set Transcribe API mode to analytics. This enables features including call categories, alerts, detected issues, and integration with your existing Post Call Analytics (PCA) deployment (see below).

- To redact PII from the transcriptions, set IsContentRedactionEnabled to true. For more information, see Redacting or identifying PII in a real-time stream.

- To improve transcription accuracy for technical and domain-specific acronyms and jargon, set Transcription Custom Vocabulary Name to the name of a custom vocabulary that you already created in Amazon Transcribe and/or set Transcription Custom Language Model Name to the name of a previously created custom language model. For more information, see Improving Transcription Accuracy.

- To transcribe calls in a supported language other than US English, choose the desired value for Language for Transcription. You can also have Transcribe identify the primary language, or even multiple languages used during the call, by setting Language for Transcription to identify-language or identify-multiple-languages and providing a list of languages with an optional preferred language. See Language identification with streaming transcriptions.

- To customize transcript processing, optionally set Lambda Hook Function ARN for Custom Transcript Segment Processing to the ARN of your own Lambda function. For more information, see Using a Lambda function to optionally provide custom logic for transcript processing.

- To use your own custom bot or custom knowledge bases for Agent Assist, or to disable the Agent Assist feature, choose from the available Enable Agent Assist options.

- To customize the Agent Assist capabilities based on the QnABot on AWS solution, Amazon Lex, Amazon Bedrock, and (optionally) large language model integration, see the Agent Assist README.

- To customize Transcript Summarization by configuring LCA to call your own Lambda function, see Transcript Summarization LAMBDA option.

- To customize Transcript Summarization by modifying the default prompts or adding new ones, see Transcript Summarization.

- To change the retention period, set Record Expiration In Days to the desired value. All call data is permanently deleted from the LCA DynamoDB storage after this period. Changes to this setting apply only to new calls received after the update.

- To selectively choose which calls to process, toggle agent/caller streams, assign AgentId, and/or modify values for CallId and displayed phone numbers, set Lambda Hook Function ARN for SIPREC Call Initialization (existing) to the ARN of a Lambda function that implements your custom logic. See LambdaHookFunction.
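To make the retention setting in the list above concrete, the sketch below computes the moment a call record would become eligible for deletion. It assumes expiry is stored as a Unix epoch timestamp stamped on each record when it is written, which is how DynamoDB Time to Live attributes work; this post does not confirm that LCA implements retention this way, and the function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def expiration_epoch(call_start: datetime, retention_days: int) -> int:
    """Return the Unix epoch second after which a record would expire.

    Assumption: expiry is stamped per record at write time, TTL-style.
    """
    if call_start.tzinfo is None:
        raise ValueError("call_start must be timezone-aware")
    return int((call_start + timedelta(days=retention_days)).timestamp())


# Hypothetical call received on 1 August 2024 with a 90-day retention period.
start = datetime(2024, 8, 1, tzinfo=timezone.utc)
expires = expiration_epoch(start, retention_days=90)
print(datetime.fromtimestamp(expires, tz=timezone.utc).isoformat())
```

Under that assumption, a value stamped at write time would be consistent with changes to Record Expiration In Days applying only to calls received after the update.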

LCA is an open-source project. You can fork the LCA GitHub repository, enhance the code, and send us pull requests so we can incorporate and share your improvements!

Update an existing LCA stack

Easily update your existing LCA stack to the latest release – see Update an existing stack.

Clean up

When you’re finished experimenting with this solution, clean up your resources by opening the AWS CloudFormation console and deleting the LCA stacks that you deployed. This deletes resources that were created by deploying the solution. The recording S3 buckets, DynamoDB table, and CloudWatch Log groups are retained after the stack is deleted to avoid deleting your data.
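The same cleanup can be scripted. The following sketch takes a CloudFormation client (for example, `boto3.client("cloudformation")`) and a list of stack names; the function name and the stack names you pass are hypothetical. It deletes each stack in turn and waits for CloudFormation to report completion.

```python
from typing import Any


def delete_stacks(cfn: Any, stack_names: list[str]) -> None:
    """Delete each stack and block until CloudFormation reports completion.

    `cfn` is a CloudFormation client, e.g. boto3.client("cloudformation").
    """
    waiter = cfn.get_waiter("stack_delete_complete")
    for name in stack_names:
        cfn.delete_stack(StackName=name)
        waiter.wait(StackName=name)  # raises if the deletion fails or times out
```

The retained S3 buckets, DynamoDB table, and CloudWatch Log groups are not removed by this call; delete them separately once you no longer need the data.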

Post Call Analytics: Companion solution

Our companion solution, Post Call Analytics (PCA), offers additional insights and analytics capabilities by using the Amazon Transcribe Call Analytics batch API to detect common issues, interruptions, silences, speaker loudness, call categories, and more. Unlike LCA, which transcribes and analyzes streaming audio in real time, PCA analyzes your calls after the call has ended. The Amazon Transcribe Real-time Call Analytics service provides post-call analytics output from your streaming sessions just a few minutes after the call has ended. LCA can send this post-call analytics data to PCA to provide analytics visualizations for your streaming sessions without needing to transcribe the audio a second time. Configure LCA to integrate with an existing PCA deployment using the LCA CloudFormation template parameters labeled Post Call Analytics (PCA) Integration. PCA also integrates with Amazon QuickSight to enable powerful analytics across all your calls. Use these solutions together to get the best of both worlds. For more information, see Post call analytics for your contact center with Amazon language AI services and watch the new video demo showing the latest Amazon Q in QuickSight feature using call analytics data from LCA and PCA.

Conclusion

The Live Call Analytics (LCA) with agent assist sample solution offers a scalable, cost-effective approach to live call analysis, with features that help supervisors and agents focus on your callers’ experience. It uses Amazon ML services like Amazon Transcribe, Amazon Comprehend, Amazon Lex, and Amazon Bedrock to transcribe and extract real-time insights from your contact center audio, and to provide real-time agent assistance using trusted documents from your own knowledge base.

The sample LCA application is provided as open source—use it as a starting point for your own solution, and help us make it better by contributing back fixes and features via GitHub pull requests. Browse to the LCA GitHub repository to explore the code, choose Watch to be notified of new releases, and check the README for the latest documentation updates.

For expert assistance, AWS Professional Services and other AWS Partners are here to help.

We’d love to hear from you. Let us know what you think in the comments section, or use the issues forum in the LCA GitHub repository.

About the Authors

Bob Strahan is a Principal Solutions Architect in the AWS Language AI Services team.

Oliver Atoa is a Principal Solutions Architect in the AWS Language AI Services team.

Sagar Khasnis is a Senior Solutions Architect focused on building applications for Productivity Applications. He is passionate about building innovative solutions using AWS services to help customers achieve their business objectives. In his free time, you can find him reading biographies, hiking, working out at a fitness studio, and geeking out on his personal rig at home.

Court Schuett is a Chime Specialist SA with a background in telephony and now likes to build things that build things.

Christopher Lott is a Senior Solutions Architect in the AWS AI Language Services team. He has 20 years of enterprise software development experience. Chris lives in Sacramento, California and enjoys gardening, aerospace, and traveling the world.

Babu Srinivasan is a Sr. Specialist SA – Language AI services in the World Wide Specialist organization at AWS, with over 24 years of experience in IT and the last 6 years focused on the AWS Cloud. He is passionate about AI/ML. Outside of work, he enjoys woodworking and entertains friends and family (sometimes strangers) with sleight of hand card magic.
