Category: Auto Scaling

EC2 Spot Instance Updates – Auto Scaling and CloudFormation Integration, New Sample App

I have three exciting news items for Amazon EC2 users:

- You can now use Auto Scaling to place bids on the EC2 Spot Market on your behalf.

- Similarly, you can now place Spot Market bids from within an AWS CloudFormation template.

- We have a new article to show you how to track Spot instance activity with notifications to an Amazon Simple Notification Service (SNS) topic.

Huh?

Unless you are intimately familiar with the entire AWS product lineup, you may have found the preceding list of items just a bit mysterious. Before I get to the heart of today’s announcement, let’s review the fundamentals of each product:

You probably know all about Amazon EC2. You can launch servers on an as-needed basis and pay for only the resources that you consume. You can pay the on-demand prices, or you can bid for unused EC2 capacity on the Spot Market, taking advantage of prices that vary in response to changes in supply and demand.

AWS CloudFormation allows you to create and manage a collection of AWS resources, all specified by a single declarative template expressed in JSON format.

The Simple Notification Service lets you create any number of message topics and publish messages to them.

Together, these features should make it much easier to use Spot Instances, which in turn can help you run EC2 instances more cost-effectively.

With that out of the way, let’s dig in!

Spot + Auto Scaling

You can now set up Auto Scaling to make Spot bids on your behalf. As you may know, you must create an Auto Scaling Group and associate a launch configuration with it in order to make use of Auto Scaling. The Auto Scaling group lists the desired Availability Zones, the minimum and maximum size of the group, health checks, and other properties. The launch configuration includes a number of important parameters including the EC2 AMI to launch, the instance type to use, user data to pass to the newly launched instances, and so forth.

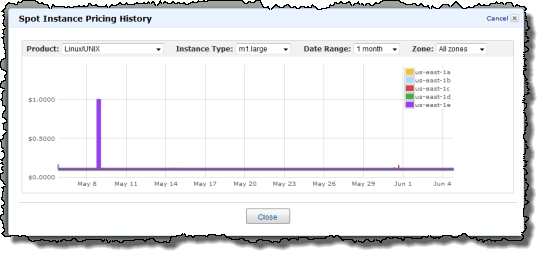

You can now include a bid price in your launch configuration if you want to use Spot Instances. Auto Scaling will use that price to continually place bids in an effort to keep the Auto Scaling group at the desired size. You can use this to soak up background capacity at a price point that is economically viable for your application. For example, let’s say that you can make good use of up to 10 m1.large instances. You consult the Spot Instance Pricing History in the AWS Management Console and decide that a bid of $0.12 (twelve cents) per hour will work well for you.

Your Auto Scaling group would have a minimum and a maximum size of 10, and the launch configuration would set the bid price at $0.12. When sufficient capacity is available at or below your bid price, your group will expand up to the maximum size, and you’ll pay the market price (which could be lower than your bid). The group will contract if other demands on the capacity cause the market price to exceed your bid price. You can alter the bid price at any time by creating a new launch configuration and attaching it to the Auto Scaling group. Of course, if you want to use On-Demand instances instead, you can simply omit the bid price from your launch configuration.
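To make the bid behavior concrete, here is a minimal Ruby sketch of the 10-instance, $0.12 example. It is not how Auto Scaling works internally: it assumes a deliberately simplified model in which the whole group runs whenever the hourly market price is at or below the bid and drops to zero otherwise, and the hourly prices are made up. Prices are kept in integer cents to avoid floating-point rounding.

```ruby
# Simplified model of a Spot-backed Auto Scaling group (illustration only).
# Prices are in US cents per instance-hour to avoid floating-point rounding.
SpotHour = Struct.new(:running, :cost_cents)

def simulate_spot_group(hourly_prices_cents, bid_cents:, size:)
  hourly_prices_cents.map do |price|
    if price <= bid_cents
      # Capacity available at or below the bid: full group, billed at the market price.
      SpotHour.new(size, price * size)
    else
      # Market price above the bid: in this model the group contracts to zero.
      SpotHour.new(0, 0)
    end
  end
end

# Hypothetical hourly m1.large market prices, in cents.
prices = [10, 11, 15, 9]
hours = simulate_spot_group(prices, bid_cents: 12, size: 10)

hours.each_with_index do |h, i|
  puts "hour #{i}: #{h.running} instances, #{h.cost_cents} cents"
end
puts "total: #{hours.sum(&:cost_cents)} cents"
```

With these made-up prices the group is outbid in hour 2 and, in the other hours, pays the market price rather than the bid, for a total of 300 cents.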

For even more flexibility, you can use Auto Scaling’s scaling policies feature to change the minimum and maximum group sizes at a predetermined future time or dynamically based on your application’s requirements. You could increase your group size at times when your workload is highest or when Spot prices are historically low (though historical patterns are subject to change, of course).

Spot + CloudFormation

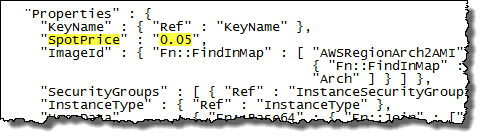

You can now create CloudFormation templates that include a bid for Spot capacity as part of an Auto Scaling group (as described above).

The template can describe the construction of an entire application stack. AWS resources in the stack will be created in dependency-based order. The spot bid will be activated after the Auto Scaling group has been created. Here’s an example taken directly from a template’s definition of an Auto Scaling group:

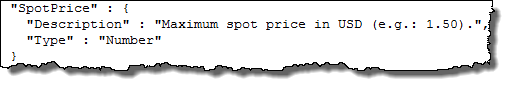

You can also specify the bid price as a parameter to the template:

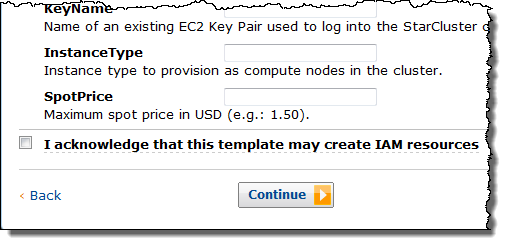

In this case, the AWS Management Console will prompt for the price (and the other parameters specified in the template) when you use the template to create a stack.
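As a rough text equivalent of the screenshots above, the following Ruby sketch builds a small template that declares the bid as a `SpotPrice` parameter and feeds it, via `Ref`, into the `SpotPrice` property of an `AWS::AutoScaling::LaunchConfiguration`. The logical resource names, the AMI ID, and the group sizes are placeholders chosen for illustration, not values from the templates discussed in this post.

```ruby
require "json"

# Sketch of a CloudFormation template that takes the Spot bid as a parameter.
# Logical names (SpotPrice, LaunchConfig, WorkerGroup) and the AMI ID are
# placeholders, not taken from any of the templates mentioned in this post.
template = {
  "AWSTemplateFormatVersion" => "2010-09-09",
  "Parameters" => {
    "SpotPrice" => {
      "Type" => "Number",
      "Description" => "Maximum hourly bid for each Spot Instance, in US dollars"
    }
  },
  "Resources" => {
    "LaunchConfig" => {
      "Type" => "AWS::AutoScaling::LaunchConfiguration",
      "Properties" => {
        "ImageId" => "ami-12345678",              # placeholder AMI
        "InstanceType" => "m1.large",
        "SpotPrice" => { "Ref" => "SpotPrice" }   # bid supplied at stack creation
      }
    },
    "WorkerGroup" => {
      "Type" => "AWS::AutoScaling::AutoScalingGroup",
      "Properties" => {
        "AvailabilityZones" => { "Fn::GetAZs" => "" },
        "LaunchConfigurationName" => { "Ref" => "LaunchConfig" },
        "MinSize" => "10",
        "MaxSize" => "10"
      }
    }
  }
}

puts JSON.pretty_generate(template)
```

Because the bid is a parameter rather than a literal, the same template can be reused at different price points without editing it.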

The parameter value can be used directly in the template, or it can be used in other ways. For example, our StarCluster template has been updated to include the Spot bid price as a parameter and to pass it to the starcluster command.

In addition to the StarCluster template that I mentioned above, we are also releasing two other templates today:

- The Bees With Machine Guns template gives you the power to create a swarm of bees (EC2 micro instances) to load test your web site.

- The Asynchronous Processing template adjusts the number of workers (EC2 instances) that are pulling data from an SQS queue, increasing the number of workers when the queue depth rises above a certain level and reducing it when the number of empty polls on the queue starts to grow. Even though it is of modest size, this template illustrates a number of clever techniques. It installs some packages, configures a crontab entry, loads some Perl code, and uses CloudWatch alarms for scaling.
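The scaling rule behind the Asynchronous Processing template can be approximated in a few lines. This Ruby sketch is an illustration under stated assumptions, not code from the template (which expresses the rule as CloudWatch alarms): the thresholds are invented, and the group is adjusted by one worker at a time within hypothetical minimum and maximum bounds.

```ruby
# Sketch of the queue-driven scaling rule described above. The thresholds and
# the one-worker-at-a-time adjustment are assumptions for illustration.
ScalingRule = Struct.new(:scale_up_depth, :scale_down_empty_polls,
                         :min_workers, :max_workers) do
  # Returns the new number of workers given the latest queue metrics.
  def desired_workers(current:, queue_depth:, empty_polls:)
    if queue_depth > scale_up_depth
      [current + 1, max_workers].min   # backlog is growing: add a worker
    elsif empty_polls > scale_down_empty_polls
      [current - 1, min_workers].max   # workers are idle: remove one
    else
      current                          # within bounds: leave the group alone
    end
  end
end

rule = ScalingRule.new(100, 50, 1, 8)
puts rule.desired_workers(current: 2, queue_depth: 250, empty_polls: 0)  # => 3
puts rule.desired_workers(current: 2, queue_depth: 0, empty_polls: 75)   # => 1
puts rule.desired_workers(current: 1, queue_depth: 0, empty_polls: 75)   # => 1 (already at minimum)
```

Using empty polls as the scale-down signal, rather than a queue depth of zero, avoids shrinking the group while workers are still draining a short burst.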

My advice? Spend some time digging into these templates to get a better understanding of how you can use the very potent combination of Spot Instances, Auto Scaling groups, and CloudFormation to design complex, parameterized application stacks that can be instantiated transactionally (all or nothing) with just a few clicks. Print them out, draw some diagrams, and gain a better appreciation for how they work. I guarantee that it will be time well spent, and that you will walk away with some really good ideas!

Notifications Application / Tutorial

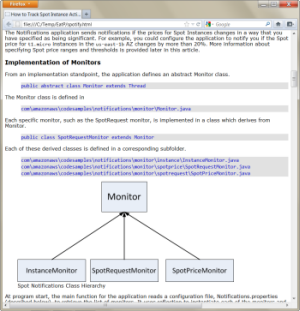

We’ve written a new article to show you how to track Spot instance activity programmatically. Along with this article, we’re distributing a sample application in source code form. As described in the article, the application uses the EC2 APIs to track three types of items, all within a designated region:

- The list of EC2 instances currently running in your account (Spot and On-Demand).

- Your current Spot Instance requests.

- Current prices for Spot Instances.

When the application detects a change in any of the items that it monitors, it uses the Simple Notification Service to send a notification. You can use this notification-based model to decouple your application’s bid generation mechanism from the actual processing logic, and you can also do a better job of dealing with processing interruptions if you are outbid and some of your Spot Instances are terminated.
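Here is a minimal Ruby sketch of the change-detection idea; it is not code from the sample application, which is written in Java. Each snapshot maps an ID (an instance, a Spot request, or an instance type) to its current state or price, and every difference between two polls becomes one message. A real monitor would publish each message to an SNS topic; this sketch just returns them so the logic can be checked offline. The extra Spot request IDs are hypothetical.

```ruby
# Compare two polls of the same resource type and describe every change.
# previous/current map an ID to its state (or price); nil means "not present".
def diff_snapshots(previous, current)
  changes = []
  (previous.keys | current.keys).sort.each do |id|
    before = previous[id]
    after  = current[id]
    next if before == after
    changes << "#{id}: FROM: #{before || 'none'} TO: #{after || 'gone'}"
  end
  changes
end

previous = { "sir-aca7a011" => "open", "sir-11111111" => "active" }
current  = { "sir-aca7a011" => "cancelled", "sir-11111111" => "active",
             "sir-22222222" => "open" }

# In a real monitor, each message would be published to an SNS topic here.
diff_snapshots(previous, current).each { |msg| puts msg }
```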

The notification is sent as a simple XML document; here’s a sample:

```xml
<PostNotification>
  <accountId>455364113843</accountId>
  <resourceId>sir-aca7a011</resourceId>
  <type>Amazon.EC2.Request.StateTransition</type>
  <code>FROM: open TO: cancelled</code>
  <message>Your Amazon EC2 Spot Request has had a state transition.</message>
</PostNotification>
```
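If you subscribe your own code to these notifications, extracting the fields is straightforward. This Ruby sketch uses REXML, which ships with Ruby, and splits the `<code>` value into its "from" and "to" states. The "FROM: x TO: y" pattern is inferred from this single sample rather than from a documented format, so treat the regular expression as an assumption.

```ruby
require "rexml/document"

# Pull the fields out of a notification like the sample above and split the
# state transition in <code> into its "from" and "to" states.
def parse_notification(xml)
  root = REXML::Document.new(xml).root
  field = ->(name) { root.elements[name]&.text.to_s.strip }
  # Pattern inferred from one sample; returns nils if the text doesn't match.
  from, to = field.call("code").match(/FROM:\s*(\S+)\s+TO:\s*(\S+)/)&.captures
  {
    account_id: field.call("accountId"),
    resource_id: field.call("resourceId"),
    type: field.call("type"),
    from: from,
    to: to
  }
end

sample = <<~XML
  <PostNotification>
    <accountId>455364113843</accountId>
    <resourceId>sir-aca7a011</resourceId>
    <type>Amazon.EC2.Request.StateTransition</type>
    <code>FROM: open TO: cancelled</code>
    <message>Your Amazon EC2 Spot Request has had a state transition.</message>
  </PostNotification>
XML

p parse_notification(sample)
```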

The application was written in Java using the AWS SDK for Java. Because the application stores all of its configuration information and persistent data in Amazon SimpleDB, you can make configuration changes (e.g. updating notification thresholds) by storing new values in the appropriate items in the application’s SimpleDB domain.

Here’s Dave

I interviewed Dave Ward of the EC2 Spot Instance team for The AWS Report. Here’s what Dave had to say:

One small correction: it turns out that the Chocolate + Peanut Butter analogy that I used is out of date. All of the cool kids on the EC2 team now use the term Crazy Delicious to refer to the unholy combination of Mr. Pibb and Red Vines.

Talk to Us

We would love to see what kinds of CloudFormation templates you come up with for use with Spot Instances. Please feel free to post them in the CloudFormation forum or leave a note on this post. Also, if you have thoughts on the features you want next on Spot, please let us know at spot-instance-feedback@amazon.com or via a note below.

— Jeff;

Two New AWS Getting Started Guides

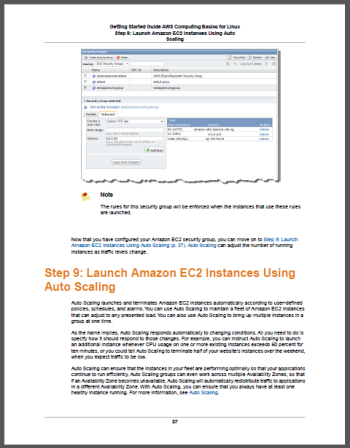

We’ve put together a pair of new Getting Started Guides for Linux and Microsoft Windows. Both guides will show you how to use EC2, Elastic Load Balancing, Auto Scaling, and CloudWatch to host a web application.

The Linux version of the guide (HTML, PDF) is built around the popular Drupal content management system. The Windows version (HTML, PDF) is built around the equally popular DotNetNuke CMS.

These guides are comprehensive. You will learn how to:

- Sign up for the services

- Install the command line tools

- Find an AMI

- Launch an Instance

- Deploy your application

- Connect to the Instance using the MindTerm SSH Client or PuTTY

- Configure the Instance

- Create a custom AMI

- Create an Elastic Load Balancer

- Update a Security Group

- Configure and use Auto Scaling

- Create a CloudWatch Alarm

- Clean up

Other sections cover pricing, costs, and potential cost savings.

We also have Getting Started Guides for Web Application Hosting, Big Data, and Static Website Hosting.

— Jeff;

Plat_Forms Contest in Germany – Not Your Typical Hackathon

We’re excited to work with the Plat_Forms programming contest, organized this spring at Freie Universität in Berlin.

Focus on Scalability & Cloud Architectures

The Plat_Forms contest has been around in Germany since 2007. Its hallmark is celebrating the diversity and strength of various development languages (Java EE, .NET, PHP, Perl, Python, Ruby, etc.). This year, the focus of the contest is entirely on cloud computing and scalability. Ulrich Stärk, the program organizer, explained: “AWS is technology agnostic, thus allowing for a fair comparison of the individual platforms. Apart from that, AWS is the market leader in IaaS services, thus making a perfect choice for us since we can expect that a lot of developers have either used AWS already or are interested in trying it now.”

The Coding Challenge

Unlike the typical hackathons or programming contests where developers can enter with radically different apps, Plat_Forms is all about giving everyone the same coding challenge. The organizers have established a set of requirements for the contest task and plan to evaluate all entries holistically, including on criteria such as application usability and robustness.

Some of the requirements for submissions include:

- It will be a web-based application, with a simple RESTful web service interface.

- It will have challenging Service Level Agreement (SLA) requirements such as number of concurrent users it needs to support, guaranteed response times, fault tolerance, etc.

- It will be some kind of messaging service, where users can send each other messages.

- It will require persistent storage of data.

- It may require integration with external systems or data sources, but using simple and standard kinds of mechanisms only (such as HTTP/REST).

- It will require scaling by operating on multiple nodes (computers), which must use a stateless operation mode (i.e., if a single node fails, the application as a whole must not fail and must not lose data).

- It must run completely on the Amazon Web Services infrastructure.

Bonus!

For Germany-based programmers entering the contest, there is an extra bonus prize of $1,000 in AWS credits to the winning teams, in addition to the prestige and prizes provided by the contest itself. The deadline for entering has just been extended to March 16, 2012 and the actual coding challenge will take place in April.

To learn more (guidelines, judging criteria, process etc.), please visit the Plat_Forms website and let us know if you enter! :)

-rodica

New Tagging for Auto Scaling Groups

You can now add up to 10 tags to any of your Auto Scaling Groups. You can also, if you’d like, propagate the tags to the EC2 instances launched from your groups.

Adding tags to your Auto Scaling groups will make it easier for you to identify and distinguish them.

Each tag has a name, a value, and an optional propagation flag. If the flag is set, then the corresponding tag will be applied to EC2 instances launched from the group. You can use this feature to label or distinguish instances created by distinct Auto Scaling groups. You might be using multiple groups to support multiple scalable applications, or multiple scalable tiers or components of a single application. Either way, the tags can help you keep your instances straight.
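The propagation rule is simple enough to express directly. This Ruby sketch models a group's tags as key/value pairs with a propagate flag and returns the subset that would be copied onto launched instances; the 10-tag limit comes from this post, while the tag names and values are made-up examples.

```ruby
# A group tag: key, value, and whether it propagates to launched instances.
GroupTag = Struct.new(:key, :value, :propagate)

MAX_GROUP_TAGS = 10 # per-group limit stated in this post

# Returns the tags an instance launched from the group would receive.
def instance_tags(group_tags)
  if group_tags.size > MAX_GROUP_TAGS
    raise ArgumentError, "at most #{MAX_GROUP_TAGS} tags per group"
  end

  group_tags.select(&:propagate).to_h { |t| [t.key, t.value] }
end

tags = [
  GroupTag.new("app", "checkout", true),
  GroupTag.new("tier", "web", true),
  GroupTag.new("owner", "payments-team", false) # stays on the group only
]
puts instance_tags(tags).inspect # only "app" and "tier" propagate
```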

Read more in the newest version of the Auto Scaling Developer Guide.

— Jeff;

Behind the Scenes of the AWS Jobs Page, or Scope Creep in Action

The AWS team is growing rapidly and we’re all doing our best to find, interview, and hire the best people for each job. In order to do my part to grow our team, I started to list the most interesting and relevant open jobs at the end of my blog posts. At first I searched our main job site for openings. I’m not a big fan of that site; it serves its purpose but the user interface is oriented toward low-volume searching. I write a lot of blog posts and I needed something better and faster.

Over a year ago I decided to scrape all of the jobs on the site and store them in a SimpleDB domain for easy querying. I wrote a short PHP program to do this. The program takes the three main search URLs (US, UK, and Europe/Asia/South Africa) and downloads the search results from each one in turn. Each set of results consists of a list of URLs to the actual job pages (e.g. Mgr – AWS Dev Support).

Early versions of my code downloaded the job pages sequentially. Since there are now 370 open jobs, this took a few minutes to run and I became impatient. I found Pete Warden’s ParallelCurl and adapted my code to use it. I was now able to fetch and process up to 16 job pages at a time, greatly reducing the time spent in the crawl phase.

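```php
require_once('parallelcurl.php'); // Pete Warden's ParallelCurl class
$PC = new ParallelCurl(16);       // fetch up to 16 job pages at once

// Queue one request per job page; JobPageFetched is the callback that parses it.
for ($i = 0; $i < count($JobLinks); $i++)
{
    $PC->startRequest($JobLinks[$i]['Link'], 'JobPageFetched', $i);
}

// Block until every outstanding request has completed.
$PC->finishAllRequests();
```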
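For comparison, here is a rough Ruby analogue of the same bounded-parallelism pattern, using a fixed pool of threads that pull URLs from a shared queue so that no more than 16 fetches are in flight at once. It is a sketch, not a port of ParallelCurl: the `fetch` callable is injected so the example runs without network access, and a real crawler would pass in something that performs the HTTP request (for example, a wrapper around `Net::HTTP.get`).

```ruby
# Fetch every URL with at most max_in_flight requests running concurrently.
# `fetch` is any callable taking a URL and returning the page body.
def fetch_all(urls, max_in_flight: 16, fetch:)
  raise ArgumentError, "max_in_flight must be at least 1" if max_in_flight < 1

  queue = Queue.new
  urls.each_with_index { |url, i| queue << [url, i] }

  results = Array.new(urls.size)
  workers = Array.new([max_in_flight, urls.size].min) do
    Thread.new do
      loop do
        begin
          url, i = queue.pop(true) # non-blocking pop
        rescue ThreadError
          break                    # queue drained: this worker is done
        end
        results[i] = fetch.call(url) # slot i keeps the original order
      end
    end
  end
  workers.each(&:join)
  results
end

pages = fetch_all(%w[job/1 job/2 job/3], max_in_flight: 2,
                  fetch: ->(url) { "<html>#{url}</html>" })
puts pages
```

Because each worker writes into the slot that matches the URL's position, results come back in the original order even though pages may finish in any order.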

My code also had to parse the job pages and to handle five different formatting variations. Once the pages were parsed it was easy to write the jobs to a SimpleDB domain using the AWS SDK for PHP.

Now that I had the data at hand, it was time to do something interesting with it. My first attempt at visualization included a tag cloud and some jQuery code to show the jobs that matched a tag.

I was never able to get this page to work as desired. There were some potential scalability issues because all of the jobs were loaded (but hidden), so I decided to abandon this approach.

I gave up on the fancy dynamic presentation and generated a simple static page (stored in Amazon S3, of course) instead, grouping the jobs by city.

My code uses the data stored in the SimpleDB domain to identify jobs that have appeared since the previous run. The new jobs are highlighted in the yellow box at the top of the page.
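Finding the new jobs amounts to a set difference between this run's job IDs and the IDs saved by the previous run. In this Ruby sketch the saved IDs are passed in as an array rather than read from SimpleDB, and the job records and their fields are hypothetical.

```ruby
require "set"

# Return the jobs from this crawl whose IDs were not seen in the previous run.
# previous_ids would come from the SimpleDB domain in the real system.
def new_jobs(previous_ids, current_jobs)
  seen = previous_ids.to_set
  current_jobs.reject { |job| seen.include?(job[:id]) }
end

previous_ids = %w[job-101 job-102]
current = [
  { id: "job-101", title: "Mgr - AWS Dev Support", city: "Seattle" },
  { id: "job-103", title: "Solutions Architect", city: "London" }
]
new_jobs(previous_ids, current).each { |job| puts "NEW: #{job[:title]} (#{job[:city]})" }
```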

I set up a cron job on an EC2 instance to run my code once per day. To make sure that the code ran as expected, I decided to have it send me an email at the conclusion of the run. Instead of hard-wiring my email address into the code, I created an SNS (Simple Notification Service) topic and subscribed to it. When SNS added support for SMS last month, I subscribed my phone number to the same topic.

I found the daily text message to be reassuring, and I decided to take it even further. I set up a second topic and published a notification to it for each new job, in human-readable, plain-text form.

The next step seemed obvious. With all of this data in hand, I could generate a tweet for each new job. I started to write the code for this and then discovered that I was reinventing a well-rounded wheel! After a quick conversation with my colleague Matt Wood, it turned out that he already had the right mechanism in place to publish a tweet for each new job.

Matt subscribed an SQS queue to my per-job notification topic. He used a CloudWatch alarm to detect a non-empty queue, and used the alarm to fire up an EC2 instance via Auto Scaling. When the queue is empty, a second alarm reduces the capacity of the group, thereby terminating the instance.

Being more clever than I, Matt used an AWS CloudFormation template to create and wire up all of the moving parts:

```json
"ProcessorInstance" : {
  "Type" : "AWS::AutoScaling::AutoScalingGroup",
  "Properties" : {
    "AvailabilityZones" : { "Fn::GetAZs" : "" },
    "LaunchConfigurationName" : { "Ref" : "LaunchConfig" },
    "MinSize" : "0",
    "MaxSize" : "1",
    "Cooldown" : "300",
    "NotificationConfiguration" : {
      "TopicARN" : { "Ref" : "EmailTopic" },
      "NotificationTypes" : [ "autoscaling:EC2_INSTANCE_LAUNCH",
                              "autoscaling:EC2_INSTANCE_LAUNCH_ERROR",
                              "autoscaling:EC2_INSTANCE_TERMINATE",
                              "autoscaling:EC2_INSTANCE_TERMINATE_ERROR" ]
    }
  }
},
```

You can also view and download the full template.
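The full template also has to connect the queue to the group. The following Ruby sketch is a hypothetical reconstruction, not Matt's actual template: it generates two `AWS::AutoScaling::ScalingPolicy` resources that set the `ProcessorInstance` group to exactly 1 or 0 instances, plus two `AWS::CloudWatch::Alarm` resources on the queue's `ApproximateNumberOfMessagesVisible` metric that trigger them. The queue's logical name (`JobQueue`), the other resource names, and the period and statistic are assumptions.

```ruby
require "json"

# Hypothetical alarm on the SQS queue's visible-message count that fires
# the given scaling policy. "JobQueue" is a placeholder logical name.
def queue_alarm(description, operator, policy)
  {
    "Type" => "AWS::CloudWatch::Alarm",
    "Properties" => {
      "AlarmDescription" => description,
      "Namespace" => "AWS/SQS",
      "MetricName" => "ApproximateNumberOfMessagesVisible",
      "Dimensions" => [{ "Name" => "QueueName",
                         "Value" => { "Fn::GetAtt" => ["JobQueue", "QueueName"] } }],
      "Statistic" => "Maximum",
      "Period" => "300",
      "EvaluationPeriods" => "1",
      "Threshold" => "0",
      "ComparisonOperator" => operator,
      "AlarmActions" => [{ "Ref" => policy }]
    }
  }
end

# Policy that sets the ProcessorInstance group to an exact size.
def scaling_policy(capacity)
  {
    "Type" => "AWS::AutoScaling::ScalingPolicy",
    "Properties" => {
      "AdjustmentType" => "ExactCapacity",
      "AutoScalingGroupName" => { "Ref" => "ProcessorInstance" },
      "ScalingAdjustment" => capacity.to_s
    }
  }
end

resources = {
  "ScaleUpPolicy"   => scaling_policy(1),
  "ScaleDownPolicy" => scaling_policy(0),
  "QueueNotEmpty"   => queue_alarm("Jobs waiting", "GreaterThanThreshold", "ScaleUpPolicy"),
  "QueueEmpty"      => queue_alarm("Queue drained", "LessThanOrEqualToThreshold", "ScaleDownPolicy")
}
puts JSON.pretty_generate({ "Resources" => resources })
```

Using `ExactCapacity` keeps the two alarms from stacking adjustments: a non-empty queue always means one instance, an empty one always means none.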

The instance used to process the new job positions runs a single Ruby script, and is bootstrapped from a standard base Amazon Linux AMI using CloudFormation.

The CloudFormation template passes in a simple bootstrap script using instance User Data, taking advantage of the cloud-init daemon which runs at startup on the Amazon Linux AMI. This in turn triggers CloudFormation’s own cfn-init process, which configures the instance for use based on information in the CloudFormation template.

A collection of packages is installed via the yum and rubygems package managers (including the AWS SDK for Ruby), the processing script is downloaded from S3 and installed, and a simple YAML-format configuration file is written to the instance; it contains the keys, Twitter configuration details, and queue names used by the processing script.
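Here is a sketch of how a processing script might load and sanity-check such a file, in Ruby with the standard `yaml` library. The section and key names are hypothetical; the post only says that the file holds keys, Twitter configuration details, and queue names.

```ruby
require "yaml"

# Top-level sections this hypothetical config must contain.
REQUIRED_KEYS = %w[aws twitter queues].freeze

# Parse the YAML text and fail fast if a required section is missing.
def load_processor_config(yaml_text)
  config = YAML.safe_load(yaml_text) || {}
  missing = REQUIRED_KEYS - config.keys
  raise KeyError, "config is missing: #{missing.join(', ')}" unless missing.empty?
  config
end

sample = <<~YAML
  aws:
    access_key_id: EXAMPLE_ACCESS_KEY
    secret_access_key: EXAMPLE_SECRET
  twitter:
    consumer_key: EXAMPLE_CONSUMER_KEY
    consumer_secret: EXAMPLE_CONSUMER_SECRET
  queues:
    new_jobs: aws-new-job-queue
YAML

config = load_processor_config(sample)
puts config["queues"]["new_jobs"]
```

Failing fast on a missing section makes a misconfigured instance obvious in its logs instead of leaving it silently idle.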

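```ruby
# Excerpt from the processing loop: msg is the SQS message being handled,
# and the final `end` closes the enclosing block.
notification = TwitterNotification.new(msg.body)

begin
  client.update(notification.update)
rescue StandardError => e # rescuing Exception would also swallow signals and exits
  log.debug "Error posting to Twitter: #{e}"
end

end
```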

The resulting tweets show up on the AWSCloud Twitter account.

At a certain point, we decided to add some geo-sophistication to the process. My code already identified the location of each job, so it was a simple matter to pass this along to Matt’s code. Given that I am located in Seattle and he’s in Cambridge (UK, not Massachusetts), we didn’t want to coordinate any type of switchover. Instead, I simply created another SNS topic and posted JSON-formatted messages to it. This loose coupling allowed Matt to make the switch at a time convenient to him.
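On the consuming side, each JSON message has to become a short tweet. This Ruby sketch assumes hypothetical `title`, `city`, and `url` fields (the post does not show the message format) and truncates the title so the whole tweet fits in 140 characters, Twitter's limit at the time.

```ruby
require "json"

TWEET_LIMIT = 140

# Build a tweet from one JSON job message (field names are assumptions),
# shortening the title rather than the location or link.
def tweet_for(json_message)
  job = JSON.parse(json_message)
  suffix = " (#{job['city']}) #{job['url']}"
  room = TWEET_LIMIT - suffix.length
  title = job["title"]
  if title.length > room
    title = room > 3 ? title[0, room - 3] + "..." : ""
  end
  "#{title}#{suffix}"[0, TWEET_LIMIT] # hard cap in case the suffix alone is too long
end

msg = { title: "Mgr - AWS Dev Support", city: "Seattle",
        url: "http://example.com/jobs/123" }.to_json
puts tweet_for(msg)
```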

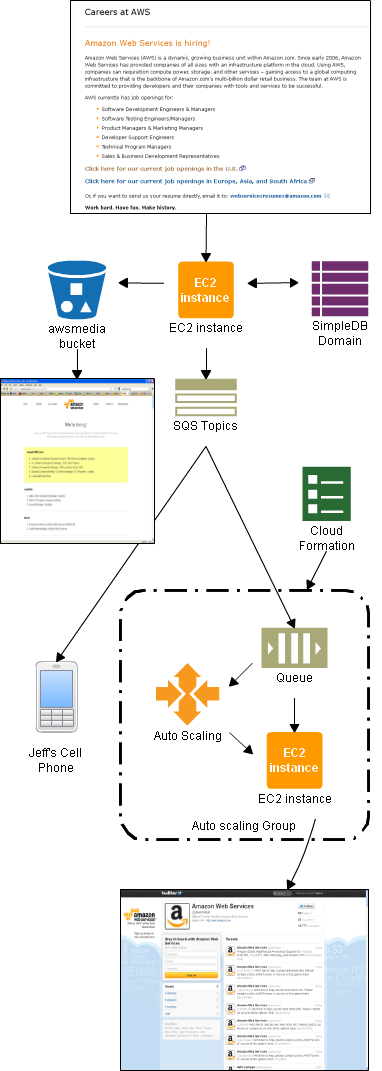

So, without any master plan in place, Matt and I have managed to create a clean system for finding, publishing, and broadcasting new AWS jobs. We made use of the following AWS technologies:

- S3 – Page storage.

- EC2 – Page and queue processing.

- SQS – Storage of queued notifications of new jobs.

- SNS – Publication of new job notifications.

- SimpleDB – Storage of job list.

- CloudWatch – Monitoring SQS queue to scale up and scale down.

- Auto Scaling – Implementation of scaling up and scaling down.

- CloudFormation – Stack construction.

- AWS SDK for PHP – My code.

- AWS SDK for Ruby – Matt’s code.

Here is a diagram to show you how it all fits together:

If you want to hook in to the job processing system, here are the SNS topic IDs:

- Run complete – arn:aws:sns:us-east-1:348414629041:aws-jobs-process

- New job found (human readable) – arn:aws:sns:us-east-1:348414629041:aws-new-job

- New job found (JSON) – arn:aws:sns:us-east-1:348414629041:aws-new-job-json

The topics are all set to be publicly readable so you can subscribe to them without any help from me. If you build something interesting, please feel free to post a comment so that I know about it.
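Before wiring one of these topics into your own code, it can help to check its region and account. This Ruby sketch splits an SNS topic ARN into its colon-separated fields (`arn:partition:service:region:account-id:topic`); the struct and method names are illustrative.

```ruby
# The parts of an SNS topic ARN.
TopicArn = Struct.new(:partition, :service, :region, :account_id, :topic)

def parse_topic_arn(arn)
  parts = arn.split(":", 6) # topic name is everything after the fifth colon
  unless parts.size == 6 && parts[0] == "arn" && parts[2] == "sns"
    raise ArgumentError, "not an SNS topic ARN: #{arn}"
  end
  TopicArn.new(*parts[1..])
end

arn = parse_topic_arn("arn:aws:sns:us-east-1:348414629041:aws-new-job-json")
puts "#{arn.topic} in #{arn.region}"
```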

The point of all of this is to make sure that you can track the newest AWS jobs. Please follow @AWSCloud and take a look at the list of All AWS Jobs.

— Jeff (with lots of help from Matt);

Facebook Developer Update: Meet RootMusic, Funzio, and 50Cubes

In honor of today’s Facebook Developer Conference, I’d like to recognize the success of our existing Facebook app developers and invite even more developers to kick-start their next Facebook app project with Amazon Web Services.

Quick Numbers

We crunched some numbers and found out that 70% of the 50 most popular Facebook apps leverage one or more AWS services. Many of their developers rely on AWS to provide them with compute, network, storage, database, and messaging services on a pay-as-you-go basis. In addition to Zynga’s popular FarmVille and CafeWorld, or games from Playfish and Wooga, many of the most exciting and popular Facebook apps are also running on AWS.

Here are a few examples:

RootMusic’s BandPage app (currently the #1 Music App on Facebook, and the #8 app overall on Facebook) helps bands and musicians build fan pages that will attract and hold the interest of an audience. RootMusic enables artists to tap into the passion their fans feel for their art and keep them engaged with an interactive experience. More than 250,000 bands of all shapes and sizes, from Rihanna and Arctic Monkeys to bands you haven’t heard of yet but may soon discover, have already made RootMusic’s BandPage their central online space for connecting with their fans. Artists use it to share music, release special-edition songs and albums, share photos, and list events and shows. BandPage now supports 30 million monthly active users from all over the world. Behind all the capabilities that ignite BandPage’s music fan communities lies a well-thought-out, highly distributed, and highly scalable backend, powered by Amazon Web Services:

“In 20 seconds, we can double our server capacity. In a high-growth environment like ours, it’s very important for us to trust that we have the best support to give to the music community around the world. Five years ago, we would have crashed and been down without knowing when we would be back. Now, because of Amazon’s continued innovation, we can provide the best technology and scale to serve music communities’ needs around the world.” – Christopher Tholen, RootMusic CTO.

Funzio’s Crime City is #7 in the top 10 Facebook apps, and it’s the highest-rated Facebook game to reach 1 million daily users, with an average user rating of 4.9 out of 5. Crime City currently has 5.5 million monthly active users, with 10 million monthly active users at its peak. The iPhone version was recently listed among the top 5 games in the Apple App Store and as the #1 free game in 11 countries and counting. Crime City sports modern, 3D-like graphics that look great on both Facebook and iPhone, and has a collection of hundreds of virtual items that players can collect.

Behind this rich user experience across multiple platforms are Funzio’s business acumen in promoting the app and a strong backend that leverages many AWS products to serve its viral and highly active user base. Funzio uses Amazon EC2 to quickly scale up and down based on demand, and Amazon RDS to store game and current-state information. They use Amazon CloudFront to optimize delivery to a global, widely distributed audience and to meet Facebook’s SSL certificate requirements.

“At Funzio, we use AWS exclusively to host the infrastructure for our games. When developing social games, you need to be ready for that traffic burst for a hit game in a moment’s notice. AWS provides us with the flexibility to quickly and efficiently scale our applications at all layers, from increasing database capacity in RDS, to adding more application or caching servers within minutes in EC2. Amazon’s cloud services allow us to focus our efforts on developing quality games and not on worrying about managing our technology operations.” – Ram Gudavalli, Funzio CTO.

50Cubes, the creator of Mall World, is a startup that has developed one of the most highly regarded, longest-running, and most successful female-focused social games on Facebook. With over 5 million monthly active users, Mall World has a track record of being not only one of the first games of its kind but also the top one for the past 1.5 years, and it continues to entice users worldwide.

50Cubes powers Mall World and the other games it has developed with a suite of AWS products. Of these, the team values Auto Scaling and Amazon EBS the most: these products help them effortlessly scale their Amazon EC2 instances, which they use exclusively, up and down with user demand. Their database clusters are a mix of MySQL and other key-value databases, all hosted and managed by the team on Amazon EC2, using EBS for storage.

“One thing that impresses me the most about AWS services is that they have rapidly iterated and improved their products and services over the past year and half, executing almost like a startup of our scale.” – Fred Jin, 50Cubes CTO.

Get Started: Your Facebook App, Powered by AWS

Doug Purdy, Director of Developer Relations at Facebook, said:

“AWS is great for Facebook developers: you can start small, test, and prove your ideas. As your app grows, you can easily scale up your resources to keep your users engaged and connected. AWS allows developers to build highly available, highly scalable, cost-efficient apps that provide the type of rich and responsive user experiences that our global audience has grown to expect.”

To make it as easy as possible for you to get started, we’ve updated our Building Facebook Apps on AWS page. We have also improved and refreshed our Facebook App AMI. The new AMI uses AWS CloudFormation to install the latest versions of the Facebook PHP SDK and the AWS SDK for PHP at startup time. If you want to learn more about developing AWS applications in PHP, feel free to check out the free chapters of my AWS programming book (or buy a complete copy).

— Jeff;

AWS Summer Startups: ShowNearby

ShowNearby is a leading location-based service in Singapore and an early adopter of the Android platform. Unlike many mobile apps out there, ShowNearby started with deployment on Android and then moved on to the iPhone by mid-2010 and BlackBerry by fall 2010. Today, the ShowNearby flagship app is available on Android, iPhone, and BlackBerry, and reports approximately 100 million mobile searches conducted across all its platforms.

Due to the success of our application, we grew very rapidly in a short period of time. When we launched on the popular iOS platform and subsequently BlackBerry, we were blown away by the huge surge of users who started using ShowNearby. In fact, in December 2010, ShowNearby became the top downloaded app in the App Store, edging out thousands of other popular free apps in Singapore! It was then that we realized we needed a scalable solution to handle the increasing load and strain on our servers, which our existing provider was unable to provide.

Our infrastructure at the time was hosted with a local service provider, which was unable to cope with the high traffic peaks we were facing. We analyzed a few vendors and decided to go ahead with Amazon because of its reliability, high availability, range of services, and pricing, but mostly because of its solid customer support.

As part of our deployment, we added AWS services incrementally. We currently make extensive use of Amazon EC2 instances with Auto Scaling, Amazon Relational Database Service (RDS), Amazon Simple Queue Service (SQS), Amazon CloudWatch, and Amazon Simple Storage Service (S3).

The next item on our list is automating the deployment of infrastructure environments with AWS CloudFormation, as well as optimizing content delivery globally with Amazon CloudFront.

Choosing the Tech Stack That Makes Business Sense

ShowNearby currently uses the LAMP stack for most of our web services. Delivering accurate, always-available, location-based data is ShowNearby’s top priority. That is why we chose AWS.

Other important reasons to choose the cloud and AWS: speed and agility to create and tear down infrastructure as and when it is needed; good, fast network accessibility for our app; the ability to scale up and out when needed; and the ability to duplicate infrastructure into new regions.

Reaching Automation Nirvana with AWS

We chose to use AWS’s Linux-based AMI and dynamically build on top of it using well-defined, automatic configuration. Now, every time an instance is started, we are sure the infrastructure is in a known state. Admittedly, a lot of hard work is involved to achieve Automation Nirvana, but knowing precisely what works at the end of the day helps us sleep at night.

- We use Amazon S3 to store infrastructure configuration and user-provided content and images. ShowNearby’s business currently operates in multiple regions and is marching into new ones, so S3 is a natural precursor to AWS’s CloudFront content distribution service.

- We use SQS to help process user behaviour and to determine usage patterns. We use this to provide our dear users with a better and, hopefully, more personalised experience.

- We use Spot Instances for early development and testing servers.

- We use CloudWatch extensively – how could we do without it?

- We use RDS for our hosted MySQL database needs, of course.

- We make extensive use of the command-line and PHP AWS API tools, which gives us greater business agility.

Words of Wisdom for Mobile Startups

We would tell them to find partners who can be good friends at the same time. The race is long and tough, so better do it enjoying every step of the way. There is a window of opportunity in Asia now open to unleash your full potential, show what you are capable of and you’ll be rewarded.

Today, if we need to refresh or update a web application, we start new instances and retire the old ones. Looking ahead, we want to shorten the time between releases still further, so we are working to improve our already solid infrastructure and configuration management. We are also investigating further automation with Chef, Puppet, or similar tools.

——————————————————

Enter Your Startup in the AWS Start-up Challenge!

This year's AWS Start-up Challenge is a worldwide competition with prizes at all levels, including up to $100,000 in cash, AWS credits, and more for the grand prize winner. Seven finalists receive $10,000 in AWS credits and five regional semi-finalists receive $2,500 in AWS credits. All eligible entries receive $25 in AWS credits. Learn more and enter today!

You can also follow @AWSStartups on Twitter for updates.

-rodica

Auto Scaling – Notifications, Recurrence, and More Control

We’ve made some important updates to EC2’s Auto Scaling feature. You now have additional control of the auto scaling process, and you can receive additional information about scaling operations.

Here’s a summary:

- You can now elect to receive notifications from Amazon SNS when Auto Scaling launches or terminates EC2 instances.

- You can now set up recurrent scaling operations.

- You can control the process of adding newly launched EC2 instances to your Elastic Load Balancer.

- You can now delete an entire Auto Scaling group with a single call to the Auto Scaling API.

- Auto Scaling instances are now tagged with the name of their Auto Scaling group.

Notifications

The Amazon Simple Notification Service (SNS) allows you to create topics and to publish notifications to them. SNS can deliver the notifications as HTTP or HTTPS POSTs, email (SMTP, either plain-text or in JSON format), or as a message posted to an SQS queue.

You can now instruct Auto Scaling to send a notification when it launches or terminates an EC2 instance. There are actually four separate notifications: EC2_INSTANCE_LAUNCH, EC2_INSTANCE_LAUNCH_ERROR, EC2_INSTANCE_TERMINATE, and EC2_INSTANCE_TERMINATE_ERROR. You can use these notifications to track the size of each of your Auto Scaling groups, or you can use them to initiate other types of application processing or bookkeeping.
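To make the bookkeeping idea concrete, here is a minimal Python sketch. It builds the keyword arguments for boto3's `put_notification_configuration()` call, using the `autoscaling:`-prefixed notification type strings that API accepts. It also keeps a running instance count per group from the notification messages. It assumes each SNS message body is JSON with `Event` and `AutoScalingGroupName` fields. `GroupSizeTracker` and `notification_config_params` are hypothetical helpers for illustration only.

```python
import json
from collections import Counter

# Notification types as accepted by the Auto Scaling PutNotificationConfiguration API.
NOTIFICATION_TYPES = [
    "autoscaling:EC2_INSTANCE_LAUNCH",
    "autoscaling:EC2_INSTANCE_LAUNCH_ERROR",
    "autoscaling:EC2_INSTANCE_TERMINATE",
    "autoscaling:EC2_INSTANCE_TERMINATE_ERROR",
]


def notification_config_params(group_name, topic_arn):
    """Keyword arguments for boto3's autoscaling put_notification_configuration()."""
    return {
        "AutoScalingGroupName": group_name,
        "TopicARN": topic_arn,
        "NotificationTypes": list(NOTIFICATION_TYPES),
    }


class GroupSizeTracker:
    """Hypothetical helper: tracks per-group instance counts from notifications.

    Assumes each message body is JSON with "Event" and "AutoScalingGroupName" keys.
    """

    def __init__(self):
        self.sizes = Counter()   # net launches minus terminations per group
        self.errors = Counter()  # failed launches/terminations per group

    def handle(self, message_body):
        msg = json.loads(message_body)
        group = msg.get("AutoScalingGroupName")
        event = msg.get("Event", "")
        if event == "autoscaling:EC2_INSTANCE_LAUNCH":
            self.sizes[group] += 1
        elif event == "autoscaling:EC2_INSTANCE_TERMINATE":
            self.sizes[group] -= 1
        elif event.endswith("_ERROR"):
            # A failed launch or termination does not change the group size.
            self.errors[group] += 1
        return self.sizes[group]
```

The counts are relative to when tracking started. To report absolute sizes, you would seed them from a one-time describe call before processing notifications.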

George Reese, this feature is for you!

Recurrent Scaling Operations

Scheduled scaling actions for an Auto Scaling group can now include a recurrence, specified as a Cron string. If your Auto Scaling group manages a fleet of web servers, you can scale it up and down to match expected traffic. For example, if you send out a newsletter each Monday afternoon and expect a flood of click-throughs, you can use a recurrent scaling event to ensure that you have enough servers running to handle the traffic. Or, you can use this feature to launch one or more servers to run batch processes on a periodic basis, such as processing log files each morning.
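This Python sketch shows when a recurrence such as "every Monday at 13:00" would fire. It assumes the Recurrence value uses the standard five-field Unix cron layout: minute, hour, day of month, month, day of week. It ignores time zones. The matcher is a hypothetical helper and is not part of any AWS API. `scheduled_action_params` builds keyword arguments for boto3's `put_scheduled_update_group_action()`. The group name, action name and sizes in the example are made up.

```python
from datetime import datetime, timedelta

# (low, high) bounds for the five cron fields, in order:
# minute, hour, day of month, month, day of week (0 = Sunday; 7 is not supported here).
BOUNDS = [(0, 59), (0, 23), (1, 31), (1, 12), (0, 6)]


def parse_field(text, low, high):
    """Expand one field such as "*", "5", "1-5", "*/15" or "1,3,5" into a set of ints."""
    values = set()
    for part in text.split(","):
        step = 1
        if "/" in part:
            part, step_text = part.split("/")
            step = int(step_text)
        if part == "*":
            start, end = low, high
        elif "-" in part:
            start, end = (int(x) for x in part.split("-"))
        else:
            start = end = int(part)
        values.update(range(start, end + 1, step))
    return values


def parse_cron(expr):
    fields = expr.split()
    if len(fields) != 5:
        raise ValueError("expected five cron fields: " + expr)
    sets = [parse_field(f, lo, hi) for f, (lo, hi) in zip(fields, BOUNDS)]
    # Record whether the two day fields are restricted (do not start with "*").
    restricted = (not fields[2].startswith("*"), not fields[4].startswith("*"))
    return sets, restricted


def cron_matches(expr, when, parsed=None):
    """True if `when` (to the minute) satisfies the cron expression."""
    (minutes, hours, days, months, weekdays), (dom_restricted, dow_restricted) = parsed or parse_cron(expr)
    cron_weekday = (when.weekday() + 1) % 7  # Python: Monday = 0; cron: Sunday = 0
    day_ok, weekday_ok = when.day in days, cron_weekday in weekdays
    # Classic cron rule: if both day fields are restricted, matching either one suffices.
    if dom_restricted and dow_restricted:
        day_match = day_ok or weekday_ok
    else:
        day_match = day_ok and weekday_ok
    return when.minute in minutes and when.hour in hours and when.month in months and day_match


def next_run(expr, after):
    """Next matching minute strictly after `after`, searching up to one year ahead."""
    parsed = parse_cron(expr)
    t = after.replace(second=0, microsecond=0) + timedelta(minutes=1)
    for _ in range(366 * 24 * 60):
        if cron_matches(expr, t, parsed):
            return t
        t += timedelta(minutes=1)
    return None


def scheduled_action_params(group, name, recurrence, min_size, max_size, desired):
    """Keyword arguments for boto3's autoscaling put_scheduled_update_group_action()."""
    return {
        "AutoScalingGroupName": group,
        "ScheduledActionName": name,
        "Recurrence": recurrence,
        "MinSize": min_size,
        "MaxSize": max_size,
        "DesiredCapacity": desired,
    }


# Example: the Monday-afternoon newsletter surge (2024-01-01 was a Monday).
print(next_run("0 13 * * 1", datetime(2024, 1, 1, 14, 0)))  # 2024-01-08 13:00:00
```

A scale-down action would normally be paired with the scale-up action, for example "0 18 * * 1" with a smaller desired capacity.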

Instance Addition Control

The Auto Scaling service executes a number of processes to manage each Auto Scaling group. These processes include instance launching and termination, and health checks. A full list of the processes, with a complete description of each one, can be found in the Auto Scaling documentation.

The SuspendProcesses and ResumeProcesses APIs give you the ability to suspend and later resume each type of process for a particular Auto Scaling group. In some applications, suspending certain Auto Scaling processes allows for better coordination with other aspects of the application.

With this release, you now have control of an additional process, AddToLoadBalancer. This can be particularly handy when newly launched EC2 instances must be initialized or verified in some way before they are ready to accept traffic.
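The warm-up pattern can be sketched in Python with boto3-style client objects. Suspend AddToLoadBalancer on the group, so Auto Scaling launches instances without putting them behind the load balancer. Then register each instance yourself once a warm-up check passes, and optionally resume the process afterwards. `suspend_processes`, `resume_processes` and the Classic Load Balancer call `register_instances_with_load_balancer` exist in boto3. The `is_ready` check, the polling loop and the helper names are hypothetical.

```python
import time


def suspend_lb_registration(autoscaling, group):
    """Stop Auto Scaling from adding new instances to the load balancer."""
    autoscaling.suspend_processes(
        AutoScalingGroupName=group, ScalingProcesses=["AddToLoadBalancer"]
    )


def register_when_ready(elb, lb_name, instance_ids, is_ready, poll_seconds=10, max_polls=60):
    """Register each instance with a Classic Load Balancer once is_ready(id) is True.

    `is_ready` is a caller-supplied warm-up check (for example, an HTTP probe).
    Returns the instance IDs that were registered, in registration order.
    """
    pending = list(instance_ids)
    registered = []
    for _ in range(max_polls):
        ready = [i for i in pending if is_ready(i)]
        if ready:
            elb.register_instances_with_load_balancer(
                LoadBalancerName=lb_name,
                Instances=[{"InstanceId": i} for i in ready],
            )
            registered.extend(ready)
            pending = [i for i in pending if i not in ready]
        if not pending:
            break
        time.sleep(poll_seconds)
    return registered


def resume_lb_registration(autoscaling, group):
    """Let Auto Scaling add future launches to the load balancer again."""
    autoscaling.resume_processes(
        AutoScalingGroupName=group, ScalingProcesses=["AddToLoadBalancer"]
    )
```

Passing the clients in as parameters keeps the logic testable with simple stand-ins. In practice you would pass `boto3.client("autoscaling")` and `boto3.client("elb")`.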

Hassle-Free Group Deletion

You can now vanquish an entire Auto Scaling group to oblivion with a single call to DeleteAutoScalingGroup. You'll need to set the new ForceDelete parameter to true in order to do this. Before ForceDelete, you had to wait until all instances in an Auto Scaling group were terminated before you were allowed to delete the group. Now Auto Scaling terminates all running instances in the group for you, so there's no need to wait.
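This Python sketch contrasts the two approaches using a boto3-style Auto Scaling client. The forced path is a single `delete_auto_scaling_group()` call with `ForceDelete=True`. The other path approximates the earlier workflow: shrink the group to zero, poll `describe_auto_scaling_groups()` until no instances remain, then delete. The `delete_group` helper and its polling interval are assumptions for illustration.

```python
import time


def delete_group(autoscaling, group, force=True, poll_seconds=15):
    """Delete an Auto Scaling group.

    force=True:  one DeleteAutoScalingGroup call with ForceDelete terminates the
                 group's instances and deletes the group.
    force=False: approximate the older workflow (shrink to zero, wait for the
                 instances to go away, then delete).
    """
    if force:
        autoscaling.delete_auto_scaling_group(AutoScalingGroupName=group, ForceDelete=True)
        return
    autoscaling.update_auto_scaling_group(
        AutoScalingGroupName=group, MinSize=0, MaxSize=0, DesiredCapacity=0
    )
    while True:
        resp = autoscaling.describe_auto_scaling_groups(AutoScalingGroupNames=[group])
        groups = resp["AutoScalingGroups"]
        if not groups or not groups[0]["Instances"]:
            break  # no instances left, so deletion is now allowed
        time.sleep(poll_seconds)
    autoscaling.delete_auto_scaling_group(AutoScalingGroupName=group)
```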

Easier Identification

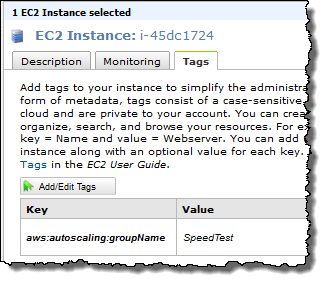

Instances launched by Auto Scaling are now tagged with the name of their Auto Scaling group so that you can find and manage them more easily. The Auto Scaling group tag is immutable and doesnt count towards the EC2 limit of 10 tags per instance. Here is how the EC2 console would show an instance of the Auto Scaling group SpeedTest:
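The tag can also be used from code. This Python sketch assumes the tag key is `aws:autoscaling:groupName`, which is the key current EC2 uses for this tag. It builds a `Filters` argument for boto3's `describe_instances()` that selects one group's instances. It also groups the instances in a `describe_instances` response by that tag. The helper names are hypothetical.

```python
from collections import defaultdict

GROUP_TAG = "aws:autoscaling:groupName"  # tag key assumed for the Auto Scaling group name


def group_filter(group):
    """Filters argument for boto3's ec2 describe_instances() selecting one group's instances."""
    return [{"Name": "tag:" + GROUP_TAG, "Values": [group]}]


def instances_by_group(reservations):
    """Map Auto Scaling group name -> instance IDs from a describe_instances response.

    Instances without the group tag are collected under the key None.
    """
    result = defaultdict(list)
    for reservation in reservations:
        for instance in reservation.get("Instances", []):
            tags = {t["Key"]: t["Value"] for t in instance.get("Tags", [])}
            result[tags.get(GROUP_TAG)].append(instance["InstanceId"])
    return dict(result)
```

For example, `ec2.describe_instances(Filters=group_filter("SpeedTest"))` would return only the instances in the SpeedTest group.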

You can read more about these new features on the Auto Scaling page or in the Auto Scaling documentation.

These new features were implemented in response to feedback from our users. Please feel free to leave your own feedback in the EC2 forum.

— Jeff;

Upcoming Event: AWS Tech Summit, London

I’m very pleased to invite you all to join the AWS team in London, for our first Tech Summit of 2011. We’ll take a quick, high level tour of the Amazon Web Services cloud platform before diving into the technical detail of how to build highly available, fault tolerant systems, host databases and deploy Java applications with Elastic Beanstalk.

We’re also delighted to be joined by three expert customers who will be discussing their own, real world use of our services:

- Francis Barton, from Costcutter

- Richard Churchill, from Servicetick

- Richard Holland, from Eagle Genomics

So if you’re a developer, architect, sysadmin or DBA, we look forward to welcoming you to the Congress Centre in London on the 17th of March.

We had some great feedback from our last summit in November, and this event looks set to be our best yet.

The event is free, but you’ll need to register.

~ Matt

New Webinar: High Availability Websites

As part of a new monthly series of hands-on webinars, I'll be giving a technical review of building, managing and maintaining high availability websites and web applications using Amazon's cloud computing platform.

Hosting websites and web applications is a very common use of our services, and in this webinar we’ll take a hands-on approach to websites of all sizes, from personal blogs and static sites to complex multi-tier web apps.

Join us on January 28 at 10:00 AM (GMT) for this 60-minute technical web-based seminar, where we'll aim to cover:

- Hosting a static website on S3

- Building highly available, fault tolerant websites on EC2

- Adding multiple tiers for caching, reverse proxies and load balancing

- Autoscaling and monitoring your website

Using real-world case studies and tried-and-tested examples, we'll explore key concepts and best practices for working with websites and on-demand infrastructure.

The session is free, but you’ll need to register!

See you there.

~ Matt